Executive Summary

- Decomposes complex tasks into sequential sub-tasks to reduce hallucination and increase output precision.

- Enables stateful logic within stateless LLM environments by passing outputs as context for subsequent calls.

- Keeps each prompt focused on a narrow data extraction or transformation goal, which can reduce wasted tokens, though every added link costs an extra model call.

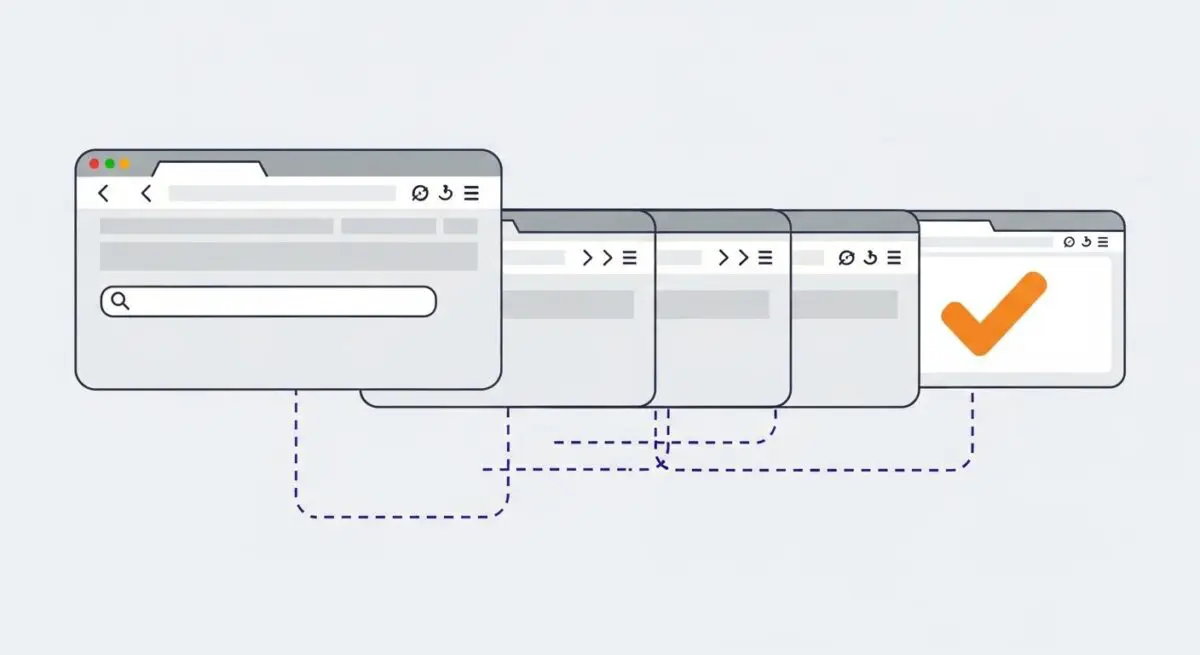

What is Prompt Chaining?

Prompt chaining is an architectural pattern in AI engineering where the output of one Large Language Model (LLM) call is programmatically passed as the input or context for a subsequent prompt. This technique moves away from single-shot prompting, instead decomposing a complex objective into a series of discrete, manageable sub-tasks. By modularizing the workflow, developers can enforce specific constraints at each stage, which tends to improve fidelity and adherence to technical requirements.

In the context of autonomous agents and data pipelines, prompt chaining facilitates state management within inherently stateless LLM environments. Each link in the chain can be optimized for a specific function—such as data extraction, sentiment analysis, or structural transformation—before the final payload is synthesized. This granular control is essential for building production-grade applications that require consistent, structured data outputs like JSON or XML.
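
As a minimal sketch of the pattern, the chain below passes each link's output to the next link as its only input. The `fake_llm` function, its canned responses, and the three system prompts are hypothetical stand-ins so the example runs offline; a real pipeline would swap in a provider SDK call with the same shape.

```python
from typing import Callable

# Assumed signature for an LLM call: (system prompt, user content) -> text.
LLMCall = Callable[[str, str], str]

def fake_llm(system: str, user: str) -> str:
    """Deterministic stub standing in for a real model call (hypothetical)."""
    if system.startswith("You are a data extractor"):
        return "topic=trail shoes; audience=beginners"
    if system.startswith("You are an outline writer"):
        return "1. Intro | 2. Fit | 3. Care (from: " + user + ")"
    return "DRAFT <- " + user

def run_chain(brief: str, llm: LLMCall) -> dict[str, str]:
    """Run three links; each link sees only the previous link's output."""
    facts = llm("You are a data extractor. Return key facts.", brief)
    outline = llm("You are an outline writer. Use the facts given.", facts)
    draft = llm("You are a copy editor. Expand the outline.", outline)
    return {"facts": facts, "outline": outline, "draft": draft}

result = run_chain("Write about trail shoes for beginners.", fake_llm)
print(result["draft"])
```

The state lives entirely in the values handed from one call to the next, which is how a chain simulates memory across otherwise stateless calls.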

The Real-World Analogy

Consider the operation of a professional architectural firm. A single architect does not produce a finished skyscraper blueprint in one sitting. Instead, the process is chained: first, a surveyor provides site data; then, a structural engineer calculates load-bearing requirements; next, an aesthetic designer creates the facade; and finally, a project manager synthesizes these inputs into a master plan. Each specialist focuses on a narrow domain, using the previous specialist’s output to ensure the final structure is both safe and functional.

Why is Prompt Chaining Critical for Autonomous Workflows and AI Content Ops?

Prompt chaining underpins scalable AI Content Ops because it reduces the risk of model hallucination and instruction drift. When an LLM is asked to perform too many tasks in a single prompt, it often fails to honor all constraints equally. Chaining lets each call focus on one specific transformation at a time, which helps maintain brand voice and factual accuracy in programmatic SEO.

Furthermore, chaining enables conditional logic and error handling within automated pipelines. If an intermediate prompt fails to produce a valid JSON payload, the system can trigger a retry or a fallback sequence rather than proceeding with corrupted data. This approach, which suits serverless execution, avoids wasted tokens and run time by not executing downstream tasks when upstream dependencies are not met.
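
The retry-or-fallback behavior described above might look like the following sketch. The `call_with_retry` helper, the flaky stub model, and the three-attempt limit are illustrative assumptions, not part of any particular library.

```python
import json
from typing import Callable, Optional

def call_with_retry(llm: Callable[[str], str], prompt: str,
                    max_attempts: int = 3) -> Optional[dict]:
    """Return the first response that parses as a JSON object, else None."""
    for _ in range(max_attempts):
        raw = llm(prompt)
        try:
            payload = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed output: retry this link only
        if isinstance(payload, dict):
            return payload
    return None

def make_flaky_llm():
    """Hypothetical stub: answers with prose once, then with valid JSON."""
    calls = {"n": 0}

    def llm(prompt: str) -> str:
        calls["n"] += 1
        if calls["n"] == 1:
            return "Sure! Here is the data you asked for."
        return '{"title": "Trail Shoes 101", "keywords": ["trail", "shoes"]}'

    return llm, calls

llm, calls = make_flaky_llm()
extracted = call_with_retry(llm, "Extract title and keywords as JSON.")

if extracted is None:
    downstream = "skipped"  # fallback: spend no tokens on later links
else:
    downstream = f"Write a meta description for: {extracted['title']}"
```

Only the failing link is retried, and the downstream prompt is built only once a valid payload exists.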

Best Practices & Implementation

- Implement Structured Output Validation: Use Pydantic or JSON Schema to validate the output of each chain link before passing it to the next to prevent cascading errors.

- Minimize Context Carryover: Only pass the essential data required for the next step to keep the context window clean and reduce API costs.

- Use Specialized System Instructions: Tailor the system prompt for each link in the chain to focus exclusively on the sub-task at hand (e.g., “You are a data extractor” vs “You are a copy editor”).

- Incorporate Human-in-the-Loop (HITL) Checkpoints: For high-stakes workflows, insert a manual review step between critical links in the chain to ensure quality control.
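
The first two practices can be sketched together: validate a link's output, then forward only the fields the next link needs. To stay dependency-free, this example swaps in a standard-library dataclass with manual checks where a production pipeline would more likely use Pydantic or JSON Schema; the `Extraction` schema and the five-keyword cap are assumptions made for illustration.

```python
import json
from dataclasses import dataclass

@dataclass(frozen=True)
class Extraction:
    """Shape expected from the extraction link (hypothetical schema)."""
    title: str
    keywords: list[str]

def validate_extraction(raw: str) -> Extraction:
    """Parse and type-check a link's output; raise ValueError on mismatch."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    title = data.get("title")
    keywords = data.get("keywords")
    if not isinstance(title, str) or not title.strip():
        raise ValueError("'title' must be a non-empty string")
    if not isinstance(keywords, list) or not all(isinstance(k, str) for k in keywords):
        raise ValueError("'keywords' must be a list of strings")
    return Extraction(title=title, keywords=keywords)

def next_link_input(item: Extraction) -> str:
    """Carry over only what the next link needs, not the whole raw response."""
    return json.dumps({"title": item.title, "keywords": item.keywords[:5]})

raw_output = ('{"title": "Trail Shoes 101", "keywords": ["trail", "shoes"], '
              '"notes": "long reasoning..."}')
item = validate_extraction(raw_output)
payload = next_link_input(item)
```

Here the extra `notes` field is dropped before the next call, so it never consumes context in later links.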

Common Mistakes to Avoid

One frequent error is creating excessive latency by over-chaining; if a task can be reliably handled in two steps, do not use five. Another common pitfall is failing to account for token accumulation; as context is passed down the chain, the total token count grows with each link unless it is strictly managed. Finally, many developers neglect to implement state recovery, so a failure in the middle of a long chain forces a full restart rather than a resume from the last successful checkpoint.
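
One way to approach state recovery is to persist each link's output and skip completed links on a rerun. The sketch below assumes a JSON file as the checkpoint store and step names as keys; both are illustrative choices, and it also assumes a rerun uses the same initial input as the failed run.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable

Step = tuple[str, Callable[[str], str]]

def run_with_checkpoints(steps: list[Step], initial: str, path: Path) -> str:
    """Run steps in order, saving each output so a rerun skips finished steps."""
    done: dict[str, str] = json.loads(path.read_text()) if path.exists() else {}
    current = initial
    for name, fn in steps:
        if name in done:
            current = done[name]  # resume: reuse the saved output
            continue
        current = fn(current)     # may raise; earlier checkpoints stay on disk
        done[name] = current
        path.write_text(json.dumps(done))
    return current

calls = {"extract": 0, "outline": 0}

def extract(text: str) -> str:
    calls["extract"] += 1
    return text.upper()

def outline(text: str) -> str:
    calls["outline"] += 1
    if calls["outline"] == 1:
        raise RuntimeError("simulated mid-chain failure")
    return f"outline of {text}"

steps = [("extract", extract), ("outline", outline)]
checkpoint = Path(tempfile.mkdtemp()) / "chain_state.json"

try:
    run_with_checkpoints(steps, "trail shoes", checkpoint)
except RuntimeError:
    pass  # first run fails at the second link

final = run_with_checkpoints(steps, "trail shoes", checkpoint)
```

On the second run the `extract` link is not called again; only the failed link and anything after it are executed.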

Conclusion

Prompt chaining is a fundamental requirement for moving beyond basic AI chat interfaces toward robust, autonomous data pipelines that drive modern SEO and digital automation.