Executive Summary

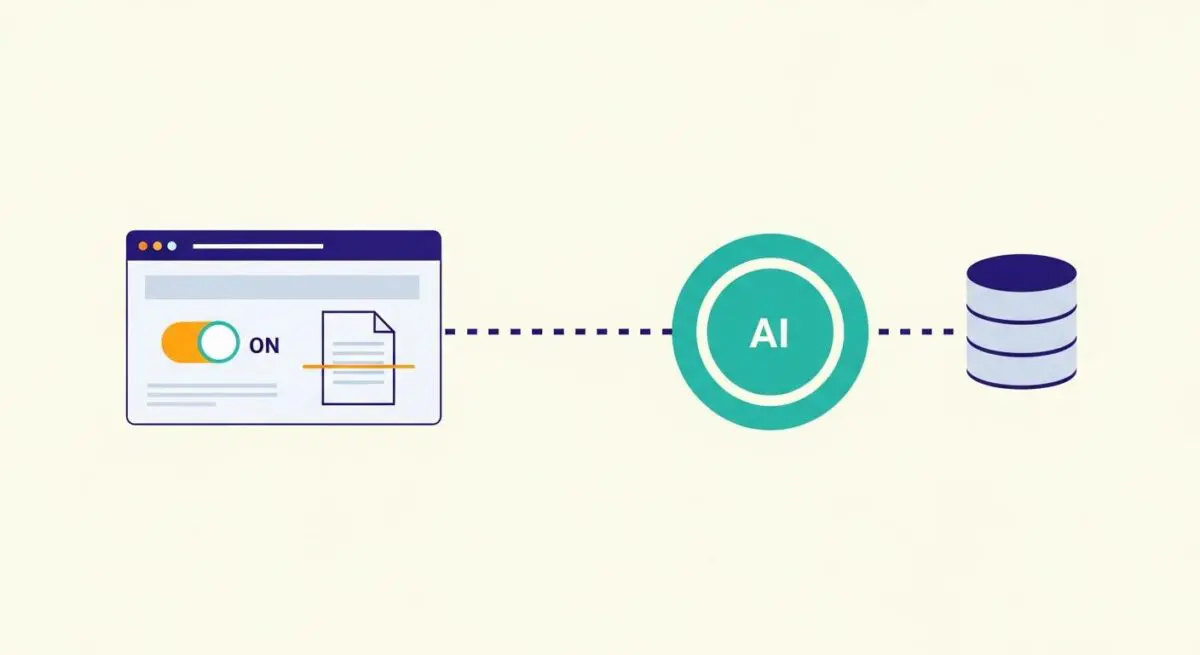

- Human-in-the-loop (HITL) functions as a critical validation layer for AI-generated outputs that fall below predefined confidence score thresholds.

- The architecture captures human corrections as training signal, which can feed supervised fine-tuning or preference-based methods such as Reinforcement Learning from Human Feedback (RLHF) for iterative optimization.

- Strategic HITL implementation prevents the propagation of hallucinations and ensures brand compliance in high-scale programmatic SEO and content operations.

What is Human-in-the-loop?

Human-in-the-loop (HITL) is a technical framework in automation engineering where human intervention points are strategically integrated into an otherwise autonomous workflow. In the context of AI and machine learning, HITL serves as a quality control mechanism that leverages human intelligence to validate, correct, or refine data processed by an algorithm. This is particularly vital for probabilistic outputs from Large Language Models (LLMs) in settings where the tolerance for error is low.

From a systems architecture perspective, HITL transforms a linear, fully automated pipeline into a semi-autonomous feedback loop. When an AI agent encounters a task with a low confidence score or a high-risk edge case, the system triggers a webhook or pauses the execution state, routing the payload to a human operator. Once the human provides the necessary input or approval, the workflow resumes, often utilizing the human’s correction as a new data point for future model fine-tuning.
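
The pause-and-resume flow described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `Task` fields, the `review_queue` dictionary, the `process` and `resume` functions, and the 0.85 threshold are all hypothetical names and values chosen for the example, and a real system would persist the queue in a database or message broker rather than in memory.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional


class Status(Enum):
    COMPLETED = "completed"
    AWAITING_REVIEW = "awaiting_review"


@dataclass
class Task:
    task_id: str
    ai_output: str
    confidence: float  # assumed to be a score in [0.0, 1.0]
    status: Status = Status.COMPLETED
    final_output: Optional[str] = None


# Illustrative threshold; tune it against your own review data.
CONFIDENCE_THRESHOLD = 0.85

# In-memory stand-in for a durable review queue (assumption).
review_queue: Dict[str, Task] = {}


def process(task: Task) -> Task:
    """Auto-approve confident outputs; park the rest for a human."""
    if task.confidence < CONFIDENCE_THRESHOLD:
        task.status = Status.AWAITING_REVIEW
        review_queue[task.task_id] = task  # execution pauses here
    else:
        task.status = Status.COMPLETED
        task.final_output = task.ai_output
    return task


def resume(task_id: str, human_output: str) -> Task:
    """Called when the reviewer submits an approval or correction."""
    task = review_queue.pop(task_id)
    task.final_output = human_output
    task.status = Status.COMPLETED
    return task
```

A task processed with a confidence of 0.40 stays in `review_queue` with no `final_output` until `resume` is called with the reviewer's text, at which point it completes like any auto-approved task.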

The Real-World Analogy

Imagine an elite restaurant kitchen where a highly advanced robotic system handles the bulk of the food preparation—chopping vegetables, searing meats, and plating dishes with incredible speed. However, before any plate leaves the kitchen to be served to a guest, an Executive Chef stands at the pass. The Chef doesn’t do the manual labor but tastes the sauce, adjusts the seasoning, and gives the final nod of approval. The robot does 95% of the work, but the Chef’s 5% intervention ensures the quality meets the restaurant’s standards and prevents a sub-par dish from ever reaching the customer.

Why is Human-in-the-loop Critical for Autonomous Workflows and AI Content Ops?

In the era of programmatic SEO and AI-driven content operations, HITL is a primary safeguard against algorithmic hallucination and brand degradation. Fully autonomous pipelines often process each item in isolation and lack the contextual nuance required for high-stakes decision-making. By implementing HITL, organizations can scale their output volume without sacrificing technical accuracy or editorial integrity.

Furthermore, HITL supplies the human feedback that techniques such as Reinforcement Learning from Human Feedback (RLHF) and supervised fine-tuning depend on. When a human corrects an AI-generated meta description or technical summary, the delta (the difference between the AI’s output and the human’s correction) is captured. This data is valuable for fine-tuning proprietary models: each corrected pair can serve as a supervised example or as a preference pair, so every human intervention doubles as training signal that improves the system’s future autonomy. In serverless architectures, where individual function invocations are short-lived, the HITL step typically persists the task to a manual review queue and resumes processing in a later invocation, which keeps data pipelines robust even when the AI encounters unforeseen variables.
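
One way to capture that delta is a word-level diff between the AI draft and the human correction. The sketch below uses Python's standard-library `difflib.SequenceMatcher`; the function name `correction_delta` and the shape of the returned dictionary are assumptions made for illustration, not a standard format.

```python
import difflib


def correction_delta(ai_output: str, human_output: str) -> dict:
    """Summarize what the reviewer changed, word by word."""
    ai_words = ai_output.split()
    human_words = human_output.split()
    matcher = difflib.SequenceMatcher(a=ai_words, b=human_words)

    edits = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            continue  # unchanged spans carry no correction signal
        edits.append({
            "op": tag,  # "replace", "delete", or "insert"
            "ai": " ".join(ai_words[i1:i2]),
            "human": " ".join(human_words[j1:j2]),
        })

    return {
        # 1.0 means the reviewer accepted the draft unchanged.
        "similarity": round(matcher.ratio(), 3),
        "edits": edits,
    }
```

For the pair "Buy cheap shoes online today" and "Buy affordable shoes online today", this returns a single `replace` edit from "cheap" to "affordable". Tracking the similarity value over time gives a rough indication of how heavily reviewers are editing, which may help when deciding whether a threshold can be relaxed.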

Best Practices & Implementation

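- Define Confidence Thresholds: Configure your AI agents to return a confidence score with every payload. LLMs do not emit a calibrated score by default, so it usually has to be derived (for example, from token log-probabilities or a separate scoring model). If the score falls below a set threshold (e.g., 85%), automatically route the task to a human review queue via a webhook.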

- Asynchronous Workflow Management: Use state-aware automation platforms to pause executions. This prevents server timeouts while waiting for human input, allowing the system to resume the process once the human validation is received.

- Optimize the Reviewer UI: Design a lean interface for human operators that highlights only the specific data points requiring attention. Reducing cognitive load for the human reviewer is key to maintaining high throughput.

- Data Logging for Fine-Tuning: Ensure every human correction is logged in a structured JSON format. This creates a “gold standard” dataset that can be used to fine-tune your LLMs, gradually reducing the need for human intervention over time.
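
The logging practice above can be sketched as an append-only JSON Lines file, one correction per line. The field names in `CorrectionRecord` and the helper functions are hypothetical; the record deliberately stores the original prompt alongside both outputs so that reviewers and later training jobs see the same context.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from pathlib import Path
from typing import List, Union


@dataclass
class CorrectionRecord:
    task_id: str
    prompt: str         # original prompt, kept for reviewer context
    ai_output: str
    human_output: str
    confidence: float   # score that triggered the review
    reviewer: str
    reviewed_at: str    # ISO 8601 timestamp in UTC


def make_record(task_id: str, prompt: str, ai_output: str,
                human_output: str, confidence: float,
                reviewer: str) -> CorrectionRecord:
    return CorrectionRecord(
        task_id=task_id,
        prompt=prompt,
        ai_output=ai_output,
        human_output=human_output,
        confidence=confidence,
        reviewer=reviewer,
        reviewed_at=datetime.now(timezone.utc).isoformat(),
    )


def to_jsonl_line(record: CorrectionRecord) -> str:
    """Serialize one record as a single JSON line."""
    return json.dumps(asdict(record), ensure_ascii=False)


def append_record(path: Union[str, Path], record: CorrectionRecord) -> None:
    """Append the record to the log file, creating it if needed."""
    with Path(path).open("a", encoding="utf-8") as f:
        f.write(to_jsonl_line(record) + "\n")


def load_records(path: Union[str, Path]) -> List[dict]:
    """Read the log back as a list of dictionaries."""
    with Path(path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

An append-only file is used here only because it is easy to inspect and to convert later; the exact format expected by a fine-tuning job depends on the provider or framework, so treat this schema as a starting point.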

Common Mistakes to Avoid

A frequent error is creating operational bottlenecks by requiring human intervention on every single task, which negates the efficiency gains of automation. Another critical mistake is failing to provide the human reviewer with the original context or the prompt used by the AI, leading to inconsistent corrections. Finally, many brands neglect to feed human corrections back into their training data, missing the opportunity to improve the underlying model’s performance.

Conclusion

Human-in-the-loop is not a sign of automation failure, but rather a sophisticated engineering strategy for maintaining high-fidelity outputs in AI-driven systems. By balancing algorithmic speed with human oversight, organizations can achieve scalable, reliable, and technically accurate content operations.