Executive Summary

- Tokenization is the fundamental preprocessing step that converts raw natural language into discrete numerical units for Large Language Model processing.

- Subword algorithms such as Byte Pair Encoding (BPE) and WordPiece keep the vocabulary compact while largely avoiding out-of-vocabulary (OOV) errors.

- Tokenization efficiency directly impacts context window management, computational overhead, and entity recognition within Generative Engine Optimization (GEO).

What is Tokenization?

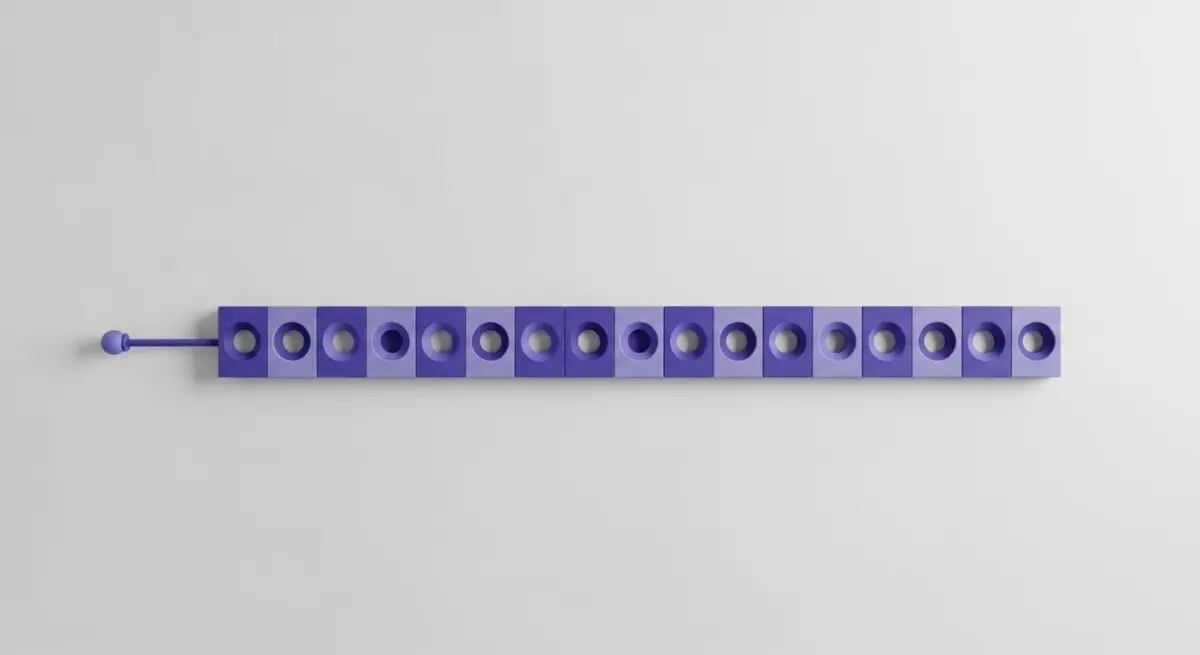

Tokenization is the foundational process of segmenting raw natural language strings into discrete atomic units called tokens. These tokens serve as the primary input for transformer-based architectures, where they are mapped to high-dimensional vector embeddings within a latent space. In the context of Large Language Models (LLMs), tokenization is not merely a simple split by whitespace; it involves sophisticated algorithms that determine how to best represent text for mathematical processing and neural network ingestion.

Modern LLMs typically use subword tokenization algorithms such as Byte Pair Encoding (BPE), WordPiece, or Unigram, often implemented through libraries such as SentencePiece. These methods balance the full coverage of character-level models with the semantic richness of word-level models. By breaking rare or complex words into common sub-components, the model can keep a fixed-size vocabulary while still representing arbitrary input text. Byte-level variants, which can fall back to raw UTF-8 bytes, avoid out-of-vocabulary (OOV) errors entirely. For instance, the word “tokenization” might be split into “token” and “ization”, letting the model reuse what it has learned about “token” from other words.
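To show how a subword vocabulary can emerge from data, here is a minimal BPE training sketch in Python. It is a simplified illustration, not any production tokenizer: it starts from individual characters, ignores byte-level handling and pre-tokenization rules, and the toy corpus and helper names (`get_pair_counts`, `merge_pair`, `learn_bpe`) are hypothetical. Each round counts adjacent symbol pairs across the corpus and merges the most frequent one into a new vocabulary symbol.

```python
from collections import Counter

Word = tuple[str, ...]  # a word represented as a sequence of symbols


def get_pair_counts(words: dict[Word, int]) -> Counter:
    """Count adjacent symbol pairs, weighted by how often each word occurs."""
    pairs: Counter = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs


def merge_pair(words: dict[Word, int], pair: tuple[str, str]) -> dict[Word, int]:
    """Rewrite every word so each occurrence of `pair` becomes one merged symbol."""
    merged: dict[Word, int] = {}
    for symbols, freq in words.items():
        out: list[str] = []
        i = 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged


def learn_bpe(corpus: list[str], num_merges: int) -> tuple[list[tuple[str, str]], dict[Word, int]]:
    """Learn up to `num_merges` merge rules, starting from single characters."""
    words: dict[Word, int] = dict(Counter(tuple(w) for w in corpus))
    merges: list[tuple[str, str]] = []
    for _ in range(num_merges):
        pairs = get_pair_counts(words)
        if not pairs:
            break  # every word is already a single symbol
        best = max(pairs, key=pairs.get)  # most frequent pair; the first one seen wins ties
        merges.append(best)
        words = merge_pair(words, best)
    return merges, words


corpus = ["token"] * 3 + ["tokens"] * 2 + ["tokenize", "tokenization"]
merges, words = learn_bpe(corpus, num_merges=4)
print(merges)  # [('t', 'o'), ('to', 'k'), ('tok', 'e'), ('toke', 'n')]
print(words)
```

After four merges, “token” has become a single vocabulary symbol shared by every word in the toy corpus, while the rarer endings are still spelled out character by character; further merges would gradually absorb them too.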

From a technical standpoint, each token corresponds to a specific index in the model’s predefined vocabulary. When an LLM processes a query, it first converts the input string into a sequence of these indices. Each index then selects a row of the model’s embedding matrix, and the resulting vectors form the numerical tensors used in self-attention calculations. Consequently, the efficiency and quality of the tokenizer strongly influence the model’s ability to interpret context, manage memory, and generate coherent responses.
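To make the index-then-embed step concrete, here is a minimal sketch in Python. The five-entry vocabulary, the `[UNK]` fallback entry, the 4-dimensional random embedding matrix, and the helper names `encode` and `embed` are all hypothetical; real models learn vocabularies of tens of thousands of entries, train the embedding values, and (in byte-level tokenizers) need no `[UNK]` entry at all.

```python
import random

# Hypothetical toy vocabulary: token string -> integer index.
vocab = {"[UNK]": 0, "token": 1, "ization": 2, "is": 3, "fun": 4}


def encode(pieces: list[str]) -> list[int]:
    """Map already-segmented tokens to vocabulary indices; unknown pieces map to [UNK]."""
    return [vocab.get(p, vocab["[UNK]"]) for p in pieces]


# Embedding matrix: one row of `dim` floats per vocabulary entry.
# Random here for illustration; a trained model learns these values.
dim = 4
rng = random.Random(0)
embedding_matrix = [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(len(vocab))]


def embed(ids: list[int]) -> list[list[float]]:
    """An embedding lookup is plain row indexing: index i selects row i."""
    return [embedding_matrix[i] for i in ids]


ids = encode(["token", "ization", "is", "fun"])
vectors = embed(ids)
print(ids)  # [1, 2, 3, 4]
print(len(vectors), len(vectors[0]))  # 4 4
```

The lookup itself is cheap; what matters for context and cost is how many indices the tokenizer produces, since every one of them becomes a vector that self-attention must process.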

The Real-World Analogy

Imagine a modular shipping container system at a global port. You cannot simply load a fully constructed, irregularly shaped house onto a cargo ship; it is too large and non-standard for the handling equipment. Instead, the house must be disassembled into standardized, numbered crates. Each crate represents a token. The ship (the LLM) is designed to move and stack these specific crates with extreme efficiency. Because the crates are standardized, the ship can transport any type of structure—whether it is a house, a car, or a piece of machinery—by simply organizing the specific sequence of crates. Tokenization is the process of breaking down complex human language into these standardized units that the machine’s infrastructure is built to handle.

Why is Tokenization Important for GEO and LLMs?

Tokenization is a critical factor in Generative Engine Optimization (GEO) because it governs the “information density” of content within a model’s limited context window. Since LLMs have a finite token capacity (e.g., 128k tokens for GPT-4 Turbo), content that is tokenized inefficiently, because of excessive filler words or non-standard characters, reduces the amount of relevant information the model can process at once. This directly affects the model’s ability to synthesize complex sources in Retrieval-Augmented Generation (RAG) pipelines and can lead to the loss of critical context during truncation.

Tokenization can also affect entity authority and source attribution. If a brand name or technical term is tokenized into obscure, non-semantic fragments, the model may have a harder time associating that entity with its relevant context, because its meaning has to be assembled from pieces that also occur in unrelated words. Conversely, entities that align well with the tokenizer’s vocabulary are plausibly easier to retrieve and cite accurately. For AI search, making core content “token-friendly” means maximizing the semantic weight of every unit the generative engine processes, which may improve the likelihood of visibility in AI-generated summaries and citations.
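The fragmentation effect described above can be illustrated with greedy longest-match-first segmentation, the inference strategy used by WordPiece tokenizers such as BERT’s. This is a minimal sketch: the toy vocabulary, the invented brand name “zorbly”, and the helper name `wordpiece_segment` are hypothetical, and real vocabularies are learned from large corpora. Note that it only shows how many pieces a term becomes; it says nothing directly about how attention treats those pieces.

```python
import string


def wordpiece_segment(word: str, vocab: set[str], unk: str = "[UNK]") -> list[str]:
    """Greedy longest-match-first segmentation in the style of WordPiece inference.
    Non-initial pieces carry a '##' prefix, following BERT's convention."""
    pieces: list[str] = []
    start = 0
    while start < len(word):
        end = len(word)
        match = None
        # Try the longest remaining substring first, then shrink it one character at a time.
        while end > start:
            piece = word[start:end]
            if start > 0:
                piece = "##" + piece
            if piece in vocab:
                match = piece
                break
            end -= 1
        if match is None:
            return [unk]  # nothing matched, so the whole word becomes unknown
        pieces.append(match)
        start = end
    return pieces


# Hypothetical toy vocabulary: every single letter, plus a few learned subwords.
letters = set(string.ascii_lowercase) | {"##" + c for c in string.ascii_lowercase}
vocab = letters | {"token", "##ization", "##s", "search"}

print(wordpiece_segment("tokenization", vocab))  # ['token', '##ization']
print(wordpiece_segment("search", vocab))        # ['search']
print(wordpiece_segment("zorbly", vocab))        # ['z', '##o', '##r', '##b', '##l', '##y']
```

A familiar technical term maps onto a couple of meaningful pieces, while the invented name falls all the way back to single characters, which is the situation the Common Mistakes section below warns about.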

Best Practices & Implementation

- Optimize for Token Density: Eliminate redundant linguistic structures and filler content to ensure that the most critical semantic information occupies the smallest possible token footprint within the context window.

- Standardize Technical Nomenclature: Use industry-standard terms and consistent brand naming to ensure the tokenizer produces stable, recognizable subword sequences for your core entities.

- Monitor Context Window Limits: In RAG implementations, proactively calculate the token count of retrieved document chunks to prevent the truncation of essential source citations or metadata.

- Prioritize UTF-8 Encoding: Serve all web content with a correct, consistently declared UTF-8 encoding. Mis-decoded text (mojibake) introduces unusual characters that tokenizers typically split into several byte-level tokens each, which can significantly increase the token count and reduce processing efficiency.
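The “Monitor Context Window Limits” practice above can be sketched as a greedy packing step that runs before retrieved chunks are sent to the model. This is an illustrative sketch under stated assumptions: `pack_chunks` and `rough_token_count` are hypothetical names, and the whitespace-based counter is only a crude stand-in for a real tokenizer. In practice you would pass a counter built from the target model’s tokenizer, for example `len(tiktoken.get_encoding("cl100k_base").encode(text))` with OpenAI’s tiktoken library.

```python
from collections.abc import Callable


def pack_chunks(
    chunks: list[str], budget: int, count_tokens: Callable[[str], int]
) -> tuple[list[str], list[str]]:
    """Keep retrieved chunks in rank order while the running token total fits the budget.
    Returns (kept, dropped) so dropped sources can be logged rather than silently truncated."""
    kept: list[str] = []
    dropped: list[str] = []
    used = 0
    for chunk in chunks:
        n = count_tokens(chunk)
        if used + n <= budget:
            kept.append(chunk)
            used += n
        else:
            dropped.append(chunk)  # a later, smaller chunk may still fit
    return kept, dropped


def rough_token_count(text: str) -> int:
    """Crude stand-in for a real tokenizer: counts whitespace-separated words."""
    return len(text.split())


chunks = ["alpha beta gamma", "delta epsilon", "zeta eta theta iota"]
kept, dropped = pack_chunks(chunks, budget=5, count_tokens=rough_token_count)
print(kept)     # ['alpha beta gamma', 'delta epsilon']
print(dropped)  # ['zeta eta theta iota']
```

Dropping whole chunks, instead of cutting the concatenated prompt at the budget, keeps each surviving source intact, including any citation metadata it carries. Whether a later, smaller chunk should be allowed to fill leftover space is a design choice; this sketch allows it.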

Common Mistakes to Avoid

One frequent error is relying on idiosyncratic jargon or invented brand names that the tokenizer must break into many short, sometimes single-character, fragments; the model then has to reconstruct the term’s meaning from pieces that carry little semantic signal on their own. Another common mistake is ignoring the token-to-word ratio when designing metadata: a description that fits a character limit may still exceed a token limit if it contains complex punctuation or non-standard symbols. Finally, organizations that never test their content against the tokenizer of the specific model they target (for example, with OpenAI’s tiktoken library) risk unexpected API costs and degraded performance in AI-driven search environments.
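One reason character limits can mislead is that byte-level BPE tokenizers start from UTF-8 bytes, not characters. The sketch below compares the two counts; the helper name `char_vs_byte_counts` is hypothetical. Byte count is not token count, since learned merges compress common byte sequences, but it shows how non-ASCII symbols, and text that was mis-decoded into mojibake, begin with more raw units than their visible length suggests.

```python
def char_vs_byte_counts(text: str) -> tuple[int, int]:
    """Return (character count, UTF-8 byte count) for a string."""
    return len(text), len(text.encode("utf-8"))


print(char_vs_byte_counts("cafe"))          # (4, 4)
print(char_vs_byte_counts("caf\u00e9"))     # "café": (4, 5)
print(char_vs_byte_counts("\u2192 \u2713")) # "→ ✓": (3, 7)

# Mojibake: UTF-8 bytes wrongly decoded as Latin-1 turn "café" into "cafÃ©".
mojibake = "caf\u00e9".encode("utf-8").decode("latin-1")
print(mojibake, char_vs_byte_counts(mojibake))  # cafÃ© (5, 7)
```

The mis-decoded version is both longer and made of rarer characters than the original, so it tends to cost more tokens while carrying no extra meaning.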

Conclusion

Tokenization is the essential bridge between human language and machine computation, serving as the primary constraint on context, cost, and semantic accuracy in modern AI search systems.