Executive Summary

- Enables Large Language Models (LLMs) to perform accurate categorization tasks using minimal labeled examples provided within the prompt context.

- Eliminates the computational overhead of full model fine-tuning by leveraging the in-context learning capabilities of transformer architectures.

- Relevant to Generative Engine Optimization (GEO) because it influences how AI agents interpret and classify brand entities and content intent.

What is Few-Shot Classification?

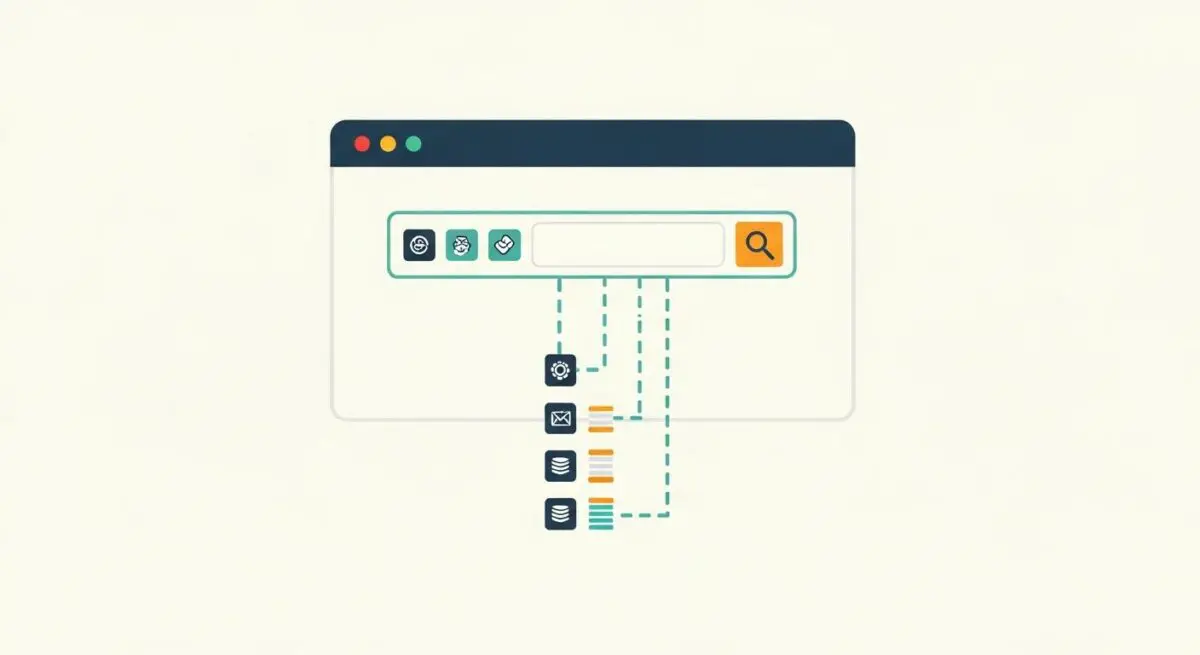

Few-shot classification is a machine learning paradigm in which a model categorizes new data points into specific classes based on a very limited number of labeled examples, typically one to five per class (the "shots"). Unlike traditional supervised learning, which often requires thousands of labeled instances to achieve high accuracy, few-shot classification relies on either meta-learning (the model is trained to adapt quickly to new classes) or in-context learning (the model is prompted with examples at inference time). In the context of Large Language Models (LLMs), this means providing the model with a few input-output pairs within the prompt to define the desired task logic.
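As a minimal sketch of what "input-output pairs within the prompt" can look like, the snippet below assembles a few-shot prompt as plain text. The function name `build_few_shot_prompt`, the `Text:` / `Label:` layout, and the sample data are illustrative assumptions, not a format required by any particular model or API.

```python
from typing import List, Tuple


def build_few_shot_prompt(task: str, shots: List[Tuple[str, str]], query: str) -> str:
    """Assemble a prompt: task description, labeled examples, then the unlabeled query."""
    lines = [task, ""]
    for text, label in shots:
        lines.append(f"Text: {text}")
        lines.append(f"Label: {label}")
        lines.append("")
    # The final block leaves the label empty for the model to complete.
    lines.append(f"Text: {query}")
    lines.append("Label:")
    return "\n".join(lines)


# Hypothetical support-ticket examples, one shot per class.
shots = [
    ("The checkout page keeps crashing.", "complaint"),
    ("How do I reset my password?", "question"),
    ("Love the new dashboard!", "praise"),
]
prompt = build_few_shot_prompt(
    "Classify each text as complaint, question, or praise.",
    shots,
    "Where can I download my invoice?",
)
print(prompt)
```

The resulting string would be sent to whichever LLM is in use; the model's completion after the final `Label:` is read as the predicted class.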

Technically, few-shot classification relies on the model’s ability to map new inputs to a latent representation space where they can be compared against the provided examples. By identifying patterns and semantic similarities between the “shots” and the query, the LLM can generalize the classification rule without updating its underlying weights. This makes it an essential technique for specialized domains where data is scarce or where rapid task adaptation is required.
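The idea of comparing a query against examples in a representation space can be illustrated with a toy prototype classifier. This is an analogy only: the word-count `embed` function below is an assumption standing in for a learned encoder, and it does not describe how any specific LLM represents text internally.

```python
import math
import re
from collections import Counter, defaultdict
from typing import Dict, List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real system would use a learned encoder)."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_prototypes(shots: List[Tuple[str, str]]) -> Dict[str, Counter]:
    """Sum each class's example vectors into one prototype.

    Cosine similarity ignores vector length, so the sum behaves like the class mean.
    """
    protos: Dict[str, Counter] = defaultdict(Counter)
    for text, label in shots:
        protos[label].update(embed(text))
    return dict(protos)


def classify(query: str, protos: Dict[str, Counter]) -> str:
    """Return the label whose prototype is most similar to the query."""
    q = embed(query)
    return max(protos, key=lambda label: cosine(q, protos[label]))


shots = [
    ("refund my order, it arrived broken", "complaint"),
    ("the app crashes and is broken", "complaint"),
    ("great service, love the app", "praise"),
    ("love the fast delivery, great job", "praise"),
]
protos = build_prototypes(shots)
print(classify("my package arrived broken", protos))  # complaint
```

No weights are updated anywhere in this sketch: the examples only define reference points, and the query is assigned to the nearest one.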

The Real-World Analogy

Imagine you are a hiring manager recruiting for a specialized programming language you have never worked with. Instead of reading a 500-page manual, you are shown three resumes of “perfect candidates” and three resumes of “unqualified candidates.” By observing the common traits in those few examples, you can begin sorting a stack of 100 new resumes with reasonable accuracy. Few-shot classification is the AI’s version of this rapid pattern recognition: it doesn’t need the whole manual, just a few high-quality references to understand the goal.

Why is Few-Shot Classification Important for GEO and LLMs?

For Generative Engine Optimization (GEO), few-shot classification is one of the mechanisms that shapes how AI search engines like Perplexity or ChatGPT categorize and retrieve your content. When these systems process vast amounts of web data, they may use few-shot techniques to assess entity authority, sentiment, and content relevance. If your content is structured in a way that aligns with the few-shot patterns the LLM has been “primed” with, your brand is more likely to be classified as a primary source or a top-tier recommendation.

Furthermore, few-shot classification affects source attribution. By providing clear, structured examples of how information should be categorized, developers can guide AI agents to recognize specific brand attributes more effectively. This reduces the risk of the model misclassifying your services or products, which in turn improves visibility in AI-generated responses and Retrieval-Augmented Generation (RAG) pipelines.

Best Practices & Implementation

- Select Representative Examples: Ensure the few shots provided cover the variance of the target classes to prevent the model from developing a narrow or biased classification logic.

- Maintain Structural Consistency: Use identical formatting for every example (e.g., JSON or clear key-value pairs) to minimize noise and help the model focus on the semantic content.

- Optimize Example Ordering: Be aware of “recency bias,” where LLMs may give more weight to the last examples provided; avoid ending the prompt with a run of examples from a single class, and test more than one ordering when accuracy matters.

- Utilize Clear Delimiters: Use distinct markers like “###” or “---” to separate examples from the actual query, ensuring the model distinguishes between the training context and the task at hand.
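The practices above can be combined in a small prompt builder. The sketch below is one possible arrangement, not a prescribed format: the helper names `interleave_by_class` and `format_prompt`, the `Input:` / `Output:` layout, and the sample data are all assumptions made for illustration. It formats every example identically, alternates classes so that no single label dominates the end of the prompt, and separates blocks with a `###` delimiter.

```python
import json
from collections import defaultdict
from itertools import zip_longest
from typing import Dict, List

DELIMITER = "###"


def interleave_by_class(shots: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Round-robin across classes so the prompt does not end with a run of one label."""
    by_label = defaultdict(list)
    for shot in shots:
        by_label[shot["label"]].append(shot)
    ordered = []
    for group in zip_longest(*by_label.values()):
        ordered.extend(s for s in group if s is not None)
    return ordered


def format_prompt(instruction: str, shots: List[Dict[str, str]], query: str) -> str:
    """Render instruction, examples, and query as identically shaped, delimited blocks."""
    blocks = [instruction]
    for shot in interleave_by_class(shots):
        example_in = json.dumps(shot["text"])
        example_out = json.dumps({"label": shot["label"]})
        blocks.append(f"Input: {example_in}\nOutput: {example_out}")
    # The query uses the same layout, with the output left open for the model.
    blocks.append(f"Input: {json.dumps(query)}\nOutput:")
    return f"\n{DELIMITER}\n".join(blocks)


# Hypothetical sentiment examples, two per class.
shots = [
    {"text": "Refund took three weeks", "label": "negative"},
    {"text": "Support never replied", "label": "negative"},
    {"text": "Setup was effortless", "label": "positive"},
    {"text": "Fast and reliable", "label": "positive"},
]
prompt = format_prompt(
    "Classify the sentiment of each input as positive or negative.",
    shots,
    "The update broke my login",
)
print(prompt)
```

Round-robin ordering is only one heuristic; because ordering effects vary by model, it is worth comparing a few orderings against a small held-out set.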

Common Mistakes to Avoid

A frequent error is providing imbalanced examples, such as giving three examples of “Class A” and only one of “Class B,” which often leads the LLM to favor the overrepresented category. Another mistake is using examples that are too similar to each other, which fails to define the boundaries of the classification task. Lastly, many practitioners overlook token cost: each additional shot consumes part of the context window and adds latency, often without a proportional increase in accuracy.
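These three mistakes can be caught with a quick check before a prompt is sent. The sketch below is a rough heuristic under stated assumptions: the function name `check_shots` and all thresholds are arbitrary illustrative choices, similarity is measured on raw characters with Python's `difflib`, and the token count is approximated by whitespace-separated words, which will differ from a real tokenizer.

```python
from collections import Counter
from difflib import SequenceMatcher
from itertools import combinations
from typing import List, Tuple


def check_shots(
    shots: List[Tuple[str, str]],
    max_ratio: float = 2.0,             # assumed limit for largest/smallest class size
    similarity_threshold: float = 0.9,  # assumed cutoff for "too similar"
    token_budget: int = 500,            # assumed budget, in approximate tokens
) -> List[str]:
    """Return human-readable warnings about a set of (text, label) shots."""
    warnings = []

    # 1. Class imbalance: compare the most and least represented labels.
    counts = Counter(label for _, label in shots)
    if counts:
        most, least = max(counts.values()), min(counts.values())
        if most / least > max_ratio:
            warnings.append(f"class imbalance: {dict(counts)}")

    # 2. Near-duplicates: character-level similarity between every pair of texts.
    for (a, _), (b, _) in combinations(shots, 2):
        if SequenceMatcher(None, a, b).ratio() >= similarity_threshold:
            warnings.append(f"near-duplicate examples: {a!r} / {b!r}")

    # 3. Token cost: crude word count, not a real tokenizer.
    approx_tokens = sum(len(text.split()) for text, _ in shots)
    if approx_tokens > token_budget:
        warnings.append(f"approx. {approx_tokens} tokens exceeds budget of {token_budget}")

    return warnings


# Hypothetical shot set with three positives, one negative, and two near-identical texts.
shots = [
    ("Great product, works well", "positive"),
    ("Great product, works well!", "positive"),
    ("Fantastic support team", "positive"),
    ("Arrived broken", "negative"),
]
warnings = check_shots(shots)
for message in warnings:
    print(message)
```

On this sample set the check reports the 3-to-1 class imbalance and the near-duplicate pair; the token warning only appears once the examples grow past the chosen budget.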

Conclusion

Few-shot classification is a vital tool for optimizing AI interactions, allowing for precise data categorization with minimal overhead. Mastering this technique helps ensure that content is correctly interpreted and prioritized by modern generative search engines.