Executive Summary

- ETL (Extract, Transform, Load) is the foundational data engineering pipeline used to ingest unstructured data into vector databases for Retrieval-Augmented Generation (RAG).

- The transformation phase is critical for AI, involving semantic chunking, metadata enrichment, and high-dimensional vector embedding generation.

- Optimized ETL pipelines directly improve Generative Engine Optimization (GEO) by ensuring data freshness, accuracy, and high-fidelity source attribution.

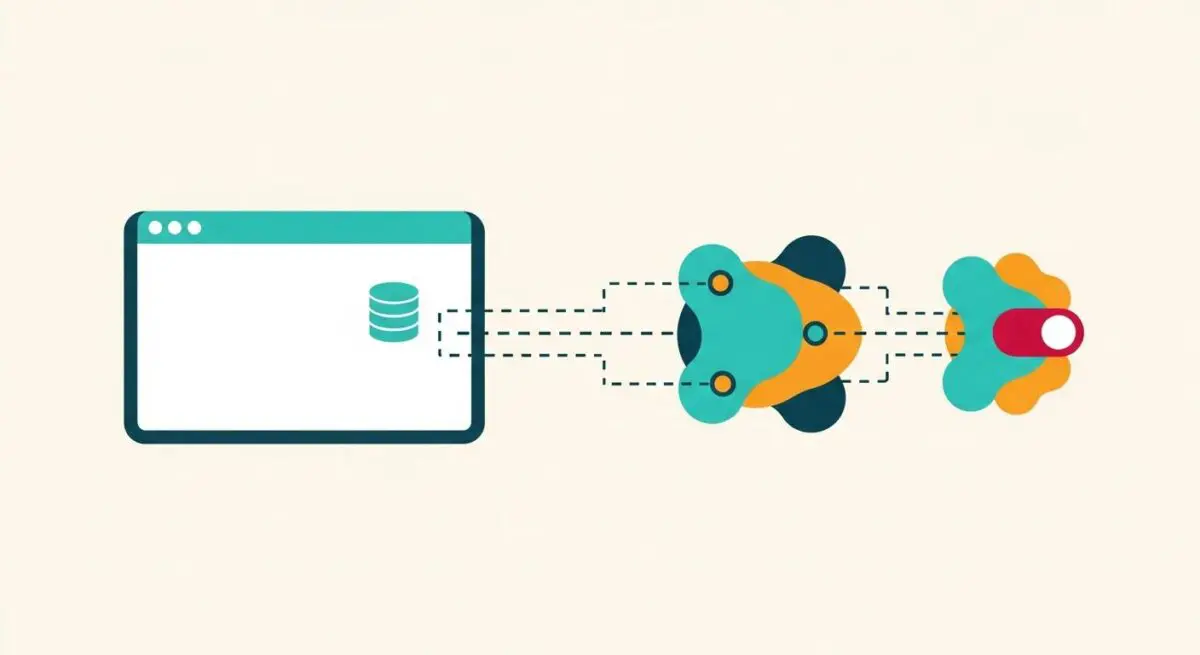

What is the ETL Process?

The ETL (Extract, Transform, Load) process is a three-stage data integration framework used to consolidate data from disparate sources into a unified, structured environment. In the context of modern Artificial Intelligence and Large Language Models (LLMs), ETL has extended beyond traditional business intelligence applications to become a critical pipeline for feeding vector databases. The Extraction phase harvests raw data from sources such as web crawls, APIs, or internal documents. The Transformation phase cleans, normalizes, and converts this data into a machine-readable format, which in AI workflows includes chunking, tokenization, and embedding generation. Finally, the Loading phase writes the processed data into a target system, typically a vector store such as Pinecone, Weaviate, or Milvus.
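As a rough illustration of the three stages, the sketch below wires extract, transform, and load together in plain Python. Everything in it is an assumption made for illustration: the `Record` type, the `toy_embed` function (a character-count placeholder, not a real embedding model), and the in-memory dict that stands in for a vector store.

```python
from dataclasses import dataclass


@dataclass
class Record:
    doc_id: str
    text: str
    vector: list[float]


def extract(sources: dict[str, str]) -> list[tuple[str, str]]:
    # Stand-in for web crawls, API calls, or document readers.
    return list(sources.items())


def toy_embed(text: str, dims: int = 8) -> list[float]:
    # Hypothetical placeholder for an embedding model: character codes
    # bucketed into `dims` slots. It carries no semantic meaning.
    vec = [0.0] * dims
    for ch in text:
        vec[ord(ch) % dims] += 1.0
    return vec


def transform(raw: list[tuple[str, str]]) -> list[Record]:
    records = []
    for doc_id, text in raw:
        cleaned = " ".join(text.split())  # collapse runs of whitespace
        if cleaned:  # drop documents that are empty after cleaning
            records.append(Record(doc_id, cleaned, toy_embed(cleaned)))
    return records


def load(records: list[Record], store: dict[str, Record]) -> None:
    # An in-memory dict stands in for the vector store.
    for rec in records:
        store[rec.doc_id] = rec


store: dict[str, Record] = {}
load(transform(extract({"a": "  Hello   world ", "b": "   "})), store)
```

In a production pipeline each function would be replaced by a real connector, embedding model, and vector store client; the point here is only the separation of the three stages.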

For AI Architects, the ETL process is the primary mechanism for maintaining the “ground truth” in RAG systems. Unlike legacy data warehousing, AI-centric ETL must handle high-velocity unstructured data and maintain the semantic context of the information. This ensures that when an AI agent queries the database, it retrieves the most relevant and contextually accurate information available, directly impacting the quality of the generated output.
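To make the retrieval step concrete, here is a minimal sketch of ranking stored vectors against a query vector by cosine similarity. The three-dimensional vectors and the document IDs are invented for illustration; a real system would use embeddings from a model and the vector store's own search API rather than a linear scan.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0  # treat zero vectors as dissimilar


def top_k(query: list[float], store: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    # Score every stored vector, then keep the k highest-scoring entries.
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in store.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


# Hypothetical, hand-written vectors standing in for real embeddings.
store = {
    "pricing": [1.0, 0.0, 0.0],
    "install": [0.0, 1.0, 0.0],
    "billing": [0.9, 0.1, 0.0],
}
results = top_k([1.0, 0.0, 0.0], store, k=2)
```

Here `results` ranks "pricing" first and "billing" second, because their vectors point in nearly the same direction as the query.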

The Real-World Analogy

To understand the ETL process, imagine a high-end professional kitchen preparing a complex tasting menu. Extraction is the process of sourcing raw ingredients from various farms, markets, and suppliers. Transformation is the culinary preparation: washing the vegetables, butchering the proteins, and precisely seasoning the components so they are ready for the final dish. Without this step, the ingredients are unusable for the chef. Loading is the final plating and organization of the prepared ingredients in the kitchen’s service line, where the head chef (the LLM) can quickly access them to create a finished meal for the guest. Just as a chef cannot produce a five-star meal from unwashed, raw ingredients, an AI cannot produce accurate answers from unrefined, raw data.

Why is the ETL Process Important for GEO and LLMs?

The efficacy of Generative Engine Optimization (GEO) depends heavily on how well an organization’s data is processed through ETL. LLMs do not “know” everything; they rely on retrieved context to provide specific, factual answers. If the ETL process is flawed (for instance, if the transformation phase strips away critical metadata or uses poor chunking strategies), the AI may fail to attribute sources correctly or may hallucinate based on fragmented data. High-quality ETL ensures that entities are clearly defined and that the relationships between data points are preserved in the vector embeddings.

Furthermore, the speed of the ETL pipeline determines the “freshness” of the data available to AI search engines. In the era of Perplexity and ChatGPT Search, being a current and authoritative source can strongly influence whether content is retrieved and cited. A robust ETL infrastructure allows brands to propagate updates to their technical documentation or product data in near real time, so that AI agents serve the most recent version of the brand’s information to users.

Best Practices & Implementation

- Implement Semantic Chunking: Instead of fixed-length character limits, use natural language processing to break text at logical boundaries (paragraphs or sections) to preserve context for embeddings.

- Enrich with Structured Metadata: During the transformation phase, inject Schema.org attributes and source URLs into the metadata fields to facilitate better source attribution in AI citations.

- Automate Data Validation: Use automated checks to identify and remove duplicate content or low-quality noise before it reaches the vector database to maintain high signal-to-noise ratios.

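- Align Embedding Models: Embed queries at retrieval time with the same model (and version) used to embed documents during the transformation phase. Vectors produced by different models do not share a vector space, so cosine similarity scores between them are not meaningful.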
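The chunking, metadata, and validation practices above can be sketched together as follows. This is a simplified sketch under stated assumptions: splitting on blank lines is a stand-in for true semantic chunking, exact-match hashing only catches verbatim duplicates, and the field names (`source_url`, `chunk_index`, `content_hash`) are illustrative rather than a required schema.

```python
import hashlib
import re


def chunk_by_paragraph(text: str, max_chars: int = 500) -> list[str]:
    # Split on blank lines, then pack whole paragraphs into chunks of at
    # most max_chars. A single paragraph longer than max_chars is kept intact.
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks, current = [], ""
    for para in paragraphs:
        candidate = f"{current}\n\n{para}" if current else para
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


def build_records(text: str, source_url: str, max_chars: int = 500) -> list[dict]:
    seen = set()
    records = []
    for chunk in chunk_by_paragraph(text, max_chars):
        # Hash the lowercased chunk so verbatim repeats are dropped.
        digest = hashlib.sha256(chunk.lower().encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        records.append({
            "text": chunk,
            "metadata": {
                "source_url": source_url,  # kept for source attribution
                "chunk_index": len(records),
                "content_hash": digest,
            },
        })
    return records


# Hypothetical input: the third paragraph duplicates the first.
records = build_records("Alpha.\n\nBeta.\n\nAlpha.", "https://example.com/doc", max_chars=6)
```

With this input, `records` holds two entries ("Alpha." and "Beta."), each carrying the source URL in its metadata. Near-duplicate detection and Schema.org enrichment would need additional logic that is not shown here.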

Common Mistakes to Avoid

One frequent error is neglecting the Transformation phase by loading raw, uncleaned HTML into a vector store, which introduces noise and degrades retrieval accuracy. Another common mistake is failing to implement an incremental update strategy, which leads to “stale” data: the AI continues to reference outdated information because the ETL pipeline did not overwrite, version, or delete the old records. Finally, many organizations ignore the importance of metadata, treating the vector store as a black box rather than a structured library that requires indexing for efficient retrieval.
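One way to avoid stale records is to compare a content hash on every run and only rewrite what changed. The sketch below is an assumption-laden illustration, not a specific vector store's API: the `sync` function, the record layout, and the in-memory dict store are all hypothetical.

```python
import hashlib


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def sync(store: dict[str, dict], current_docs: dict[str, str]) -> dict[str, list[str]]:
    """Bring `store` in line with `current_docs` and report what changed."""
    changes = {"added": [], "updated": [], "deleted": [], "unchanged": []}
    for doc_id, text in current_docs.items():
        digest = content_hash(text)
        existing = store.get(doc_id)
        if existing is None:
            changes["added"].append(doc_id)
        elif existing["hash"] != digest:
            changes["updated"].append(doc_id)
        else:
            changes["unchanged"].append(doc_id)
            continue  # nothing to rewrite or re-embed
        version = existing["version"] + 1 if existing else 1
        store[doc_id] = {"text": text, "hash": digest, "version": version}
    # Remove records whose source document no longer exists.
    for doc_id in list(store):
        if doc_id not in current_docs:
            del store[doc_id]
            changes["deleted"].append(doc_id)
    return changes


store: dict[str, dict] = {}
first = sync(store, {"a": "v1", "b": "x"})
second = sync(store, {"a": "v2"})
```

After the second run, document "a" is at version 2 and "b" has been removed. In a real pipeline only the added and updated documents would need to be re-embedded, which keeps refresh cycles cheap.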

Conclusion

The ETL process is the backbone of data integrity for AI-driven search and RAG architectures. By mastering the extraction, transformation, and loading of data, organizations can significantly enhance their visibility and authority within generative engines.