Executive Summary

- A data pipeline is a structured sequence of automated processes that ingest, transform, and transport raw data into a target system for analysis or model training.

- In the context of AI and GEO, pipelines are critical for maintaining the freshness and accuracy of Retrieval-Augmented Generation (RAG) indices.

- Optimizing data pipelines ensures that Large Language Models (LLMs) access high-integrity, structured information, directly influencing source attribution and visibility.

What is Data Pipeline?

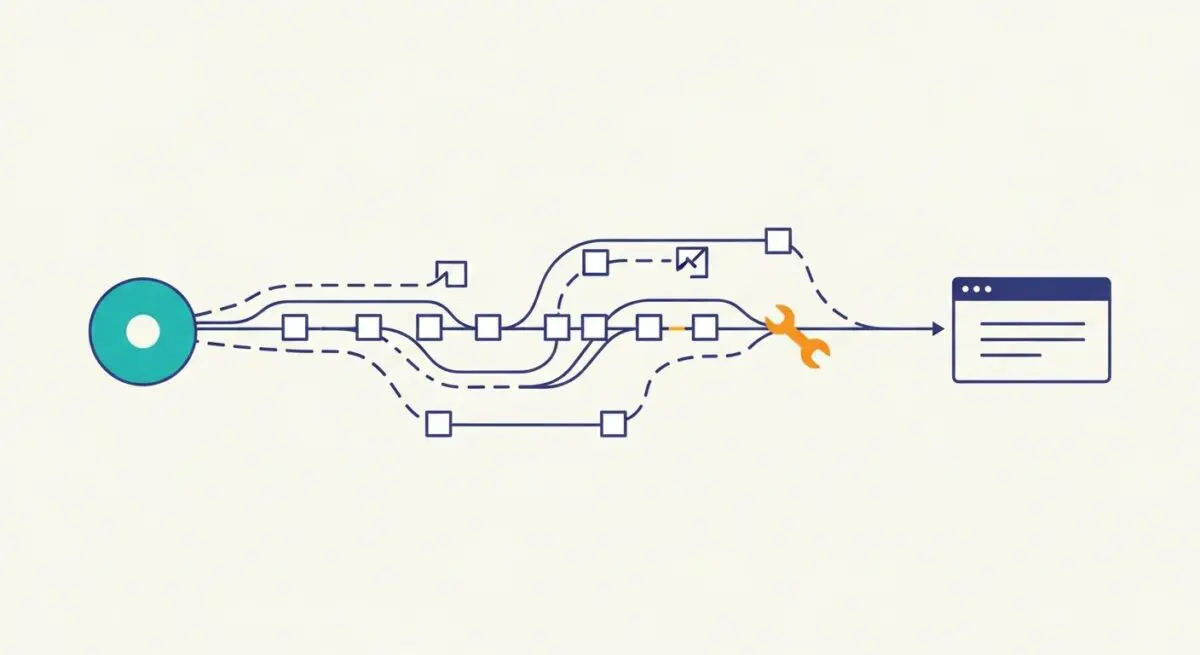

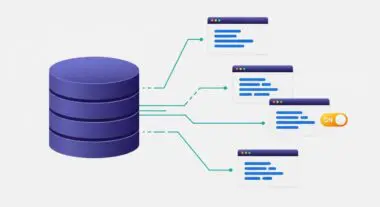

A data pipeline is an automated architecture designed to move data from disparate sources to a destination where it can be utilized for analytics, machine learning, or storage. It encompasses several stages, including ingestion, processing—such as cleaning, deduplication, and transformation—and loading. In modern AI architectures, these pipelines serve as the foundational infrastructure for Retrieval-Augmented Generation (RAG) systems, converting unstructured web content into vectorized embeddings stored in specialized databases.

Technically, a data pipeline can follow ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) methodologies. In the AI era, the focus has shifted toward real-time streaming pipelines that utilize tools like Apache Kafka or AWS Kinesis to ensure that the data feeding into Large Language Models (LLMs) is as current as possible. This continuous flow is essential for maintaining the relevance of AI agents and search engines that rely on dynamic data environments.

The Real-World Analogy

Think of a data pipeline as a high-speed automated bottling plant. Raw liquid (raw data) is sourced from various tanks (databases or APIs), filtered for impurities (data cleaning), poured into specific bottle shapes (standardized formats), labeled (metadata tagging), and finally packed into crates (data warehouses) ready for distribution to the consumer (the AI model). Without the plant, you have a chaotic mess of raw liquid that is impossible to serve or consume safely at scale.

Why is Data Pipeline Important for GEO and LLMs?

For Generative Engine Optimization (GEO), the efficiency of a data pipeline determines how quickly an LLM or a search engine’s index reflects new information. High-performance pipelines ensure that entities and their relationships are correctly mapped, which directly impacts source attribution in AI-generated responses. If a pipeline is latent or introduces noise, the AI may rely on stale or incorrect data, leading to lower rankings in systems like Perplexity or ChatGPT.

Furthermore, data pipelines are responsible for the chunking and vectorization of content. How a pipeline breaks down a long-form article into segments determines whether an AI agent can accurately retrieve the most relevant snippet to answer a user query. Proper implementation ensures that your brand’s technical authority is preserved and correctly indexed by the crawlers feeding these generative systems.

Best Practices & Implementation

- Implement Robust Data Validation: Use schema enforcement at the ingestion stage to prevent “garbage in, garbage out” scenarios that can degrade LLM performance.

- Optimize for Latency: Utilize stream processing for time-sensitive data to ensure that RAG systems have access to real-time information.

- Modular Architecture: Build decoupled pipeline stages to allow for independent scaling and easier troubleshooting of specific data transformations.

- Maintain Data Lineage: Document the flow of data from source to destination to ensure transparency and facilitate audits of AI training sets.

Common Mistakes to Avoid

One frequent error is failing to account for schema drift, where changes in source data formats break the downstream transformation logic, leading to data loss. Another common mistake is neglecting the cost of data egress and processing; over-engineered pipelines can lead to unsustainable infrastructure expenses in high-frequency RAG environments. Finally, many organizations ignore data deduplication, which results in redundant information being indexed, confusing AI models and diluting entity authority.

Conclusion

A well-architected data pipeline is the essential conduit for high-fidelity information, directly dictating the speed and accuracy with which AI search engines index and attribute content.