Executive Summary

- Web scraping facilitates the automated conversion of unstructured HTML data into structured formats for large-scale SEO analysis.

- Advanced implementations utilize headless browsers and proxy rotation to navigate complex JavaScript frameworks and anti-scraping protocols.

- Strategic SEO applications include SERP feature tracking, competitive price monitoring, and comprehensive backlink profile auditing.

What is Web Scraping?

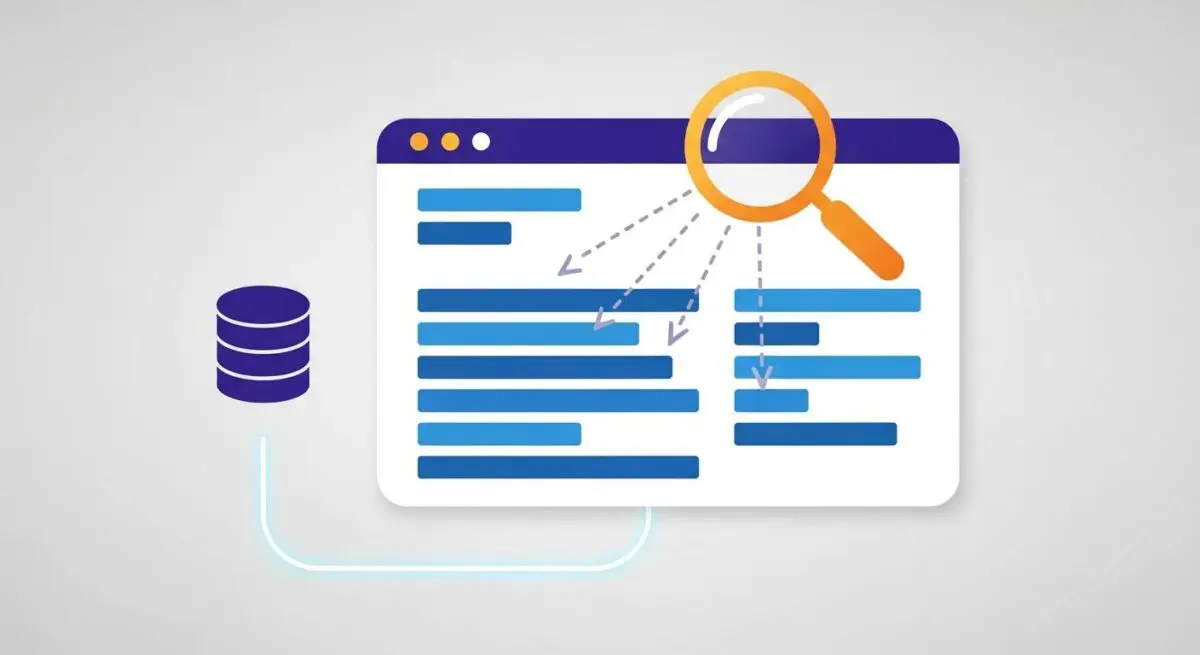

Web scraping, also known as web data extraction or web harvesting, is the automated process of retrieving specific data points from websites. Unlike manual data collection, web scraping uses software (often called bots or crawlers) to send HTTP requests to a target server, download the HTML source, and parse it to extract predefined information. The extracted data is then typically serialized to a structured format such as JSON or CSV, or loaded into a SQL database, for further analysis.
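The parse-and-serialize steps can be sketched with Python's standard library alone. This is a minimal illustration, not a production scraper: the sample HTML, the `TitleAndMetaParser` class, and the choice of fields (title and meta description) are assumptions made for the example, and the download step is replaced by a hard-coded string.

```python
import csv
import io
import json
from html.parser import HTMLParser


class TitleAndMetaParser(HTMLParser):
    """Collects the <title> text and the meta description from one page."""

    def __init__(self):
        super().__init__()
        self.record = {"title": "", "meta_description": ""}
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.record["meta_description"] = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.record["title"] += data


def extract_record(html):
    """Parse one HTML document into a flat dictionary."""
    parser = TitleAndMetaParser()
    parser.feed(html)
    parser.close()
    parser.record["title"] = parser.record["title"].strip()
    return parser.record


def to_csv(records):
    """Serialize a list of records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["title", "meta_description"])
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Hypothetical page source; a real scraper would download this over HTTP.
SAMPLE_HTML = """<html><head><title>Blue Widgets</title>
<meta name="description" content="Buy blue widgets online."></head>
<body><h1>Blue Widgets</h1></body></html>"""

record = extract_record(SAMPLE_HTML)
print(json.dumps(record))   # JSON serialization
print(to_csv([record]))     # CSV serialization
```

The same record structure could be written to a database table instead; the point is only that extraction and serialization are separate steps.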

Technically, the process involves navigating the Document Object Model (DOM) to locate specific elements via CSS selectors or XPath expressions. In modern web environments, scraping often requires a headless browser, driven by an automation library such as Puppeteer or Playwright, to execute JavaScript and render dynamic content that is not present in the initial HTML response. We at Andres SEO Expert categorize web scraping as a foundational pillar for data-driven technical SEO and competitive intelligence.
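As a rough illustration of locating elements with XPath, the sketch below uses Python's built-in `xml.etree.ElementTree`. Two caveats apply: ElementTree supports only a limited subset of XPath, and it requires well-formed markup, so real-world HTML usually needs a more lenient parser. The product listing and its class names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed product listing used only for this example.
SAMPLE = """<div id="listing">
  <div class="product" data-sku="A1">
    <span class="name">Widget</span><span class="price">9.99</span>
  </div>
  <div class="product" data-sku="B2">
    <span class="name">Gadget</span><span class="price">24.50</span>
  </div>
</div>"""


def extract_products(root):
    """Select every product node, then read fields relative to that node."""
    products = []
    for node in root.findall(".//div[@class='product']"):
        products.append({
            "sku": node.get("data-sku"),
            "name": node.findtext("span[@class='name']"),
            "price": float(node.findtext("span[@class='price']")),
        })
    return products


root = ET.fromstring(SAMPLE)
for product in extract_products(root):
    print(product)
```

Each field is looked up relative to its product node rather than by an absolute path from the document root, which keeps the expressions short and less sensitive to layout changes elsewhere on the page.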

The Real-World Analogy

Imagine a massive physical library where you need to find the price of every business book published in the last decade. Instead of hiring hundreds of people to manually flip through every page and write down numbers on napkins, you deploy a fleet of high-speed digital scanners. These scanners are programmed to ignore the covers, the prefaces, and the indexes, focusing solely on the ‘Price’ field on the back cover. Within minutes, the scanners compile a perfectly organized spreadsheet of every book and its price, allowing you to analyze market trends instantly without ever having to read a single sentence yourself.

Why is Web Scraping Important for SEO?

Web scraping provides the raw data necessary to make informed decisions in a highly competitive search landscape. By scraping Search Engine Results Pages (SERPs), SEO professionals can track keyword volatility, identify emerging competitors, and monitor the presence of rich snippets or local pack features. This level of granularity is essential for understanding how Google’s algorithms are interpreting specific intent categories.

Furthermore, scraping allows for deep competitive analysis. We use it to extract competitor site structures, internal linking patterns, and content metadata. This data reveals gaps in our own strategies and highlights opportunities for technical optimization. It also plays a critical role in backlink auditing, where scraping can verify the live status and rel attributes of thousands of inbound links across diverse domains, ensuring the integrity of a site’s authority profile.
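The backlink check described above can be sketched as follows. This is a simplified example under stated assumptions: the linking page's HTML is a hard-coded string, `example.com` stands in for the audited site, and a link is treated as followed whenever `nofollow` is absent from its `rel` attribute, which ignores other hints such as `sponsored` or `ugc`.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class BacklinkParser(HTMLParser):
    """Collects anchors that point at the target domain or its subdomains."""

    def __init__(self, target_domain):
        super().__init__()
        self.target_domain = target_domain.lower()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href") or ""
        host = (urlparse(href).hostname or "").lower()
        if host == self.target_domain or host.endswith("." + self.target_domain):
            rel_tokens = (attrs.get("rel") or "").lower().split()
            self.links.append({
                "href": href,
                "rel": rel_tokens,
                "follow": "nofollow" not in rel_tokens,
            })


def audit_backlinks(html, target_domain):
    """Return every link to target_domain found in html, with rel details."""
    parser = BacklinkParser(target_domain)
    parser.feed(html)
    parser.close()
    return parser.links


# Hypothetical source of a page that is expected to link to example.com.
LINKING_PAGE = """<p>
<a href="https://example.com/guide">guide</a>
<a href="https://www.example.com/pricing" rel="nofollow sponsored">pricing</a>
<a href="https://other.test/">unrelated</a>
</p>"""

for link in audit_backlinks(LINKING_PAGE, "example.com"):
    print(link)
```

An empty result for a page that is supposed to carry a backlink would indicate that the link is no longer live.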

Best Practices & Implementation

- Utilize Headless Browser Automation: For modern, client-side rendered applications (React, Vue, Angular), use headless browsers to ensure all DOM elements are fully loaded before extraction.

- Implement Intelligent Proxy Rotation: To avoid IP rate-limiting and blocks, employ a pool of residential or mobile proxies and rotate them frequently to mimic organic user behavior.

- Respect Robots.txt and Crawl Delays: Always check the target’s robots.txt file, honor any crawl delay it declares, and space out requests so the scraper does not degrade the target server’s performance.

- Use Resilient Selectors: Avoid brittle, absolute XPaths. Instead, use relative selectors or data attributes that are less likely to break when the website undergoes minor UI updates.
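Two of these practices, checking robots.txt and rotating proxies, can be sketched with the standard library. The robots.txt content, the `my-seo-bot` user agent, and the proxy labels below are all hypothetical, and the sketch only builds a request plan rather than sending traffic; a real crawler would fetch robots.txt from the target site and sleep for the planned delay between requests.

```python
import itertools
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for the target site.
ROBOTS_TXT = """User-agent: *
Crawl-delay: 2
Disallow: /private/
"""


def build_robots(robots_txt):
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def plan_requests(urls, robots, user_agent, proxies, default_delay=1.0):
    """Drop disallowed URLs and assign each remaining URL a proxy and a delay.

    proxies must be a non-empty list; it is cycled in round-robin order.
    """
    delay = robots.crawl_delay(user_agent) or default_delay
    proxy_pool = itertools.cycle(proxies)
    plan = []
    for url in urls:
        if not robots.can_fetch(user_agent, url):
            continue  # respect Disallow rules
        plan.append({"url": url, "proxy": next(proxy_pool), "delay": delay})
    return plan


robots = build_robots(ROBOTS_TXT)
urls = [
    "https://example.com/blog/",
    "https://example.com/private/report",
    "https://example.com/pricing",
]
for step in plan_requests(urls, robots, "my-seo-bot", ["proxy-a", "proxy-b"]):
    print(step)
```

Round-robin is the simplest rotation policy; weighting proxies by recent failures would be a natural extension.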

Common Mistakes to Avoid

One frequent error is failing to handle dynamic content or lazy-loaded images, resulting in incomplete datasets. Another critical mistake is ignoring the legal and ethical implications: scraping sensitive or copyrighted data without authorization can lead to legal repercussions, and aggressive scraping can get your IP addresses blacklisted. Finally, many developers fail to implement robust error handling, causing scripts to crash when encountering unexpected 404 pages or CAPTCHAs, which compromises data continuity.
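One way to keep a script running through these failures is to wrap each request in a retry loop that reports an outcome instead of raising. The sketch below is illustrative only: `fetch` is a hypothetical callable returning a `(status_code, body)` pair, and the CAPTCHA check is a naive substring match that a real scraper would need to replace with something site-specific.

```python
import time


def fetch_with_retries(fetch, url, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url) with exponential backoff and classify the result.

    Returns a dict whose "outcome" is "ok", "not_found", "captcha", or "failed".
    """
    for attempt in range(1, max_attempts + 1):
        try:
            status, body = fetch(url)
        except OSError:
            # Network-level errors (timeouts, resets) are treated as retryable.
            status, body = None, ""
        if status == 200 and "captcha" in body.lower():
            return {"url": url, "outcome": "captcha", "body": None}
        if status == 200:
            return {"url": url, "outcome": "ok", "body": body}
        if status == 404:
            # A missing page will not appear on retry; record it and move on.
            return {"url": url, "outcome": "not_found", "body": None}
        if attempt < max_attempts:
            sleep(base_delay * 2 ** (attempt - 1))
    return {"url": url, "outcome": "failed", "body": None}


def fake_fetch(url):
    """Stand-in for a real HTTP client, used only to demonstrate the outcomes."""
    if url.endswith("/gone"):
        return 404, ""
    if url.endswith("/challenge"):
        return 200, "<html>Please solve this CAPTCHA</html>"
    return 200, "<html>ok</html>"


for path in ("/page", "/gone", "/challenge"):
    result = fetch_with_retries(fake_fetch, "https://example.com" + path)
    print(result["url"], result["outcome"])
```

Because every URL produces a result record, the caller can log gaps in the dataset and re-queue failed or challenged URLs later.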

Conclusion

Web scraping is an indispensable technical discipline that transforms the vast, unstructured web into actionable intelligence for SEO and business growth. When implemented with technical precision and ethical consideration, it provides a significant competitive advantage in search visibility and data-driven decision-making.