Executive Summary

- Combines the low-cost storage of data lakes with the high-performance management and ACID transactions of data warehouses.

- Adds schema enforcement and data versioning on top of raw lake storage, critical for reliable AI model training and LLM fine-tuning.

- Facilitates real-time data streaming and programmatic SEO by unifying batch and stream processing into a single architectural layer.

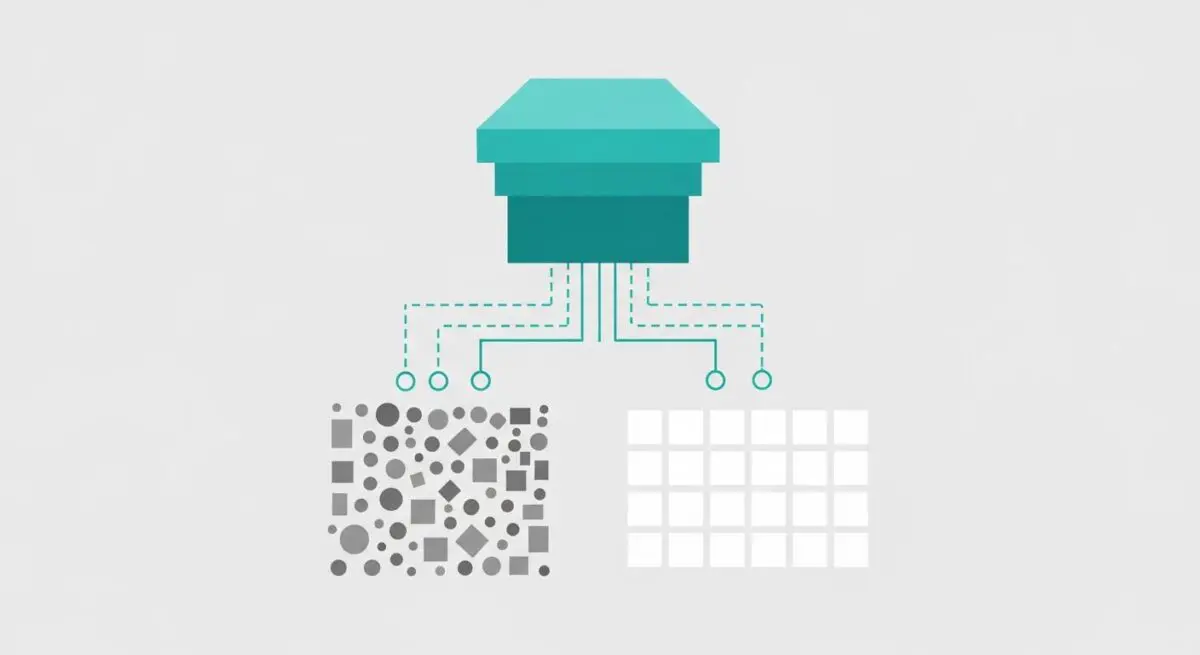

What is a Data Lakehouse?

A Data Lakehouse is a modern data architectural paradigm that unifies the cost-effective, scalable storage of a data lake with the robust data management and transactional capabilities of a data warehouse. It implements a metadata layer on top of raw storage—such as Amazon S3, Azure Blob Storage, or Google Cloud Storage—to support ACID (Atomicity, Consistency, Isolation, Durability) transactions, schema enforcement, and data versioning. This allows organizations to store vast amounts of unstructured, semi-structured, and structured data while maintaining the high-performance query capabilities required for advanced analytics and machine learning.
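To make the idea of a metadata layer concrete, here is a minimal sketch that uses only the Python standard library. It assumes a local directory stands in for object storage, and the `ToyTable` class, its `_log` directory, and its file naming are hypothetical. It is not how Delta Lake, Iceberg, or Hudi are implemented, only an illustration of the principle they share: data files are immutable, and a file becomes visible to readers only once an entry in an append-only log points to it.

```python
from __future__ import annotations

import json
import tempfile
import uuid
from pathlib import Path


class ToyTable:
    """Toy table: immutable data files plus an append-only commit log.

    Hypothetical layout for illustration only; real table formats differ.
    """

    def __init__(self, root: Path) -> None:
        self.root = root
        self.log_dir = root / "_log"
        self.log_dir.mkdir(parents=True, exist_ok=True)

    def latest_version(self) -> int:
        versions = [int(p.stem) for p in self.log_dir.glob("*.json")]
        return max(versions, default=-1)  # -1 means "no commits yet"

    def write_data_file(self, rows: list[dict]) -> str:
        """Step 1: write rows to a new file. Readers cannot see it yet."""
        name = f"part-{uuid.uuid4().hex}.json"
        (self.root / name).write_text(json.dumps(rows))
        return name

    def commit(self, data_file: str) -> int:
        """Step 2: publish the file by creating the next log entry."""
        version = self.latest_version() + 1
        entry = self.log_dir / f"{version:05d}.json"
        # Mode "x" fails if the entry already exists, so two writers
        # cannot both claim the same version number.
        with open(entry, "x") as fh:
            json.dump({"add": data_file}, fh)
        return version

    def read(self, version: int | None = None) -> list[dict]:
        """Return rows from every file committed up to `version` (default: latest)."""
        if version is None:
            version = self.latest_version()
        rows: list[dict] = []
        for v in range(version + 1):
            entry = json.loads((self.log_dir / f"{v:05d}.json").read_text())
            rows.extend(json.loads((self.root / entry["add"]).read_text()))
        return rows


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        table = ToyTable(Path(tmp))
        pending = table.write_data_file([{"id": 1}])
        print(table.read())       # the uncommitted file is not visible
        table.commit(pending)
        print(table.read())       # now the row is part of the table
```

The exclusive-create call is what makes the commit all-or-nothing on a local filesystem. On cloud object storage the equivalent guarantee typically has to come from a conditional write or an external catalog, which this sketch does not model.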

In the context of AI Automations, a Data Lakehouse serves as the centralized repository for both historical logs and real-time event streams. By utilizing open table formats such as Delta Lake, Apache Iceberg, or Apache Hudi, it bridges the gap between data engineering and AI operations. This architecture eliminates data silos and reduces the latency between data ingestion and its availability for automated decision-making engines, Large Language Model (LLM) context injection, or complex data pipelines.

The Real-World Analogy

Imagine a massive, disorganized warehouse filled with thousands of unlabeled boxes, where finding a specific item is slow and requires manual searching. That is a Data Lake. Conversely, a high-end boutique is perfectly organized but has limited space and is extremely expensive to stock. That is a Data Warehouse. A Data Lakehouse is like a smart fulfillment center: it has the massive, low-cost capacity of the warehouse, but every item is tagged with a digital tracker and managed by an automated robotic system. You gain the near-unlimited space of the warehouse with the fast retrieval and precision of the boutique.

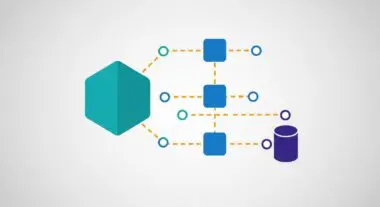

Why is a Data Lakehouse Critical for Autonomous Workflows and AI Content Ops?

For AI Content Ops and autonomous workflows, data consistency is paramount. Because writes to a Data Lakehouse are ACID transactions, the data used to trigger webhooks or populate programmatic SEO templates always reflects a complete, committed state. This prevents “dirty reads,” where an automation might pull incomplete or corrupted data during a concurrent write operation. Furthermore, the architecture supports “Time Travel” (data versioning), allowing developers to audit AI-generated content against the exact state of the table at the time of generation. This is essential for maintaining governance in serverless architectures, where stateless functions need a reliable, high-speed data source to execute complex logic without running into the scaling limits of a traditional relational database.
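The audit pattern described here can be sketched in a few lines. The example below is a simplified illustration, not a real lakehouse client: `VersionedStore`, `generate_page`, and `audit` are hypothetical names, and an in-memory list of snapshots stands in for a versioned table. The idea is that each generated page records the table version it was built from, so it can later be re-rendered from that exact snapshot even after the data has changed.

```python
from dataclasses import dataclass, field


@dataclass
class VersionedStore:
    """In-memory stand-in for a versioned table (hypothetical, not a real API)."""

    snapshots: list = field(default_factory=lambda: [{}])  # version 0 is empty

    def current_version(self) -> int:
        return len(self.snapshots) - 1

    def commit(self, changes: dict) -> int:
        # Build a new snapshot; earlier snapshots are never mutated.
        self.snapshots.append({**self.snapshots[-1], **changes})
        return self.current_version()

    def read(self, version=None) -> dict:
        if version is None:
            version = self.current_version()
        return dict(self.snapshots[version])


def render(data: dict) -> str:
    return f"{data['product']} now costs ${data['price']}"


def generate_page(store: VersionedStore) -> dict:
    """Render content and pin the table version it was generated from."""
    version = store.current_version()
    return {"text": render(store.read(version)), "source_version": version}


def audit(store: VersionedStore, page: dict) -> bool:
    """Re-render from the pinned snapshot and compare with what was published."""
    return render(store.read(page["source_version"])) == page["text"]


if __name__ == "__main__":
    store = VersionedStore()
    store.commit({"product": "Widget", "price": 10})
    page = generate_page(store)
    store.commit({"price": 12})    # the table moves on after publication
    print(audit(store, page))      # the page still matches its source snapshot
```

In a real deployment the pinned version would be a table version or snapshot identifier supplied by the table format. Delta Lake, for instance, exposes reads at a past version through its `versionAsOf` option.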

Best Practices & Implementation

- Implement an Open Table Format: Utilize Delta Lake or Apache Iceberg to enable ACID transactions and schema evolution on your cloud storage layer.

- Decouple Storage and Compute: Ensure your architecture allows you to scale storage independently from query processing to optimize costs for large-scale SEO and AI data sets.

- Enforce Schema Validation: Use the metadata layer to reject malformed or schema-violating payloads at write time, before they enter the lakehouse, to maintain data integrity for downstream AI agents.

- Optimize for Partitioning: Structure your data partitions based on query patterns (e.g., by date or content category) so that queries can skip irrelevant partitions, which minimizes the data scanned and reduces query latency.
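The schema validation and partitioning practices above can be sketched together. This is a minimal, standard-library illustration under stated assumptions: the `SCHEMA` columns, the `published=.../category=...` partition key layout, and the function names are all hypothetical, and a dictionary stands in for partitioned storage. A real table format enforces the schema in its write path and prunes partitions in its query planner.

```python
import json
from collections import defaultdict

# Hypothetical table schema: column name -> required Python type.
SCHEMA = {"url": str, "category": str, "published": str, "word_count": int}


def validate(payload: object) -> dict:
    """Return the payload if it matches SCHEMA exactly, else raise ValueError."""
    if not isinstance(payload, dict):
        raise ValueError("payload must be a JSON object")
    if payload.keys() != SCHEMA.keys():
        raise ValueError(f"expected columns {sorted(SCHEMA)}, got {sorted(payload)}")
    for column, expected in SCHEMA.items():
        value = payload[column]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            raise ValueError(f"{column!r} must be {expected.__name__}")
    return payload


def partition_key(row: dict) -> str:
    """Derive the partition from the columns most queries filter on."""
    return f"published={row['published']}/category={row['category']}"


def ingest(lines):
    """Route valid rows to partitions; collect rejected lines with a reason."""
    partitions = defaultdict(list)
    rejected = []
    for line in lines:
        try:
            # json.JSONDecodeError is a subclass of ValueError.
            row = validate(json.loads(line))
        except ValueError as exc:
            rejected.append((line, str(exc)))
            continue
        partitions[partition_key(row)].append(row)
    return partitions, rejected


def query_by_date(partitions, published):
    """Scan only partitions whose key matches the date (partition pruning)."""
    prefix = f"published={published}/"
    scanned = [key for key in partitions if key.startswith(prefix)]
    rows = [row for key in scanned for row in partitions[key]]
    return rows, len(scanned)
```

Because the date is the leading component of the partition key, a query for a single day touches only that day's partitions and never opens the rest. Choosing partition columns that match the most common filters is what makes this skipping effective.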

Common Mistakes to Avoid

One frequent error is treating the Lakehouse as a simple dump site without defining a clear metadata strategy, leading to “data swamp” conditions despite the advanced architecture. Another mistake is failing to implement proper fine-grained access controls at the metadata layer, which can expose sensitive API keys or proprietary content data to unauthorized automated processes during the ingestion phase.
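As a rough illustration of what fine-grained access control means in practice, the sketch below applies a column-level policy before rows reach a caller. The `POLICY` mapping, the principal names, and `read_as` are hypothetical. In a real lakehouse this enforcement would normally live in the catalog or governance layer rather than in application code.

```python
# Hypothetical column-level policy: principal -> columns it may read.
POLICY = {
    "seo_template_bot": {"url", "title"},
    "billing_service": {"url", "title", "api_key"},
}


def read_as(principal: str, rows: list) -> list:
    """Project each row down to the columns the principal is allowed to see."""
    if principal not in POLICY:
        # Deny by default: an unknown process gets nothing, not everything.
        raise PermissionError(f"no policy defined for {principal!r}")
    allowed = POLICY[principal]
    return [{k: v for k, v in row.items() if k in allowed} for row in rows]
```

The deny-by-default branch is the important design choice: a new automated process added to the pipeline sees no data until a policy is written for it.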

Conclusion

The Data Lakehouse is the foundational infrastructure for scalable AI Automations, providing the reliability of a warehouse with the agility of a lake. It is the essential bridge for turning raw data into actionable intelligence for programmatic SEO and autonomous content operations.