Executive Summary

- Reduces Time to First Byte (TTFB) by serving pre-rendered HTML, bypassing the PHP interpreter and MySQL database queries.

- Enhances server scalability by significantly lowering CPU and RAM utilization during high-concurrency traffic events.

- Optimizes Core Web Vitals, specifically Largest Contentful Paint (LCP), by accelerating the delivery of the initial HTML document.

What is Page Caching?

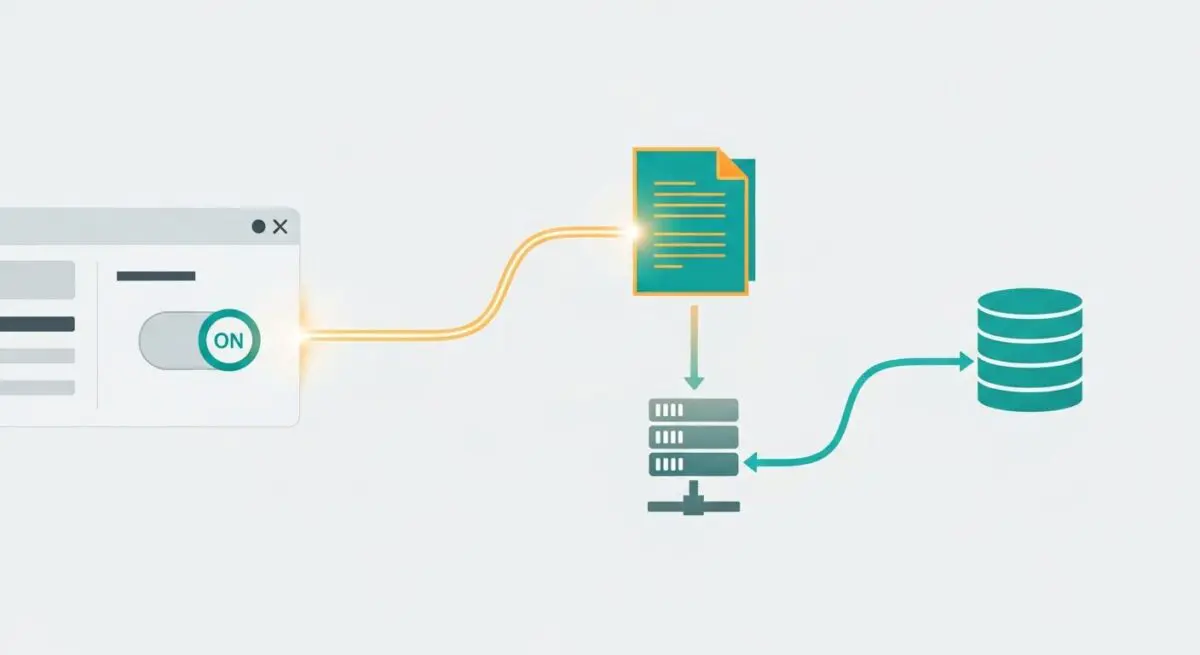

Page caching is an optimization in the WordPress stack that removes the latency of generating the same page dynamically on every request. In a standard, non-cached environment, every browser request triggers a full sequence of work on the server. This sequence, often referred to as the WordPress bootstrap process, involves starting PHP execution, loading the WordPress core files, running active plugins, and resolving the active theme’s template hierarchy. Throughout this lifecycle, numerous SQL queries are sent to the MySQL or MariaDB database to retrieve post data, metadata, and configuration settings. The server then assembles these components into a final HTML document and sends it back to the client.

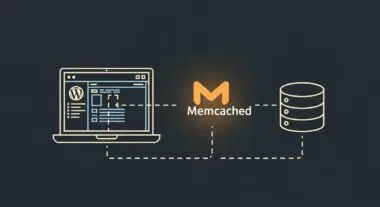

Page caching alters this workflow by capturing the final HTML output of a successful request and storing it on fast storage, such as the server’s local disk or, preferably, RAM. When a subsequent request for the same URI arrives, the caching layer intercepts it and serves the stored HTML. That layer may live inside WordPress as a plugin or in front of it as a reverse proxy. A server-level cache bypasses PHP and the database entirely, while a plugin-based cache may still start PHP but skips most of the WordPress bootstrap and its queries. In both cases the server’s work per request drops from several hundred milliseconds of processing to a few milliseconds of file or memory reads. In enterprise environments, this is often handled by tools such as Nginx FastCGI Cache or Varnish Cache. Object caches such as Redis or Memcached are complementary: they speed up the uncached, dynamic path rather than storing whole pages.
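The store-then-serve workflow described above can be sketched in a few lines of Python. This is a toy in-memory model, not how any particular plugin or reverse proxy is implemented: the `PageCache` class, the `slow_render` function, and the 300-second TTL are all hypothetical names and values chosen for illustration.

```python
import time
from typing import Callable, Dict, Tuple


class PageCache:
    """Toy in-memory page cache keyed by request URI (illustrative only)."""

    def __init__(self, render: Callable[[str], str], ttl_seconds: float = 300.0):
        self._render = render  # stands in for the PHP + database work
        self._ttl = ttl_seconds
        self._store: Dict[str, Tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    def get(self, uri: str) -> str:
        entry = self._store.get(uri)
        now = time.monotonic()
        if entry is not None and now - entry[0] < self._ttl:
            self.hits += 1
            return entry[1]  # cache hit: no rendering at all
        self.misses += 1
        html = self._render(uri)  # cache miss: do the expensive work once
        self._store[uri] = (now, html)
        return html

    def purge(self, uri: str) -> None:
        """Drop one cached page, e.g. after the post behind it is edited."""
        self._store.pop(uri, None)


render_calls = []


def slow_render(uri: str) -> str:
    # Hypothetical stand-in for the full WordPress bootstrap.
    render_calls.append(uri)
    return f"<html><body>Rendered {uri}</body></html>"


cache = PageCache(slow_render)
first = cache.get("/blog/hello-world/")   # miss: rendered and stored
second = cache.get("/blog/hello-world/")  # hit: served from the cache
```

In this sketch the second request never reaches `slow_render`, which is the whole point of the technique. The `purge` method hints at why invalidation matters: without it, an edited post would keep serving the old HTML until the TTL expires.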

The Real-World Analogy

To understand page caching, consider the difference between a custom-order tailor and a high-end ready-to-wear boutique. In a custom-order scenario (dynamic WordPress), every time a customer wants a suit, the tailor must take measurements, select the fabric, cut the pattern, and sew the garment from scratch. This process is time-consuming and limits the number of customers the tailor can serve in a day. Page caching is analogous to the tailor realizing that most customers want the same popular suit in a standard size. The tailor pre-makes fifty of these suits and displays them on a rack. When a customer arrives, they can simply take the suit and leave immediately. The tailor’s expertise (PHP/Database) was only required once to create the initial design and the first batch, leaving them free to handle only the truly unique, custom requests while the boutique serves the majority of the clientele instantly.

How Page Caching Impacts Server Performance & Speed Engineering

Page caching is usually the most effective way to reduce Time to First Byte (TTFB). TTFB is not itself a Core Web Vital, but it is a foundational diagnostic metric that feeds directly into Largest Contentful Paint (LCP). By serving static HTML, the server eliminates most of the “processing” delay, so the browser receives the first byte of data shortly after the TCP handshake and TLS negotiation complete. This early delivery of the HTML document is vital because it contains the references to all other critical assets, such as CSS for the layout and JavaScript for interactivity. When the HTML arrives sooner, the browser’s preload scanner can begin fetching these resources earlier, shifting the entire rendering timeline forward and improving the LCP score.

Beyond individual page speed, page caching is essential for server-side resource management and high-availability engineering. Every dynamic WordPress request occupies a PHP-FPM worker and a portion of the server’s CPU and RAM. On a high-traffic site, the number of concurrent requests can easily exceed the number of available worker processes. Requests then queue up, and once they wait too long the web server starts returning gateway errors such as 502 or 504. Page caching allows the server to handle a much higher volume of concurrent traffic, often by an order of magnitude or more depending on the setup, because serving a static file from memory requires negligible CPU cycles compared to executing the PHP stack. This scalability is particularly important for sites experiencing sudden traffic spikes from social media or news mentions, as it prevents the “slashdot effect” from taking the site offline.
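A rough back-of-the-envelope model makes the worker bottleneck concrete. The sketch below assumes each worker handles one request at a time and that throughput is capped at workers divided by time per request. The figures of 20 workers, 0.4 s per dynamic request, and 0.004 s per cached response are hypothetical, chosen only to match the "several hundred milliseconds" versus "a few milliseconds" contrast described earlier.

```python
def max_requests_per_second(workers: int, seconds_per_request: float) -> float:
    """Upper bound on sustained throughput when each worker serves one request at a time."""
    if workers <= 0 or seconds_per_request <= 0:
        raise ValueError("workers and seconds_per_request must be positive")
    return workers / seconds_per_request


# Hypothetical numbers for illustration only.
dynamic_rps = max_requests_per_second(workers=20, seconds_per_request=0.4)
cached_rps = max_requests_per_second(workers=20, seconds_per_request=0.004)
speedup = cached_rps / dynamic_rps

print(f"dynamic: ~{dynamic_rps:.0f} req/s, cached: ~{cached_rps:.0f} req/s, ratio: ~{speedup:.0f}x")
```

Under these assumptions the ceiling moves from roughly 50 to roughly 5,000 requests per second. Real servers will differ, and a server-level cache does not occupy PHP-FPM workers at all on a hit, so treat this as an intuition aid rather than a capacity plan.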

Furthermore, caching pages at the edge (edge caching) extends these benefits by storing the static HTML on a content delivery network (CDN), a globally distributed set of servers. This shortens the physical distance between the user and the content, reducing network round-trip time and exposure to congestion. When combined with local server-side caching, edge caching creates a multi-layered defense that sustains performance and uptime regardless of the user’s geographic location or the current server load.

Best Practices & Implementation

- Implement Server-Level Caching: For maximum efficiency, utilize Nginx FastCGI Cache or LiteSpeed Cache. These solutions operate at the web server level, meaning the request is satisfied before it ever reaches the PHP-FPM pool, resulting in significantly lower resource consumption than plugin-based solutions.

- Configure Intelligent Cache Purging: Use a caching plugin or server module that supports automatic cache invalidation. This ensures that when a post is updated, the specific cached version of that page is deleted and regenerated, maintaining content freshness without manual intervention.

- Utilize Cache-Control Headers: Properly configure HTTP caching headers, primarily Cache-Control, with Expires as a legacy fallback. These headers instruct both intermediary caches and the user’s browser on how long to store the content, reducing the number of requests that need to reach your origin server.

- Bypass Cache for Authenticated Users: Configure your caching rules to bypass the cache whenever a login or session cookie is present (often called “Bypass on Cookie”). This ensures that logged-in administrators and customers always receive freshly generated pages, and it prevents their private data from being cached and served to anonymous visitors.

- Monitor Cache Hit Ratio: Regularly analyze your server logs or CDN analytics to determine your Cache Hit Ratio. A low ratio indicates that many requests are still hitting the dynamic backend, suggesting that your cache TTL may be too short or that query strings are unnecessarily fragmenting the cache.
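Two of the practices above, bypassing on cookies and monitoring the hit ratio, reduce to small pieces of logic that can be sketched in Python. The function names are hypothetical. WordPress does set a login cookie whose name begins with `wordpress_logged_in_`. The other prefixes listed are commonly used in bypass rules but should be treated as assumptions to verify against the plugins actually installed. The status strings mirror the style of values a cache layer might log, such as HIT, MISS, and BYPASS.

```python
from typing import Iterable, Mapping

# Cookie-name prefixes that should force a cache bypass.
# "wordpress_logged_in_" is the WordPress login cookie; the rest are
# assumptions for illustration and should be checked per site.
BYPASS_COOKIE_PREFIXES = ("wordpress_logged_in_", "wp-postpass_", "comment_author_")


def should_bypass_cache(method: str, cookies: Mapping[str, str]) -> bool:
    """Return True when a request must skip the page cache."""
    if method.upper() not in ("GET", "HEAD"):
        return True  # never serve cached pages for state-changing requests
    return any(name.startswith(BYPASS_COOKIE_PREFIXES) for name in cookies)


def cache_hit_ratio(statuses: Iterable[str]) -> float:
    """Fraction of logged requests that were served from the cache."""
    statuses = list(statuses)
    if not statuses:
        return 0.0
    hits = sum(1 for status in statuses if status.upper() == "HIT")
    return hits / len(statuses)


anonymous = should_bypass_cache("GET", {})
logged_in = should_bypass_cache("GET", {"wordpress_logged_in_abc123": "value"})
ratio = cache_hit_ratio(["HIT", "MISS", "HIT", "BYPASS"])
```

Counting BYPASS entries in the denominator is a deliberate choice in this sketch: it keeps the ratio honest about how much traffic still reaches the dynamic backend, whatever the reason.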

Common Mistakes to Avoid

A frequent error in page caching implementation is the failure to account for dynamic elements such as user-specific greeting bars or shopping cart fragments. If these elements are not loaded via client-side AJAX or assembled through fragment caching such as Edge Side Includes (ESI), the personalized data of one user may be captured in the cache and served to everyone else. Another common mistake is neglecting query strings: by default, many caching systems treat every unique combination of URL parameters as a separate page, which fragments the cache and adds unnecessary server load unless tracking parameters such as UTM codes are explicitly ignored.
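The query-string problem can be illustrated with a small cache-key normalizer. This is a minimal sketch using only Python's standard library. The `cache_key` function and the specific ignore lists (`utm_` prefixes, `fbclid`, `gclid`) are assumptions for illustration, and a real deployment would configure the equivalent rule in its caching layer.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Tracking parameters that do not change the rendered page (assumed list).
IGNORED_PARAMS = {"fbclid", "gclid"}
IGNORED_PREFIXES = ("utm_",)


def cache_key(url: str) -> str:
    """Normalize a URL so tracking parameters do not create separate cache entries."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS and not key.startswith(IGNORED_PREFIXES)
    ]
    # Stable sort by key so ?a=1&b=2 and ?b=2&a=1 share one entry,
    # while repeated keys keep their original relative order.
    kept.sort(key=lambda pair: pair[0])
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


tracked = cache_key("https://example.com/post/?utm_source=news&page=2&fbclid=abc")
plain = cache_key("https://example.com/post/?page=2")
```

Here both URLs map to the same key, so a visitor arriving from a tagged campaign link is served the already-cached page instead of triggering a fresh render.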

Conclusion

Page caching is an indispensable component of modern WordPress architecture, providing the foundation for high-speed delivery and server scalability. By strategically converting dynamic requests into static assets, developers can drastically improve user experience and maintain site stability under heavy load.