Executive Summary

- Edge caching reduces Time to First Byte (TTFB) by storing content on geographically distributed Point of Presence (PoP) servers.

- It minimizes origin server load and bandwidth consumption by offloading request fulfillment to the network edge.

- Advanced implementations utilize stale-while-revalidate and edge compute logic to balance data freshness with delivery speed.

What is Edge Caching?

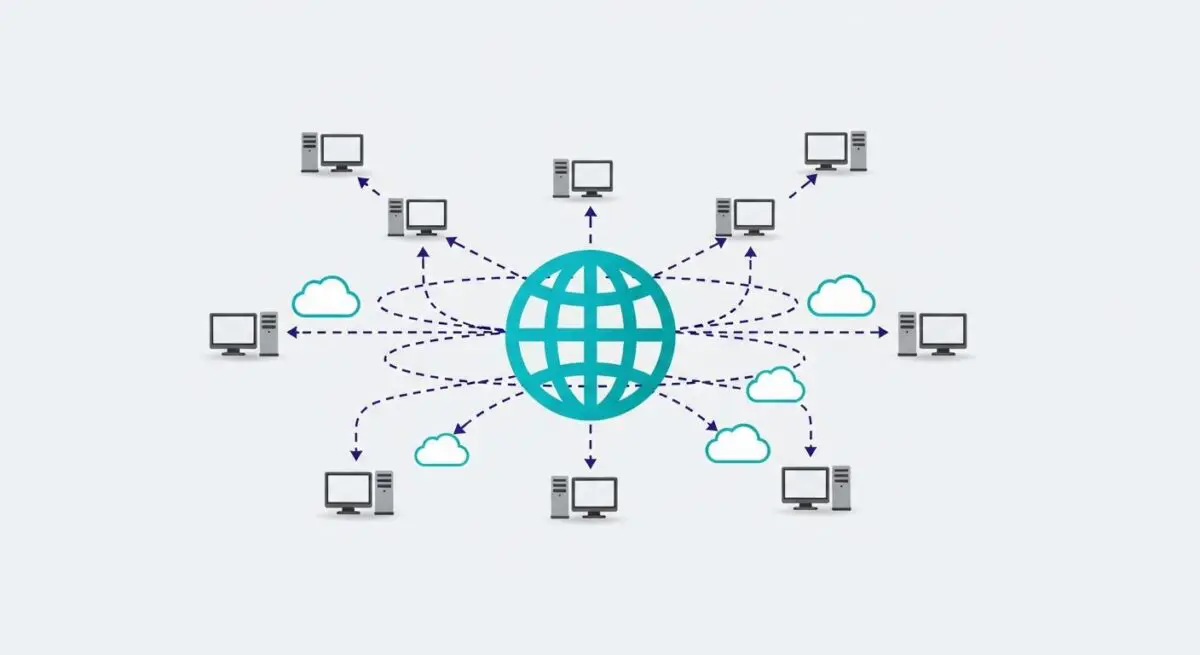

Edge caching is a web performance technique that stores copies of website resources, such as HTML documents, JavaScript files, CSS, and images, on a Content Delivery Network (CDN), a geographically distributed network of servers. Unlike traditional browser caching, which happens on the user’s local device, or server-side caching, which happens at the origin, edge caching takes place at Points of Presence (PoPs) located at the “edge” of the internet, geographically close to end users.

When a user requests a resource, the request is routed to the nearest edge server. If that server holds a fresh cached copy (a cache hit), it responds immediately without contacting the origin server. If it does not (a cache miss), it fetches the resource from the origin, returns it to the user, and stores a copy for subsequent requests. Serving hits locally shortens the physical distance data must travel, which reduces network latency and the number of hops across the global internet backbone.
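The hit/miss flow described above can be modeled in a few lines. This is a simplified, single-PoP sketch under stated assumptions: `EdgeCache`, its fixed TTL, and the `fetch_from_origin` callback are hypothetical names chosen for illustration, not any CDN’s API, and real edge servers usually derive lifetimes from response headers rather than from a constant.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class CachedEntry:
    body: bytes
    stored_at: float  # timestamp from the cache's clock when the copy was stored
    ttl: float        # seconds the copy is treated as fresh


class EdgeCache:
    """Hypothetical single-PoP cache: serve hits locally, fetch misses from origin."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes],
                 ttl: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch_from_origin
        self._ttl = ttl
        self._clock = clock
        self._store: Dict[str, CachedEntry] = {}
        self.origin_requests = 0  # how often the origin actually had to answer

    def get(self, url: str) -> Tuple[bytes, str]:
        now = self._clock()
        entry = self._store.get(url)
        if entry is not None and now - entry.stored_at < entry.ttl:
            # Cache hit: a fresh copy exists, so the origin is never contacted.
            return entry.body, "HIT"
        # Cache miss (absent or expired): fetch from origin once and keep a copy.
        self.origin_requests += 1
        body = self._fetch(url)
        self._store[url] = CachedEntry(body=body, stored_at=now, ttl=self._ttl)
        return body, "MISS"


if __name__ == "__main__":
    fake_now = [0.0]  # controllable clock so the example is deterministic
    cache = EdgeCache(lambda url: b"<html>home</html>", ttl=60,
                      clock=lambda: fake_now[0])
    print(cache.get("/")[1])      # MISS: first request goes to origin
    print(cache.get("/")[1])      # HIT: served from the edge
    fake_now[0] = 61.0
    print(cache.get("/")[1])      # MISS: copy expired after 60 s
    print(cache.origin_requests)  # 2
```

Injecting the clock keeps the example deterministic; in production the monotonic default would apply.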

The Real-World Analogy

Imagine a massive central library located in New York City that holds every book ever written. If a reader in Tokyo wants to borrow a book, they would normally have to wait days for it to be shipped across the ocean. Edge caching is equivalent to the library placing small “mini-branches” in every major city worldwide. These branches stock the most popular books locally. Now, the reader in Tokyo simply walks to their local neighborhood branch and picks up the book in minutes. The central library only gets involved if a specific, rare book isn’t available at the local branch.

Why is Edge Caching Critical for Website Performance and Speed Engineering?

Edge caching is a fundamental pillar of modern speed engineering because it directly improves Time to First Byte (TTFB) and, as a result, Largest Contentful Paint (LCP). When the user’s connection terminates at a nearby PoP, each round trip in the TCP and TLS handshakes is much shorter, so the Round Trip Time (RTT) cost of setting up a connection drops sharply. This matters most for mobile users on high-latency networks, where even small delays can raise bounce rates.
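A back-of-the-envelope model shows why proximity matters. The sketch assumes a brand-new HTTPS connection costs one round trip for the TCP handshake, one for a TLS 1.3 handshake (two for a full TLS 1.2 handshake), and one for the HTTP request and first response byte. The function name and the RTT and server-time figures in the usage lines are hypothetical, not measurements.

```python
def estimate_ttfb_ms(rtt_ms: float, server_ms: float, tls_round_trips: int = 1) -> float:
    """Rough TTFB for a request on a brand-new HTTPS connection.

    Illustrative model only: ignores DNS lookup, TCP slow start,
    TLS session resumption, and connection reuse.
    """
    tcp_round_trips = 1   # SYN / SYN-ACK
    http_round_trips = 1  # request out, first response byte back
    total_round_trips = tcp_round_trips + tls_round_trips + http_round_trips
    return total_round_trips * rtt_ms + server_ms


if __name__ == "__main__":
    # Hypothetical figures: a distant origin vs. a nearby PoP serving a cache hit.
    origin = estimate_ttfb_ms(rtt_ms=150.0, server_ms=50.0)
    edge_hit = estimate_ttfb_ms(rtt_ms=20.0, server_ms=5.0)
    print(origin, edge_hit)  # 500.0 65.0
```

Under these assumptions, 450 ms of the 500 ms origin TTFB is spent on round trips, which is the portion a nearby PoP shrinks.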

Edge caching also provides a layer of “origin shielding.” By absorbing the vast majority of incoming traffic, it keeps the origin server from becoming a bottleneck during traffic spikes. The origin therefore stays responsive for requests that cannot be cached, such as database-backed queries or personalized user sessions, which keeps dynamic responses fast and improves overall site reliability.
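Separating cacheable traffic from personalized traffic starts with deciding which responses a shared edge cache may store at all. The sketch below is a deliberately conservative policy, not a full implementation of the HTTP caching rules in RFC 9111: it refuses responses marked `private` or `no-store`, responses that set cookies, and responses to requests carrying an `Authorization` header unless the response explicitly opts in with `public` or `s-maxage`. The function name and the cookie rule are illustrative assumptions; individual CDNs apply their own defaults.

```python
from typing import Mapping


def safe_to_store_at_edge(request_headers: Mapping[str, str],
                          response_headers: Mapping[str, str]) -> bool:
    """Conservative check for whether a shared edge cache may store a response."""
    # HTTP header names are case-insensitive, so normalize them first.
    req = {k.lower(): v for k, v in request_headers.items()}
    resp = {k.lower(): v for k, v in response_headers.items()}
    # Collect Cache-Control directive names, e.g. "private", "s-maxage".
    directives = {part.strip().split("=", 1)[0].strip().lower()
                  for part in resp.get("cache-control", "").split(",")}

    if "no-store" in directives or "private" in directives:
        return False  # origin explicitly forbids shared caching
    if "set-cookie" in resp:
        return False  # conservative policy: never share a response that sets a cookie
    if "authorization" in req and not ({"public", "s-maxage"} & directives):
        return False  # authenticated request without an explicit opt-in
    return True
```

This only answers whether storing is allowed; how long a stored copy stays fresh is a separate decision.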

Best Practices & Implementation

- Optimize Cache-Control Headers: Use the s-maxage directive to tell shared caches such as edge servers how long to retain a response. It overrides max-age for those caches only, so edge and browser cache lifetimes can be tuned independently.

- Implement Stale-While-Revalidate: Use this directive to let the edge server keep serving a slightly outdated copy for a defined window after it expires while it fetches a fresh version in the background, so users never wait on revalidation.

- Leverage Cache Tags and Instant Purging: Rather than waiting for TTLs to expire, tag cached responses and purge specific groups of pages or assets instantly when content is updated in the CMS. This lets you keep long TTLs without serving outdated content.

- Edge Logic and Personalization: Use Edge Workers or Functions to execute logic at the edge, allowing for the caching of semi-dynamic content by modifying headers or HTML fragments before delivery.
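The s-maxage and stale-while-revalidate practices combine into one decision an edge server makes for every cached copy. The sketch below classifies a stored response by its age, assuming s-maxage takes precedence over max-age for shared caches (as RFC 9111 specifies) and that stale-while-revalidate (RFC 5861) opens a grace window after expiry. The function names and state labels are hypothetical, and directives such as no-cache and must-revalidate are ignored for brevity.

```python
from typing import Dict, Optional


def _directives(cache_control: str) -> Dict[str, Optional[str]]:
    """Parse a Cache-Control value into {directive: value-or-None}."""
    result: Dict[str, Optional[str]] = {}
    for part in cache_control.split(","):
        name, sep, value = part.strip().partition("=")
        if name:
            result[name.strip().lower()] = value.strip().strip('"') if sep else None
    return result


def _seconds(directives: Dict[str, Optional[str]], name: str) -> Optional[int]:
    """Return a directive's value as whole seconds, or None if absent or malformed."""
    value = directives.get(name)
    return int(value) if value is not None and value.isdigit() else None


def edge_freshness(cache_control: str, age_seconds: float) -> str:
    """Classify a cached copy as FRESH, STALE_REVALIDATE, or EXPIRED for an edge cache."""
    d = _directives(cache_control)
    # For shared caches, s-maxage overrides max-age; with neither, treat lifetime as 0.
    lifetime = _seconds(d, "s-maxage")
    if lifetime is None:
        lifetime = _seconds(d, "max-age") or 0
    swr_window = _seconds(d, "stale-while-revalidate") or 0

    if age_seconds < lifetime:
        return "FRESH"             # serve from the edge, no origin contact
    if age_seconds < lifetime + swr_window:
        return "STALE_REVALIDATE"  # serve the stale copy now, refresh in the background
    return "EXPIRED"               # must fetch or revalidate before responding
```

With `public, max-age=60, s-maxage=300, stale-while-revalidate=30`, a copy 100 seconds old is FRESH at the edge even though a browser would already consider it expired, and a copy 310 seconds old is served stale while a refresh runs. A real edge server would also schedule that background fetch; that part is omitted here.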
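Tag-based purging needs an index from each tag to the cached URLs that carry it. The sketch below is a hypothetical in-memory version: `TaggedCache` and its methods are illustrative only, since real CDNs expose tagging through their own response headers and purge APIs, whose names vary by vendor.

```python
from typing import Dict, Iterable, Optional, Set


class TaggedCache:
    """Hypothetical edge cache that can purge every URL sharing a tag."""

    def __init__(self) -> None:
        self._bodies: Dict[str, bytes] = {}
        self._tags_by_url: Dict[str, Set[str]] = {}
        self._urls_by_tag: Dict[str, Set[str]] = {}

    def store(self, url: str, body: bytes, tags: Iterable[str]) -> None:
        self._evict(url)  # clear old tag links if this URL was cached before
        tag_set = set(tags)
        self._bodies[url] = body
        self._tags_by_url[url] = tag_set
        for tag in tag_set:
            self._urls_by_tag.setdefault(tag, set()).add(url)

    def get(self, url: str) -> Optional[bytes]:
        return self._bodies.get(url)

    def purge_tag(self, tag: str) -> int:
        """Remove every cached URL carrying `tag`; return how many were purged."""
        urls = self._urls_by_tag.pop(tag, set())
        for url in urls:
            self._evict(url)
        return len(urls)

    def _evict(self, url: str) -> None:
        """Drop one URL and unlink it from all of its tags."""
        self._bodies.pop(url, None)
        for tag in self._tags_by_url.pop(url, set()):
            linked = self._urls_by_tag.get(tag)
            if linked is not None:
                linked.discard(url)
                if not linked:
                    del self._urls_by_tag[tag]
```

For example, tagging a blog post with both `post:1` and `blog-index` lets a CMS publish hook purge the post and every listing page that shows it with a single `purge_tag("blog-index")` call, while unrelated pages stay cached.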

Common Mistakes to Avoid

One frequent error is failing to distinguish between private and public data, which leads to sensitive user information being cached on shared edge servers and potentially served to other users. Another common mistake is setting excessively long Time-to-Live (TTL) values without a robust purging mechanism, so users keep seeing outdated content. Finally, many developers overlook the cache hit ratio and never analyze why requests bypass the edge and reach the origin unnecessarily.
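Measuring the cache hit ratio, and seeing which paths account for the misses, is the first step in finding requests that reach the origin unnecessarily. The sketch assumes access-log records have already been reduced to `(path, cache_status)` pairs with statuses such as HIT, MISS, and BYPASS; the record format and the function name are hypothetical, since log fields differ between CDNs.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def cache_report(records: Iterable[Tuple[str, str]],
                 top_n: int = 3) -> Tuple[float, List[Tuple[str, int]]]:
    """Return (hit ratio, paths that most often went to the origin)."""
    total = 0
    hits = 0
    origin_paths = Counter()
    for path, status in records:
        total += 1
        if status.upper() == "HIT":
            hits += 1
        else:
            # Simplification: every non-HIT status is counted as an origin request.
            origin_paths[path] += 1
    hit_ratio = hits / total if total else 0.0
    return hit_ratio, origin_paths.most_common(top_n)


if __name__ == "__main__":
    logs = [("/", "HIT"), ("/", "HIT"), ("/app.js", "HIT"), ("/app.js", "HIT"),
            ("/api/cart", "BYPASS"), ("/api/cart", "BYPASS"),
            ("/blog/post-1", "MISS"), ("/", "MISS")]
    ratio, worst = cache_report(logs)
    print(ratio)     # 0.5
    print(worst[0])  # ('/api/cart', 2)
```

A path that tops this list is a candidate for review: it may be legitimately uncacheable, as a cart endpoint usually is, or it may simply be missing the headers that would let the edge serve it.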

Conclusion

Edge caching is an essential architectural requirement for reducing global latency and optimizing Core Web Vitals. By strategically distributing content closer to users, brands can achieve substantially faster load times and a more resilient origin infrastructure.