Executive Summary

- Vector embeddings convert discrete data into high-dimensional numerical vectors, capturing semantic relationships beyond literal keyword matching.

- They underpin the retrieval step of Retrieval-Augmented Generation (RAG), allowing LLMs to access relevant external knowledge.

- Optimizing for vector space proximity is a core pillar of Generative Engine Optimization (GEO) to ensure content visibility in AI-driven results.

What is Vector Embedding?

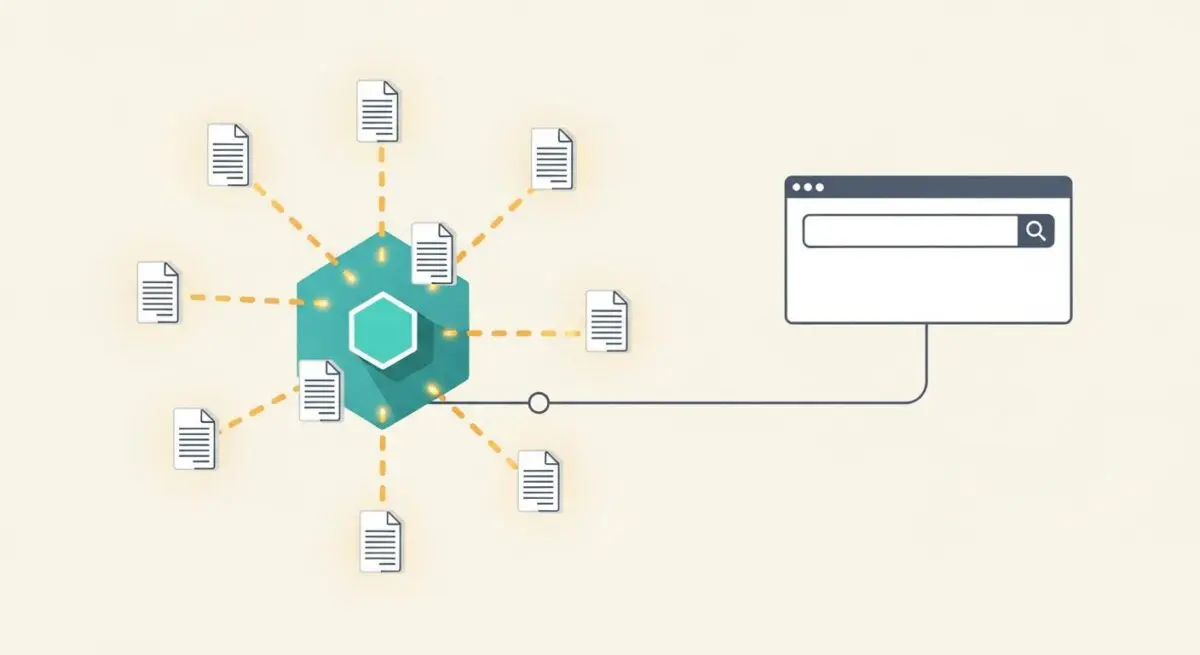

Vector embedding is a data representation technique in which words, sentences, or entire documents are mapped to high-dimensional numerical vectors. Unlike traditional one-hot encoding or keyword-based indexing, embeddings place semantically similar items close together within a continuous vector space. This allows machines to compare the meaning of two pieces of data by measuring how close their vectors are, typically with metrics such as cosine similarity or Euclidean distance.
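
To make the two metrics concrete, here is a minimal sketch in plain Python. The three-dimensional vectors are invented for illustration only; a real embedding model would produce vectors with hundreds or thousands of dimensions, and the variable names are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def euclidean_distance(a, b):
    """Straight-line distance between two equal-length vectors (0.0 = identical)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Hypothetical embeddings, made up for this example (not output from any real model).
dog_food = [0.9, 0.8, 0.1]
canine_nutrition = [0.8, 0.9, 0.2]
tax_law = [0.1, 0.0, 0.9]

# Related concepts: high cosine similarity, small distance.
print(cosine_similarity(dog_food, canine_nutrition))
print(euclidean_distance(dog_food, canine_nutrition))

# Unrelated concepts: low cosine similarity, large distance.
print(cosine_similarity(dog_food, tax_law))
print(euclidean_distance(dog_food, tax_law))
```

Cosine similarity ignores vector length and compares direction only, which is one reason it is a common choice for text embeddings; Euclidean distance is sensitive to magnitude as well.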

In the context of Large Language Models (LLMs) and modern search architectures, these embeddings are generated by neural networks that have been trained on massive datasets. By representing text as a dense array of floating-point numbers, the system can identify nuances, synonyms, and contextual relationships that are invisible to standard lexical search algorithms. This capability is what enables semantic search, where a query for “canine nutrition” can accurately retrieve results for “dog food” without an exact keyword match.
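
The contrast between lexical and semantic matching can be sketched as follows. This is an illustration under stated assumptions: the `EMBEDDINGS` table is a hand-written stand-in for a trained model, and `shares_keyword` is a deliberately naive, hypothetical keyword matcher.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def shares_keyword(query, document):
    """Lexical match: True only if the two texts share at least one exact token."""
    return bool(set(query.lower().split()) & set(document.lower().split()))

# Assumption: hand-written vectors standing in for what a trained model would output.
EMBEDDINGS = {
    "canine nutrition": [0.82, 0.55, 0.05],
    "dog food": [0.88, 0.47, 0.03],
    "car insurance": [0.04, 0.10, 0.99],
}

query = "canine nutrition"
for doc in ("dog food", "car insurance"):
    lexical = shares_keyword(query, doc)
    semantic = cosine_similarity(EMBEDDINGS[query], EMBEDDINGS[doc])
    print(f"{doc!r}: keyword match={lexical}, cosine similarity={semantic:.2f}")
```

Neither document shares a token with the query, so a keyword matcher cannot tell them apart. With these toy vectors, only the similarity score separates the relevant document from the irrelevant one.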

The Real-World Analogy

Imagine a massive, three-dimensional warehouse where every product is stored not by its SKU number, but by its characteristics. Instead of an “Aisle 5” for “Electronics,” products are suspended in space. A smartphone might be positioned near a laptop because they share “computing” traits, but also near a camera because they share “photography” traits. If you ask for something “to take pictures on the go,” the system does not look for those specific words; it simply finds the point in the warehouse where “portable” and “photography” intersect and identifies the closest objects. Vector embedding is the mathematical GPS that determines exactly where each piece of information sits in that warehouse, except that a real embedding space has hundreds or thousands of dimensions rather than three.

Why is Vector Embedding Important for GEO and LLMs?

For Generative Engine Optimization (GEO), vector embeddings are a key mechanism through which AI answer engines such as Perplexity, Gemini, and ChatGPT determine relevance when they retrieve external content. When a user submits a prompt, a retrieval-augmented system converts that prompt into a vector and searches its internal index or a vector database for the most semantically similar content. If your content’s vector representation does not sit close to the vector of the user’s query, it is unlikely to be retrieved for the LLM’s context window, effectively making it invisible to the AI.
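
The retrieval step described above can be sketched as a top-k similarity search. Everything here is an assumption for illustration: the `INDEX` contents and their vectors are invented, `retrieve` is a hypothetical helper, and production engines use approximate nearest-neighbor indexes and their own ranking signals rather than a linear scan.

```python
import heapq
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical index of (passage title, embedding) pairs with made-up vectors.
INDEX = [
    ("Guide to choosing dry dog food", [0.9, 0.1, 0.0]),
    ("Puppy feeding schedule by age", [0.7, 0.4, 0.1]),
    ("Quarterly tax filing checklist", [0.0, 0.1, 0.9]),
    ("How to groom a long-haired cat", [0.3, 0.9, 0.1]),
]

def retrieve(query_vector, index, k=2):
    """Return the k (score, title) pairs most similar to the query, best first."""
    scored = ((cosine_similarity(query_vector, vec), title) for title, vec in index)
    return heapq.nlargest(k, scored)

# Stands in for the vector an embedding model would produce for "canine nutrition".
query_vector = [0.85, 0.2, 0.05]

top = retrieve(query_vector, INDEX, k=2)

# Only the retrieved passages would be placed in the LLM's context window.
context = "\n".join(title for _, title in top)
print(context)
```

Passages that fall outside the top k never reach the model, which is the sense in which poorly aligned content becomes invisible.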

Furthermore, vector embeddings can support entity authority. By consistently producing content that occupies a specific “semantic neighborhood,” a brand can establish itself as a topical authority. This proximity can influence source attribution; content whose vectors lie close to the core concepts of a generated answer is more likely to be retrieved, and therefore more likely to be cited and linked.

Best Practices & Implementation

- Enhance Semantic Density: Focus on topical depth rather than keyword frequency. Use related terminology and concepts that reinforce the primary subject matter to create a stronger vector signal.

- Implement Structured Data: Use Schema.org markup to explicitly define entities and their relationships, providing explicit context that helps search and retrieval systems categorize your content accurately.

- Optimize for Natural Language Intent: Structure content to answer complex, multi-part questions. Since embeddings capture context, content that mirrors the conversational structure of AI prompts performs better.

- Maintain Topical Consistency: Ensure that your site’s internal linking and content clusters are semantically aligned to prevent vector drift, where content scatters across unrelated topics and dilutes your authority in a specific niche.
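
One way to check topical consistency is to compare each page’s embedding with the average (centroid) of the whole cluster. This is a rough sketch, not an established GEO metric: the URLs, vectors, the `off_topic_pages` helper, and the 0.8 threshold are all assumptions chosen for illustration.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

# Hypothetical pages with made-up embeddings (a real model would supply these).
PAGES = {
    "/blog/dog-food-ingredients": [0.9, 0.1, 0.1],
    "/blog/puppy-nutrition": [0.8, 0.2, 0.1],
    "/blog/senior-dog-diets": [0.85, 0.15, 0.05],
    "/blog/crypto-price-predictions": [0.05, 0.1, 0.95],
}

def off_topic_pages(pages, threshold=0.8):
    """List pages whose similarity to the cluster centroid falls below the threshold."""
    center = centroid(list(pages.values()))
    return [url for url, vec in pages.items()
            if cosine_similarity(vec, center) < threshold]

print(off_topic_pages(PAGES))
```

The threshold is arbitrary here; in practice it would need tuning against the embedding model in use, since similarity scores are not comparable across models.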

Common Mistakes to Avoid

A frequent error is keyword stuffing in an attempt to manipulate AI search. This often backfires because it disrupts the natural semantic flow, resulting in a vector that is incoherent or noisy, which AI models may deprioritize. Another mistake is failing to update legacy content; older articles may lack the contextual depth required by modern embedding models, causing them to lose visibility to newer, more semantically rich competitors.

Conclusion

Vector embeddings are the fundamental bridge between human language and machine computation. Mastering their role in semantic retrieval is essential for any GEO strategy aiming for long-term AI search visibility.