Executive Summary

- Semantic retrieval replaces legacy keyword matching with vector-based similarity to identify content based on conceptual intent.

- It is the foundational mechanism for Retrieval-Augmented Generation (RAG), directly influencing source attribution in AI search engines.

- Optimization for semantic retrieval requires a transition from lexical density (repeating target keywords) to entity-based content architecture and genuine semantic depth.

What is Semantic Retrieval?

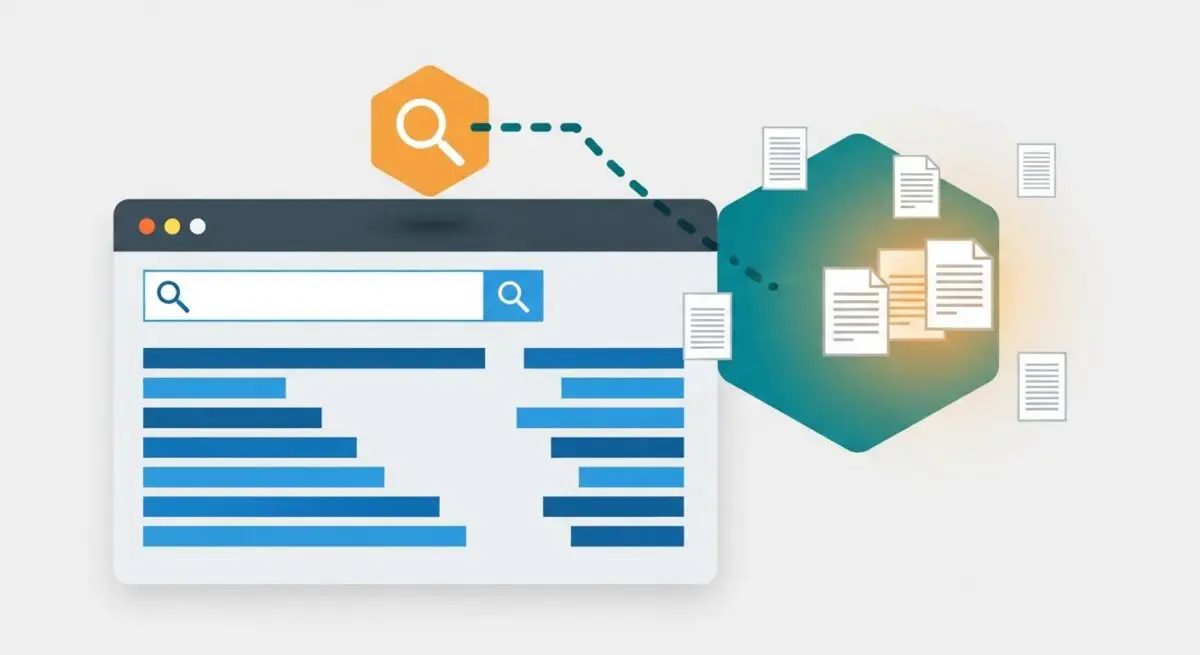

Semantic retrieval is an information retrieval approach that identifies relevant content based on the conceptual meaning and intent of a query rather than literal keyword matching. Unlike traditional lexical search, which relies on ranking functions such as BM25 to score documents by term frequency and inverse document frequency, semantic retrieval uses high-dimensional vector embeddings generated by embedding models, typically transformer-based language models. These embeddings represent text as points in a vector space, where semantically related concepts sit close together regardless of their specific phrasing.

In a technical workflow, a user query is transformed into a dense vector using an embedding model. The retrieval engine then performs a similarity search against a vector database containing indexed content, typically a k-nearest-neighbor (kNN) search, exact or approximate, that ranks stored vectors by a metric such as cosine similarity. This allows the system to surface documents that address the user’s underlying information need, effectively bridging the gap between different terminologies that share the same semantic core.
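
The query-to-results step can be sketched in a few lines of Python. This is a minimal illustration, not a production setup: the three-dimensional vectors and document IDs below are hypothetical stand-ins for real embeddings (which usually have hundreds or thousands of dimensions), and the brute-force loop stands in for the approximate indexes a vector database would normally use.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query_vec, index, k=2):
    """Brute-force kNN: score every document, then keep the k most similar."""
    scored = [(cosine_similarity(query_vec, vec), doc_id)
              for doc_id, vec in index.items()]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]

# Hypothetical embeddings for three indexed pages (assumed values).
INDEX = {
    "reset-password-guide": [0.9, 0.1, 0.0],
    "account-recovery-faq": [0.8, 0.2, 0.1],
    "pricing-page": [0.0, 0.1, 0.9],
}

# Hypothetical embedding of the query "I forgot my login".
QUERY_VEC = [0.85, 0.15, 0.05]

results = top_k(QUERY_VEC, INDEX, k=2)
print(results)
```

With these assumed vectors, the two account-related pages are returned ahead of the pricing page even though none of them shares a keyword with the query; the ranking comes entirely from vector proximity.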

The Real-World Analogy

Imagine walking into a massive, world-class kitchen looking for “something to flip a pancake.” In a traditional lexical search, you would only find tools specifically labeled “pancake flipper.” If a tool was labeled “offset spatula” or “flexible turner,” you would miss it entirely because the words do not match. Semantic retrieval, however, understands the intent of your request. It recognizes the physical properties and function required for the task and directs you to the spatulas, turners, and flat-edged blades, because it understands the concept of “flipping” in a culinary context, even if the word “pancake” is nowhere on the label.
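
The kitchen analogy can be made concrete with a toy comparison. In the sketch below, the feature scores (flat blade, slides under food, holds liquid) and the 1.5 threshold are hand-assigned assumptions chosen purely for illustration; a real system would obtain its vectors from an embedding model rather than from a lookup table.

```python
def tokens(text):
    """Lowercased set of whitespace-separated words."""
    return set(text.lower().split())

LABELS = ["pancake flipper", "offset spatula", "flexible turner", "soup ladle"]
QUERY = "something to flip a pancake"

# Lexical matching: keep labels that share at least one exact word with the query.
lexical_hits = [label for label in LABELS if tokens(label) & tokens(QUERY)]

# Hypothetical feature scores: (flat blade, slides under food, holds liquid).
FEATURES = {
    "pancake flipper": (1.0, 1.0, 0.0),
    "offset spatula": (1.0, 0.8, 0.0),
    "flexible turner": (0.9, 1.0, 0.0),
    "soup ladle": (0.0, 0.1, 1.0),
}
QUERY_FEATURES = (1.0, 1.0, 0.0)  # what flipping a pancake requires (assumed)

def dot(a, b):
    """Dot product of two equal-length tuples."""
    return sum(x * y for x, y in zip(a, b))

# Semantic matching: keep tools whose features align with the task.
semantic_hits = [label for label in LABELS
                 if dot(FEATURES[label], QUERY_FEATURES) >= 1.5]

print(lexical_hits)   # only the label containing the word "pancake"
print(semantic_hits)  # every tool suited to the task, whatever its label
```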

Why is Semantic Retrieval Important for GEO and LLMs?

Semantic retrieval is a core component of Retrieval-Augmented Generation (RAG), which powers modern AI search engines such as Perplexity, ChatGPT Search, and Google’s AI Overviews. For Generative Engine Optimization (GEO), understanding this shift is vital because these systems do not simply present a ranked list of pages; they retrieve passages as context and synthesize an answer from them. If your content lacks semantic depth or fails to establish clear entity relationships, it is less likely to be mapped near the user’s query in the vector space and therefore less likely to be retrieved, which can sharply reduce its visibility in AI-generated responses.
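
To show why retrieval determines what can be cited, here is a minimal sketch of the "augmentation" step in a RAG pipeline. The prompt wording, the `build_prompt` helper, and the example URLs are all hypothetical; the engines named above do not publish their prompt formats, so this only illustrates the general pattern of passing retrieved passages, with their sources, to a generator.

```python
def build_prompt(question, passages):
    """Assemble a grounded prompt: numbered sources first, then the question."""
    lines = ["Answer using only the numbered sources below and cite them like [1]."]
    for number, passage in enumerate(passages, start=1):
        lines.append(f"[{number}] ({passage['url']}) {passage['text']}")
    lines.append(f"Question: {question}")
    return "\n".join(lines)

# Passages a retriever might have returned (hypothetical URLs and text).
retrieved = [
    {"url": "https://example.com/semantic-retrieval",
     "text": "Semantic retrieval matches queries to content by meaning."},
    {"url": "https://example.com/bm25",
     "text": "BM25 scores documents by term overlap."},
]

prompt = build_prompt("How does semantic retrieval differ from keyword search?",
                      retrieved)
print(prompt)
```

Only passages that survive retrieval appear in the prompt, so only those sources are available for the generated answer to cite.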

Furthermore, clear entity connections support attribution. When your content states explicitly how it relates to established industry concepts, AI engines can more reliably attribute information to your brand. This increases the probability of your site being cited as a source, which is the main goal of visibility in the AI search era.

Best Practices & Implementation

- Optimize for Entity Salience: Ensure that primary entities and their attributes are clearly defined in natural language, and support them with Schema.org structured data so that entity relationships are explicit to any system that parses the markup.

- Develop Comprehensive Topic Clusters: Build deep content silos that cover the full breadth of a subject, so that more of your pages produce embeddings close to the queries asked about that topic and your domain becomes a “semantic authority” for it.

- Prioritize Natural Language Processing (NLP) Patterns: Write in a direct, declarative style that states each fact plainly, avoiding ambiguous metaphors that might obscure the core semantic meaning during vectorization.

- Enhance Contextual Relevance: Use descriptive subheadings and introductory summaries that provide clear contextual signals to embedding models during the indexing phase of the RAG pipeline.
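
The last practice above can be illustrated with a heading-aware chunking sketch. Many RAG pipelines split pages into chunks before embedding them; the function below is one hypothetical way to do that, and it assumes (purely for the example) that subheadings are marked with a `## ` prefix. The point it shows is that a descriptive subheading travels with its chunk and becomes part of the text that gets embedded.

```python
def chunk_by_heading(page_text, marker="## "):
    """Split a page into chunks, one per subheading, each prefixed by its heading."""
    chunks = []
    heading, body = "", []
    for raw in page_text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith(marker):
            # A new heading closes the previous chunk, if it has any body text.
            if body:
                chunks.append((heading, " ".join(body)))
            heading, body = line[len(marker):], []
        else:
            body.append(line)
    if body:
        chunks.append((heading, " ".join(body)))
    # Prepend the heading so the embedded text carries its context.
    return [f"{h}\n{t}" if h else t for h, t in chunks]

# Hypothetical page content.
PAGE = """## What is semantic retrieval?
It matches queries to content by meaning.
It relies on vector embeddings.

## Why does it matter?
It decides which passages an AI answer can cite."""

chunks = chunk_by_heading(PAGE)
for chunk in chunks:
    print(chunk)
    print("---")
```

A vague heading such as "Overview" would add little to the chunk, whereas a question-style heading restates the topic in the same words a user might type.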

Common Mistakes to Avoid

A frequent error is continuing to rely on legacy keyword stuffing, which adds noise to the text being embedded and can push your content away from the intended semantic cluster. Another mistake is producing “thin” content that lacks the conceptual depth needed for its embedding to land close to specific queries. Finally, many organizations neglect structured data, forcing the AI to infer relationships between entities rather than reading them from an explicit semantic map that reduces ambiguity.
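
As an illustration of what an explicit semantic map can look like, the sketch below builds Schema.org JSON-LD for a glossary entry using the `DefinedTerm` type. The helper name, the description text, and the glossary URL are hypothetical, and whether a particular AI engine reads this markup is an assumption rather than something established here.

```python
import json

def defined_term_jsonld(name, description, term_set_url):
    """Build a Schema.org DefinedTerm description as a plain dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "DefinedTerm",
        "name": name,
        "description": description,
        "inDefinedTermSet": term_set_url,
    }

markup = defined_term_jsonld(
    "Semantic retrieval",
    "Retrieval of content by conceptual meaning rather than literal keyword matching.",
    "https://example.com/glossary",  # hypothetical glossary URL
)

# Wrap the JSON-LD in the script tag used to embed it in an HTML page.
script_tag = (
    '<script type="application/ld+json">'
    + json.dumps(markup, indent=2)
    + "</script>"
)
print(script_tag)
```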

Conclusion

Semantic retrieval represents a fundamental shift from matching strings to understanding things, requiring GEO professionals to focus on conceptual depth and entity authority to maintain visibility in AI-driven search ecosystems.