Executive Summary

- Centralizes version-controlled LLM instructions to ensure output consistency across multi-agent systems.

- Decouples prompt logic from core application code, facilitating rapid iteration and A/B testing without redeployment.

- Optimizes API payload efficiency by standardizing system-level instructions for stateless automation workflows.

What is Prompt Library?

A prompt library is a centralized, version-controlled repository of optimized text instructions designed to guide Large Language Models (LLMs) in generating specific outputs. In the architecture of AI automations, a prompt library functions as a modular layer that decouples the linguistic logic (the prompt) from the execution logic (the code or workflow). This allows engineers to manage, iterate, and deploy updates to AI behavior across multiple autonomous agents or API endpoints simultaneously without altering the underlying software infrastructure.

Technically, a prompt library often utilizes templating engines to inject dynamic variables into static instruction sets. By treating prompts as managed assets rather than hardcoded strings, organizations can ensure high-fidelity outputs, maintain rigorous security standards (such as preventing prompt injection), and optimize token consumption across complex data pipelines.

The Real-World Analogy

Imagine a global franchise like a high-end coffee chain. Instead of allowing every individual barista to decide how to make a latte, the company maintains a centralized “Recipe Master Manual” at headquarters. When the head office decides to update the recipe to use a different milk-to-espresso ratio, they update the manual once, and every store worldwide immediately adopts the new standard. A prompt library is that master manual for your AI agents, ensuring that whether you are running one workflow or ten thousand, the “recipe” for the output remains consistent and high-quality.

Why is Prompt Library Critical for Autonomous Workflows and AI Content Ops?

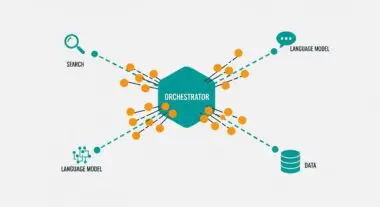

In autonomous workflows, maintaining state and consistency across disparate API calls is a primary challenge. A prompt library enables stateless automation by providing a standardized framework for JSON payloads, ensuring that every request sent to an LLM adheres to the same structural and contextual constraints. This is particularly vital for programmatic SEO and large-scale content operations where thousands of pages are generated; any minor drift in prompt quality can lead to massive data corruption or brand inconsistency.

Furthermore, a prompt library enhances API payload efficiency. By centralizing system-level instructions, developers can more easily manage the context window, reducing unnecessary token overhead and lowering operational costs. It also facilitates sophisticated multi-agent orchestration, where different agents can pull specific, specialized prompts from the library to complete sub-tasks within a larger data pipeline.

Best Practices & Implementation

- Implement Semantic Versioning: Treat prompts like software releases. Use versioning (e.g., v1.0.2) to track changes and allow for easy rollbacks if a new prompt iteration degrades output quality.

- Utilize Dynamic Templating: Use engines like Jinja2 or Liquid to map workflow variables into prompts. This allows for the programmatic injection of real-time data while keeping the core instruction set immutable.

- Centralize Metadata and Analytics: Store performance metrics (token cost, latency, and accuracy scores) alongside each prompt version to identify which instructions yield the highest ROI.

- Establish a Prompt Evaluation Sandbox: Before pushing a prompt to the production library, run it through an automated testing suite to ensure it handles edge cases and adheres to safety protocols.

Common Mistakes to Avoid

The most frequent error is hardcoding prompts directly into application scripts or Zapier/Make modules, which makes global updates impossible and creates technical debt. Another common pitfall is neglecting context window management, where prompts are stored without regard for the token limits of the target model, leading to truncated outputs or increased costs. Finally, many organizations fail to document the intent behind specific prompt iterations, making it difficult for new team members to understand the logic behind complex instruction sets.

Conclusion

A prompt library is the foundational infrastructure required for scalable, professional-grade AI automations, providing the governance and modularity necessary for high-performance content operations.