Executive Summary

- Dynamic allocation of computational resources based on prompt complexity and intent.

- Optimization of API expenditure and latency through multi-model orchestration layers.

- Implementation of high-availability failover mechanisms for mission-critical AI pipelines.

What is Model Routing?

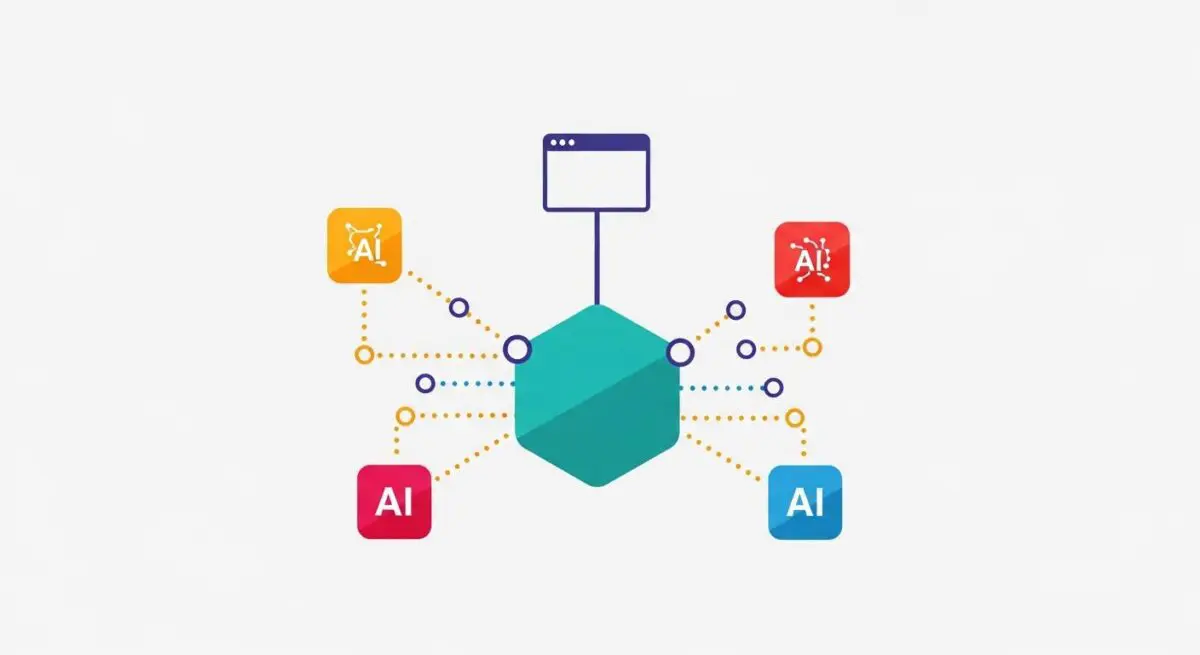

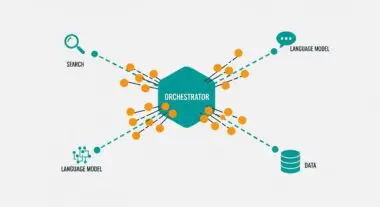

Model routing is a sophisticated architectural pattern in AI engineering where an intermediary logic layer—the router—evaluates incoming prompts or data payloads to determine the most efficient Large Language Model (LLM) for execution. Instead of directing every request to a single high-parameter model, the router analyzes factors such as task complexity, required context window, and semantic intent to dispatch the request to a specific model within a tiered ecosystem.

Technically, model routing functions as a middleware service that balances the trade-offs between inference cost, latency, and output quality. By utilizing smaller, specialized models for routine tasks (like classification or data extraction) and reserving frontier models for complex reasoning, organizations can maintain high performance while significantly reducing token consumption and operational overhead.

The Real-World Analogy

Consider a large-scale logistics company managing a diverse fleet of vehicles. If a customer needs a single envelope delivered across town, the dispatcher sends a bicycle courier rather than a semi-truck. The semi-truck is reserved for heavy, long-haul freight where its capacity is actually required. In this scenario, the dispatcher is the Model Router, ensuring that the most cost-effective and agile resource is used for simple tasks, while the most powerful resource is preserved for high-complexity requirements.

Why is Model Routing Critical for Autonomous Workflows and AI Content Ops?

In the context of autonomous workflows and programmatic SEO, model routing is the primary driver of economic scalability. Without routing, high-volume operations often become financially unsustainable as they default to the most expensive API endpoints for every micro-task. Routing allows for stateless automation to scale horizontally by offloading low-logic tasks to local or open-source models, thereby preserving API rate limits on frontier models for final-stage synthesis.

Furthermore, model routing enhances system resilience. By implementing a routing layer, developers can build redundant failover paths. If a specific provider experiences an outage or high latency, the router can dynamically redirect the payload to an equivalent model from a different provider, ensuring that AI content pipelines and agentic workflows remain operational without manual intervention.

Best Practices & Implementation

- Semantic Intent Classification: Use a lightweight embedding model or a high-speed classifier to categorize prompts before routing to ensure the target model matches the task difficulty.

- Tiered Model Architecture: Organize your model library into tiers (e.g., Tier 1 for reasoning, Tier 2 for transformation, Tier 3 for extraction) to simplify routing logic.

- Latency-Based Load Balancing: Implement logic that monitors the response times of various endpoints and routes traffic away from degraded services in real-time.

- Cost-Threshold Gating: Set strict token-spend limits at the router level to prevent recursive loops or inefficient prompts from exhausting budgets on expensive models.

- Continuous Evaluation: Regularly A/B test the router’s decisions to ensure that the “cheaper” model routes are not resulting in a significant degradation of output quality.

Common Mistakes to Avoid

One frequent error is over-engineering the router itself; if the routing logic takes longer to execute than the actual inference, the latency benefits are neutralized. Another common mistake is failing to account for context window parity, where a router sends a large payload to a model that cannot support the required number of tokens. Finally, many brands neglect to implement fallback logic, leaving the entire automation vulnerable if the primary routed model returns a 429 or 500 error.

Conclusion

Model routing is an essential component for any enterprise-grade AI infrastructure, providing the necessary balance between computational performance and fiscal responsibility. By treating LLMs as interchangeable commodities within a routed network, organizations can build more resilient, faster, and cost-effective autonomous systems.