Executive Summary

- Centralized management of multi-model workflows and agentic logic for complex task execution.

- Optimization of token consumption and latency through intelligent model routing and semantic caching.

- Scalable, stateless automation for programmatic SEO and enterprise AI content operations.

What is AI Orchestration?

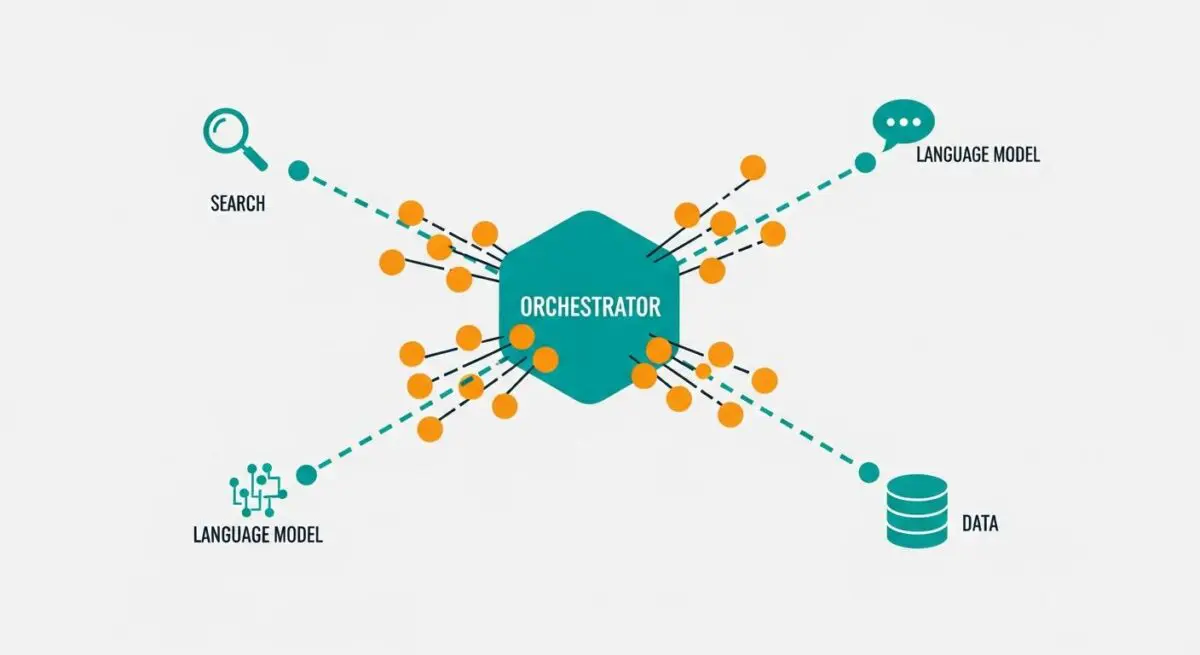

AI Orchestration is the systematic coordination of multiple artificial intelligence models, data sources, and software tools to execute complex, multi-step workflows. Unlike simple prompt-response interactions, orchestration involves managing the logic, state, and data flow between disparate components—such as Large Language Models (LLMs), vector databases, and third-party APIs—to achieve a specific objective autonomously.

At its core, an orchestration layer acts as the brain of an AI system, determining which model to invoke, how to format the input based on previous outputs, and how to handle errors or edge cases. This is essential for building agentic workflows where the system must reason through a task, decompose it into sub-tasks, and execute them in sequence or in parallel.
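To make the three responsibilities concrete (choosing a model, formatting input from the previous output, handling errors), here is a minimal sketch of a sequential orchestration loop. Everything in it is an assumption for illustration: `outline_model`, `draft_model`, and `fallback_model` are stand-ins for real model calls, and `Step` and `run_pipeline` are hypothetical names rather than part of any framework.

```python
from dataclasses import dataclass
from typing import Callable, List


# Hypothetical stand-ins for real model calls: each takes a prompt, returns text.
def outline_model(prompt: str) -> str:
    return f"outline for: {prompt}"


def draft_model(prompt: str) -> str:
    return f"draft based on [{prompt}]"


def fallback_model(prompt: str) -> str:
    return f"fallback output for [{prompt}]"


@dataclass
class Step:
    name: str
    template: str  # prompt template; "{previous}" is filled with the prior output
    model: Callable[[str], str]


def run_pipeline(task: str, steps: List[Step],
                 fallback: Callable[[str], str]) -> dict:
    """Run steps in order, feeding each step's output into the next prompt."""
    state = {"task": task, "outputs": [], "errors": []}
    previous = task
    for step in steps:
        # Format the input for this step from the previous step's output.
        prompt = step.template.format(previous=previous)
        try:
            result = step.model(prompt)
        except Exception as exc:
            # Record the failure and route the same prompt to a fallback model.
            state["errors"].append(f"{step.name}: {exc}")
            result = fallback(prompt)
        state["outputs"].append({"step": step.name, "result": result})
        previous = result
    return state


if __name__ == "__main__":
    pipeline = [
        Step("outline", "Outline: {previous}", outline_model),
        Step("draft", "Draft from: {previous}", draft_model),
    ]
    final_state = run_pipeline("AI orchestration", pipeline, fallback_model)
    for output in final_state["outputs"]:
        print(output["step"], "->", output["result"])
```

A production orchestrator would likely add branching, parallel execution, and retries, but the control flow stays the same: the orchestrator, not the models, decides what runs next and with which input.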

The Real-World Analogy

Imagine a high-end restaurant kitchen. The AI models are the specialized chefs—one for pastry, one for grilling, one for sauces. However, without a Head Chef (the Orchestrator), the kitchen is chaotic. The Head Chef doesn’t cook every dish; instead, they receive the order, tell the sauce chef when to start, ensure the pastry chef has the ingredients from the pantry (data sources), and verify that the final plate is assembled correctly before it reaches the customer. AI Orchestration is that Head Chef, ensuring every specialized part of the system works in harmony to deliver a complete result.

Why is AI Orchestration Critical for Autonomous Workflows and AI Content Ops?

In the context of AI Content Ops and programmatic SEO, AI Orchestration is the difference between a static template and a dynamic, self-improving system. It enables stateless automation, where complex data payloads are processed across multiple serverless functions without losing context: each function holds no state of its own, and the orchestrator carries the “state” of a conversation or data pipeline along with every call. This makes it possible to execute sophisticated tasks like automated content auditing, multi-source data synthesis, and real-time GEO (Generative Engine Optimization) adjustments.
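One way to picture stateless automation is a chain of handlers that keep nothing in memory between calls and receive the full context as a serialized payload. The sketch below assumes JSON as the payload format; `audit_step`, `summarize_step`, and `orchestrate` are hypothetical names, and the "summary" logic is a placeholder where a model call would normally sit.

```python
import json


def audit_step(payload: str) -> str:
    """Stateless handler: everything it needs arrives in the payload."""
    state = json.loads(payload)
    state["word_count"] = len(state["content"].split())
    state["history"].append("audit")
    return json.dumps(state)


def summarize_step(payload: str) -> str:
    """Stateless handler: placeholder for a model call that summarizes content."""
    state = json.loads(payload)
    # Placeholder logic: use the first sentence as the summary.
    state["summary"] = state["content"].split(".")[0] + "."
    state["history"].append("summarize")
    return json.dumps(state)


def orchestrate(content: str) -> dict:
    """The orchestrator owns the state and hands it to each handler in turn."""
    payload = json.dumps({"content": content, "history": []})
    for handler in (audit_step, summarize_step):
        payload = handler(payload)
    return json.loads(payload)


if __name__ == "__main__":
    print(orchestrate("Orchestration coordinates models. It also tracks state."))
```

Because each handler depends only on its input, any of them could run as a separate serverless function; the context survives because it travels inside the payload.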

Furthermore, orchestration makes API usage more efficient. By routing simpler tasks to smaller, cheaper models and reserving complex reasoning for frontier models, architects can scale production while maintaining strict control over token budgets and latency. This granular control is vital for enterprise-grade AI infrastructure where reliability and cost-effectiveness are non-negotiable.
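A routing rule can be as simple as a heuristic on the prompt. The sketch below is one possible approach, not a recommended policy: the tier names, the keyword list in `REASONING_HINTS`, the 200-token threshold, and the rough four-characters-per-token estimate are all assumptions chosen for illustration. A real router might use a classifier or the provider's own tokenizer instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str


# Hypothetical tiers; substitute the models you actually use.
SMALL = ModelTier("small-model")
FRONTIER = ModelTier("frontier-model")

# Assumed keywords that suggest a task needs multi-step reasoning.
REASONING_HINTS = ("analyze", "compare", "plan", "explain why")


def estimate_tokens(text: str) -> int:
    """Rough rule of thumb (about 4 characters per token); not a real tokenizer."""
    return max(1, len(text) // 4)


def route(prompt: str, max_small_tokens: int = 200) -> ModelTier:
    """Send short, simple prompts to the small tier and everything else up."""
    lowered = prompt.lower()
    needs_reasoning = any(hint in lowered for hint in REASONING_HINTS)
    too_long = estimate_tokens(prompt) > max_small_tokens
    return FRONTIER if (needs_reasoning or too_long) else SMALL


if __name__ == "__main__":
    for prompt in (
        "Rewrite this title in sentence case",
        "Compare these two content strategies and plan a migration",
    ):
        print(route(prompt).name, "<-", prompt)
```

Keeping the routing decision in one function makes it easy to log which tier handled each request and to tune the thresholds against real cost and quality data.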

Best Practices & Implementation

- Implement Model Agnosticism: Design your orchestration layer to be provider-agnostic, allowing you to swap LLM providers via API wrappers to avoid vendor lock-in and leverage the best model for specific sub-tasks.

- Utilize Semantic Caching: Integrate a caching layer that stores model responses keyed by the embedding of the prompt and returns a stored response when a new prompt is semantically similar, significantly reducing redundant API calls and lowering operational costs.

- Enforce Strict Schema Validation: Use JSON Schema or Pydantic models to validate the output of every step in the chain, ensuring that downstream processes receive predictable, structured data.

- Establish Observability Hooks: Integrate logging and monitoring at every node of the orchestration flow to track latency, token consumption, and success rates for debugging complex agentic loops.
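As an illustration of the semantic caching practice above, the following sketch looks up a cached response by similarity rather than by exact string match. It is deliberately simplified: `toy_embed` is a bag-of-words stand-in for a real embedding model, the linear scan stands in for a vector database, and the 0.8 threshold is an arbitrary assumption. `SemanticCache` and `cached_call` are hypothetical names.

```python
import math
from collections import Counter
from typing import Callable, List, Optional, Tuple


def toy_embed(text: str) -> Counter:
    """Stand-in for a real embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.8) -> None:
        self.threshold = threshold
        self.entries: List[Tuple[Counter, str]] = []

    def get(self, prompt: str) -> Optional[str]:
        """Return the most similar cached response if it clears the threshold."""
        query = toy_embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((toy_embed(prompt), response))


def cached_call(prompt: str, model: Callable[[str], str],
                cache: SemanticCache) -> str:
    """Call the model only when no sufficiently similar prompt is cached."""
    hit = cache.get(prompt)
    if hit is not None:
        return hit
    response = model(prompt)
    cache.put(prompt, response)
    return response
```

The threshold is the main tuning knob: set it too low and unrelated prompts share answers, too high and the cache rarely hits.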

Common Mistakes to Avoid

One frequent error is over-engineering simple tasks; using a multi-agent orchestration framework for a task that a single prompt or a basic script could solve leads to unnecessary latency and cost. Another critical mistake is ignoring rate limits and concurrency. Without a robust queuing system within the orchestrator, high-volume workflows will eventually trigger HTTP 429 (Too Many Requests) errors, which can stall or fail an entire autonomous pipeline.
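A full queuing system is beyond a short example, but the retry half of rate-limit handling can be sketched in a few lines. The code below assumes a hypothetical `RateLimitError` that your client wrapper raises when a provider responds with HTTP 429; `call_with_backoff` and its default values are illustrative, not taken from any specific SDK.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical error raised by a client wrapper on an HTTP 429 response."""


def call_with_backoff(call: Callable[[], T],
                      max_retries: int = 5,
                      base_delay: float = 1.0,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry a rate-limited call with exponential backoff and jitter."""
    for attempt in range(max_retries + 1):
        try:
            return call()
        except RateLimitError:
            if attempt == max_retries:
                raise  # Out of retries: surface the error to the orchestrator.
            # Delay doubles each attempt (1s, 2s, 4s, ...) plus up to 10% jitter
            # so that parallel workers do not all retry at the same instant.
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay * 0.1))
    raise ValueError("max_retries must be >= 0")
```

The `sleep` parameter is injectable so the function can be tested without waiting. In a high-volume pipeline this would typically be paired with a concurrency limit or a queue so that retries do not pile up behind one another.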

Conclusion

AI Orchestration is the foundational architecture required to transition from experimental AI scripts to scalable, autonomous enterprise solutions. By effectively managing model interactions and data flows, organizations can build resilient, cost-effective AI operations.