Executive Summary

- Enables systematic tracking of prompt iterations to prevent regression in LLM output quality.

- Facilitates A/B testing and rollback capabilities within production-grade AI content pipelines.

- Decouples prompt logic from application code, allowing for independent deployment and optimization cycles.

What is Version Control for Prompts?

Version Control for Prompts is the systematic practice of managing, tracking, and auditing iterations of the instructions given to Large Language Models (LLMs). Much like traditional software versioning (e.g., Git), this discipline ensures that every modification to a prompt is documented, reversible, and testable. In AI automations, it means treating prompts as first-class code assets rather than static strings, so developers can keep a history of changes alongside the performance metrics recorded for each one.

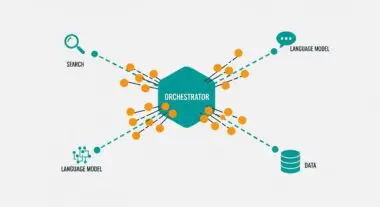

At its core, this practice addresses reproducibility in the face of non-deterministic LLM output. With versioning in place, engineering teams can keep an automated workflow stable even as underlying models are updated or prompt logic is refined. This means tracking not only the text of the prompt but also the associated sampling parameters, such as temperature and top_p, and the specific model version, so that any output can be traced back to the exact configuration that produced it. Pinning these values narrows the sources of variation across the automation stack; it does not by itself make sampled outputs identical from run to run.
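As a rough illustration of tracking the prompt text together with its settings, the sketch below stores one iteration as an immutable record and derives a short content fingerprint from it. The class name `PromptVersion`, its fields, the `fingerprint` helper, and the model name `example-model-2024-01` are all assumptions made for this example, not part of any particular tool.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    """One immutable prompt iteration plus the settings it was tuned with."""
    name: str
    version: str
    text: str
    model: str
    temperature: float
    top_p: float

    def fingerprint(self) -> str:
        # Hash only the fields that influence output, in a canonical order,
        # so identical content always yields the same fingerprint.
        content = {
            "text": self.text,
            "model": self.model,
            "temperature": self.temperature,
            "top_p": self.top_p,
        }
        payload = json.dumps(content, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


# Hypothetical records: same text, different temperature.
v1 = PromptVersion("summarize", "1.0.0", "Summarize the text in 3 bullets.",
                   "example-model-2024-01", 0.2, 1.0)
v2 = PromptVersion("summarize", "1.0.1", "Summarize the text in 3 bullets.",
                   "example-model-2024-01", 0.7, 1.0)

print(v1.fingerprint() == v2.fingerprint())  # the temperature change alters the hash
```

Logging the fingerprint next to each generated output is one way to tell, after the fact, which configuration produced it, even if the version label was reused by mistake.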

The Real-World Analogy

Imagine a high-end restaurant that uses a digital recipe book. Every time the head chef decides to slightly adjust the amount of salt or the cooking temperature for a signature dish, the system saves a new version of that recipe. If customers suddenly start complaining that the dish is too salty, the chef does not have to guess what changed; they can simply look at the version history and revert to the exact recipe used the previous week. Version Control for Prompts provides this same safety net for AI, allowing businesses to roll back to a previous version of an AI’s instructions if a new update causes the automation to produce lower-quality results.

Why is Version Control for Prompts Critical for Autonomous Workflows and AI Content Ops?

In autonomous workflows, prompts act as the logic layer that directs data processing and content generation. Without version control, these workflows are susceptible to prompt drift, where small, unreviewed edits change the shape of the output and cause cascading failures in downstream JSON parsing or API integrations. For programmatic SEO and AI content operations, versioning is essential for maintaining brand voice and structural integrity across thousands of generated pages. Because the prompt logic is decoupled from the execution engine, the same pipeline can load any pinned version on demand, which makes it practical to scale and to run parallel A/B tests that determine which prompt version yields the highest conversion or engagement rates.
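One common way to run such parallel A/B tests is to assign each page or user to a prompt version deterministically, so the same unit always sees the same version. The sketch below does this by hashing an identifier; the function name `assign_prompt_version`, the experiment label, and the version strings are hypothetical.

```python
import hashlib


def assign_prompt_version(unit_id: str, versions: list[str], experiment: str) -> str:
    """Deterministically map a page or user ID to one prompt version."""
    if not versions:
        raise ValueError("at least one version is required")
    # Salting with the experiment name keeps assignments independent
    # between experiments that share the same IDs.
    digest = hashlib.sha256(f"{experiment}:{unit_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(versions)
    return versions[bucket]


arms = ["v1.0.2", "v1.1.0"]
chosen = assign_prompt_version("page-1042", arms, experiment="meta-description-test")
print(chosen)
```

Because the assignment depends only on the ID and the experiment name, no assignment table needs to be stored, and results can be attributed to a version after the fact.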

Best Practices & Implementation

- Decouple Prompts from Code: Store prompts in external configuration files or a dedicated Prompt Management System (PMS) rather than hardcoding them within application logic to allow for independent updates.

- Use Semantic Versioning: Apply a versioning schema (e.g., v1.0.2) to every prompt iteration, ensuring that API calls can specifically request a pinned version to maintain output consistency.

- Implement Automated Evals: Integrate evaluation frameworks that automatically test new prompt versions against a gold-standard dataset before they are deployed to production environments.

- Log Metadata and Sampling Parameters: Always store the model name, temperature, and max tokens alongside the prompt text, as these settings significantly influence the final output.
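The practices above can be sketched together in a few dozen lines. The example below is a minimal in-memory registry, assuming a `vMAJOR.MINOR.PATCH` version format; `PromptRecord`, `PromptRegistry`, `parse_version`, and `pass_rate` are hypothetical names, and `generate` stands in for whatever function actually calls the model.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class PromptRecord:
    text: str
    model: str          # model identifier the prompt was tuned against
    temperature: float
    max_tokens: int


def parse_version(version: str) -> tuple[int, int, int]:
    """Turn 'v1.0.2' into (1, 0, 2) so versions compare numerically."""
    major, minor, patch = version.lstrip("v").split(".")
    return int(major), int(minor), int(patch)


class PromptRegistry:
    """Hypothetical in-memory store; a real one might be files in Git or a database."""

    def __init__(self) -> None:
        self._store: dict[str, dict[str, PromptRecord]] = {}

    def register(self, name: str, version: str, record: PromptRecord) -> None:
        parse_version(version)  # raises ValueError on a malformed version string
        versions = self._store.setdefault(name, {})
        if version in versions:
            raise ValueError(f"{name} {version} already exists; publish a new version")
        versions[version] = record

    def get(self, name: str, version: Optional[str] = None) -> tuple[str, PromptRecord]:
        versions = self._store[name]
        if version is None:  # no pin given: fall back to the highest version
            version = max(versions, key=parse_version)
        return version, versions[version]


def pass_rate(generate: Callable[[PromptRecord, str], str],
              record: PromptRecord,
              gold: list[tuple[str, str]]) -> float:
    """Fraction of gold cases whose expected substring appears in the output."""
    hits = sum(1 for user_input, expected in gold
               if expected in generate(record, user_input))
    return hits / len(gold)


registry = PromptRegistry()
registry.register("product_description", "v1.0.2",
                  PromptRecord("Write a product description.", "example-model", 0.2, 300))
registry.register("product_description", "v1.0.10",
                  PromptRecord("Write a concise product description.", "example-model", 0.2, 300))

pinned_version, pinned = registry.get("product_description", "v1.0.2")
latest_version, latest = registry.get("product_description")
```

Versions are compared as integer tuples rather than strings, so `v1.0.10` correctly ranks above `v1.0.2`. A deployment script could call `pass_rate` on a candidate record and refuse to promote it below a chosen threshold; substring matching is only a placeholder for whatever scoring the evaluation actually needs.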

Common Mistakes to Avoid

One frequent error is failing to synchronize prompt versions with model updates: a prompt optimized for GPT-3.5 may behave differently, or fail outright, when applied to GPT-4 without being re-evaluated. Another common mistake is the lack of a rollback strategy, which leaves teams unable to quickly restore service when a refined prompt produces unexpected hallucinations or formatting errors in a live production environment.
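A rollback strategy does not need to be elaborate. One minimal approach, sketched below under the assumption that prompt versions are immutable and addressed by label, is to keep a pointer to the active version plus the history of earlier promotions. The class name `ActiveVersion` and its methods are illustrative only.

```python
class ActiveVersion:
    """Tracks which prompt version is live and allows stepping back."""

    def __init__(self, initial: str) -> None:
        self._history = [initial]

    @property
    def current(self) -> str:
        return self._history[-1]

    def promote(self, version: str) -> None:
        # Promotion only moves the pointer; older versions stay available.
        self._history.append(version)

    def rollback(self) -> str:
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self.current


live = ActiveVersion("v1.0.2")
live.promote("v1.1.0")        # new prompt goes live
restored = live.rollback()    # formatting errors appear, so step back
print(restored)               # v1.0.2
```

Since rolling back only changes which label the pipeline requests, service can be restored without redeploying application code.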

Conclusion

Version Control for Prompts is a fundamental requirement for building resilient, scalable AI automation architectures that require high-fidelity outputs and rigorous audit trails.