Executive Summary

- Webhook integration facilitates event-driven communication between disparate software systems via HTTP POST requests, enabling real-time data synchronization.

- In the context of AI and RAG systems, webhooks are a common mechanism for keeping vector databases up to date and for triggering agentic workflows when source data changes.

- A well-designed webhook architecture supports Generative Engine Optimization (GEO) by helping the sources that LLMs retrieve from reflect current, authoritative data.

What is Webhook Integration?

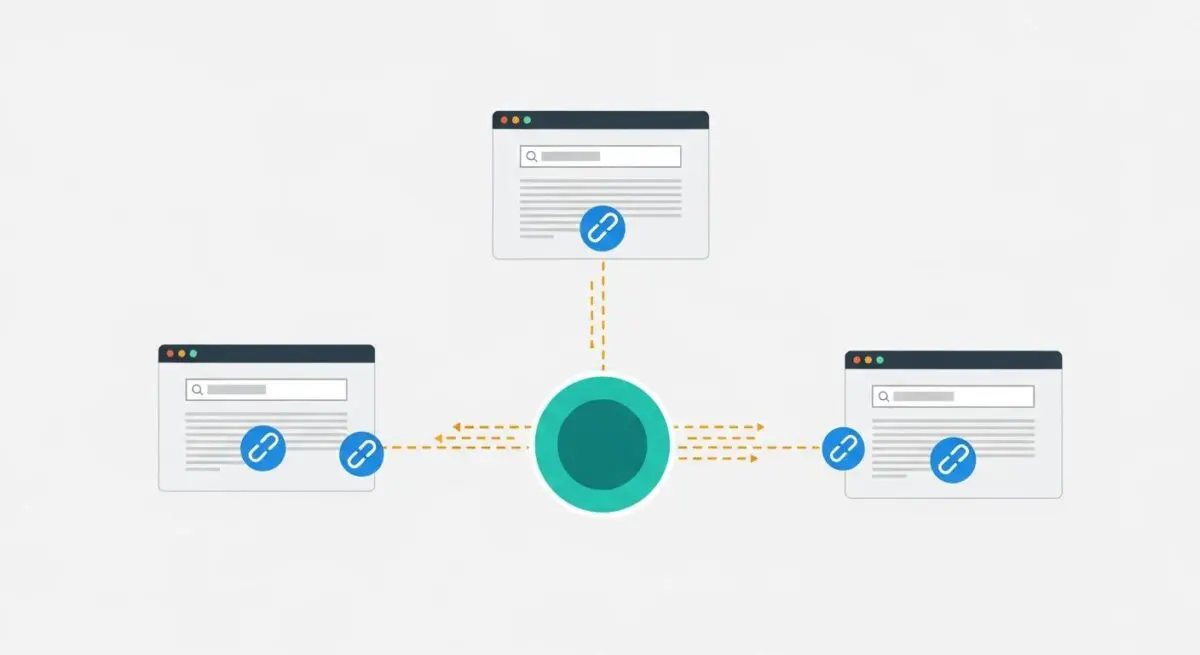

Webhook integration is a method of augmenting or altering the behavior of a web application through custom callbacks. Technically, a webhook is an HTTP request (almost always a POST) that a source system sends when a specific event occurs, delivering a data payload to a destination URL registered by the receiver. Traditional API integrations rely on polling, in which the client repeatedly requests data to check for updates. Webhooks invert this communication model: the source notifies the client instead. This event-driven architecture pushes data as soon as the event occurs, which lowers latency and eliminates the wasted requests that polling generates when nothing has changed.
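To make the mechanics concrete, here is a minimal Python sketch of a webhook delivery from the source system's side: an HTTP POST carrying a JSON payload to the subscriber's URL. It uses only the standard library. The destination URL, the `content.updated` event name, and the `{"event": ..., "data": ...}` envelope are hypothetical, because each provider defines its own payload format.

```python
import json
import urllib.request


def build_webhook_request(destination_url: str, event_type: str, data: dict) -> urllib.request.Request:
    """Build (without sending) the HTTP POST a source system would deliver for one event."""
    payload = {"event": event_type, "data": data}  # hypothetical envelope format
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        destination_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical subscriber URL and event. A real sender would pass `req` to
# urllib.request.urlopen(req, timeout=10); sending is omitted so the sketch runs offline.
req = build_webhook_request("https://example.com/hooks/cms", "content.updated", {"id": "doc-42"})
```

Unlike polling, the receiver never issues a request of its own; it only exposes the URL and responds when a delivery arrives.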

In the landscape of Artificial Intelligence and Large Language Models (LLMs), webhook integration acts as the connective tissue between static models and dynamic data environments. We at Andres SEO Expert use webhooks to bridge the gap between content management systems (CMS) and Retrieval-Augmented Generation (RAG) pipelines. With webhooks in place, an AI system can receive near-instant notice of content modifications, user interactions, or external market shifts, so the knowledge base it retrieves from stays synchronized with the source of truth in near real time.
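A minimal sketch of such a CMS-to-RAG bridge follows. It assumes the CMS sends events named `content.created`, `content.updated`, and `content.deleted`, each with `id` and `text` fields; these names are hypothetical. A plain dictionary stands in for the vector database and `embed` for an embedding model, so the example runs without external services.

```python
from typing import Callable

# Hypothetical stand-in for a vector database: doc ID -> stored text and vector.
Index = dict[str, dict]


def handle_cms_event(event: dict, index: Index, embed: Callable[[str], list[float]]) -> str:
    """Apply one CMS webhook event to a retrieval index and return the action taken."""
    event_type = event["event"]
    doc = event["data"]
    doc_id = doc["id"]
    if event_type in ("content.created", "content.updated"):
        # Re-embed the changed document so retrieval sees the new text immediately.
        index[doc_id] = {"text": doc["text"], "vector": embed(doc["text"])}
        return "upserted"
    if event_type == "content.deleted":
        # Remove stale content so it can no longer be retrieved or cited.
        index.pop(doc_id, None)
        return "deleted"
    return "ignored"  # events this pipeline does not care about


# Usage with a placeholder "embedding" (the text length), not a real model:
index: Index = {}
action = handle_cms_event(
    {"event": "content.updated", "data": {"id": "doc-42", "text": "New pricing"}},
    index,
    embed=lambda text: [float(len(text))],
)
```

Handling deletions explicitly matters as much as handling updates: without it, the retrieval index keeps serving content that no longer exists on the site.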

The Real-World Analogy

Imagine you are waiting for an important package to arrive at your home. In a traditional API polling scenario, you would have to walk to your front door every ten minutes to check if the package is on the porch, wasting time and energy regardless of whether the package has arrived. Webhook integration is equivalent to having a smart doorbell: you stay inside and focus on your work, and the moment the delivery person presses the button, a notification is sent directly to your phone. You only act when an event has actually occurred, ensuring maximum efficiency and immediate awareness.

Why is Webhook Integration Important for GEO and LLMs?

For Generative Engine Optimization (GEO), the freshness and accuracy of data are paramount. AI search and answer engines such as Perplexity or ChatGPT's search features rely on indexed or retrieved data to provide citations and answers. Webhook integration shortens the path between an update and its availability: when a brand updates its technical documentation or product specifications, a webhook can immediately trigger re-publishing, re-indexing, and sitemap updates in the systems the brand controls, so the new version is in place the next time crawlers and retrieval systems fetch it. This reduces the ‘knowledge gap’, the time between a real-world update and its reflection in LLM outputs.

Webhooks are also critical for AI agent autonomy. When an agent is tasked with monitoring a specific dataset, webhooks allow it to remain idle until a relevant trigger occurs, preserving computational resources. The same event-driven approach supports source attribution: by making the most recent data available promptly through webhook-triggered updates, a brand may improve its chances of being cited as the primary, authoritative source by generative engines that weigh recency and precision.
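The "dormant until triggered" pattern can be sketched with Python's `asyncio`: the agent awaits a queue and consumes no CPU until the webhook endpoint puts an event on it. The agent loop, the `dataset.changed` event, and the `None` shutdown sentinel are illustrative assumptions, not any particular agent framework's API.

```python
import asyncio


async def agent(events: asyncio.Queue, handled: list) -> None:
    """Hypothetical agent loop: suspended on the queue until a webhook event arrives."""
    while True:
        event = await events.get()  # suspends here; no polling, no busy-waiting
        if event is None:           # shutdown sentinel used only in this sketch
            events.task_done()
            break
        handled.append(f"re-evaluated {event['data']['id']}")  # the agent's real work goes here
        events.task_done()


async def main() -> list:
    events: asyncio.Queue = asyncio.Queue()
    handled: list = []
    task = asyncio.create_task(agent(events, handled))
    # In a deployed system, the webhook endpoint would call events.put_nowait(payload).
    events.put_nowait({"event": "dataset.changed", "data": {"id": "prices-eu"}})
    events.put_nowait(None)
    await task
    return handled


result = asyncio.run(main())
```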

Best Practices & Implementation

- Implement Robust Security Protocols: Use HMAC (Hash-based Message Authentication Code) signatures to verify that incoming webhook payloads were sent by a party holding the shared secret and have not been tampered with in transit. Compute the signature over the raw request body and compare it in constant time.

- Ensure Idempotency: Design your receiving endpoint to handle the same webhook notification multiple times without unintended side effects, protecting against network retries or duplicate transmissions.

- Optimize Payload Structure: Transmit only the necessary data fields or a unique identifier to minimize bandwidth and processing time, allowing the receiver to fetch additional details via a standard API call if required.

- Manage Rate Limiting and Concurrency: Implement a queuing system (such as RabbitMQ or Amazon SQS) to ingest webhook data, preventing your server from being overwhelmed during high-volume event bursts.
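The HMAC check from the first practice can be sketched with Python's standard `hmac` and `hashlib` modules. This assumes a hex-encoded HMAC-SHA256 over the raw body; real providers differ in header name, encoding, and prefixes (some send `sha256=<hex>`), so treat the format as an assumption and follow your sender's documentation.

```python
import hashlib
import hmac


def sign(secret: bytes, body: bytes) -> str:
    """Hex HMAC-SHA256 of the raw request body (what the sender computes)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_signature(secret: bytes, body: bytes, received_signature: str) -> bool:
    """Recompute the signature over the exact raw bytes and compare in constant time."""
    expected = sign(secret, body)
    # compare_digest avoids leaking how many leading characters matched via timing.
    return hmac.compare_digest(expected, received_signature)
```

Verify against the raw bytes as received, before any JSON parsing: re-serializing a parsed payload can change whitespace or key order and break the signature.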
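Idempotency is usually implemented by recording a unique event ID supplied by the sender and skipping any ID already processed. The sketch below assumes each payload carries an `id` field and uses an in-memory set and a running `balance` as a stand-in side effect; both are hypothetical.

```python
class IdempotentReceiver:
    """Processes each webhook event at most once, keyed by a sender-supplied event ID."""

    def __init__(self) -> None:
        # In production this would be a database table or a cache entry with a TTL.
        self.seen_ids: set = set()
        self.balance = 0  # example side effect that must not be applied twice

    def handle(self, event: dict) -> bool:
        """Return True if the event was applied, False if it was a duplicate."""
        event_id = event["id"]
        if event_id in self.seen_ids:
            return False  # duplicate delivery (e.g. a retry): acknowledge, do nothing
        # In a real system, apply the effect and record the ID in one transaction.
        self.balance += event["data"]["amount"]
        self.seen_ids.add(event_id)
        return True
```

A duplicate should still receive a 2xx response; otherwise the sender treats it as a failure and keeps retrying.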
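The "thin payload" practice can be sketched as a notification that carries only an identifier, with the receiver fetching the full record itself. Here `fetch_resource` is a hypothetical placeholder for an authenticated GET against the source system's API, and the `resource_id` field name is assumed.

```python
import json
from typing import Callable


def handle_thin_notification(body: bytes, fetch_resource: Callable[[str], dict]) -> dict:
    """The webhook carries only an ID; full details come from a follow-up API call."""
    notification = json.loads(body)
    resource_id = notification["resource_id"]  # hypothetical field name
    # fetch_resource stands in for a GET to the source API (e.g. /products/<id>).
    return fetch_resource(resource_id)
```

A side benefit of this design is that the receiver always reads the resource's current state, so a delayed or out-of-order notification cannot overwrite newer data with a stale snapshot.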
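The queuing practice comes down to decoupling acknowledgment from processing: the endpoint enqueues and returns immediately, while a worker drains the queue at its own pace. In this sketch Python's in-process `queue.Queue` stands in for a broker such as RabbitMQ or Amazon SQS, and `webhook_endpoint` is a hypothetical handler, not a web framework's API.

```python
import queue
import threading

# queue.Queue stands in for a message broker (RabbitMQ, Amazon SQS) in this sketch.
ingest_queue: queue.Queue = queue.Queue()
processed: list = []


def webhook_endpoint(payload: dict) -> int:
    """Runs per incoming request: enqueue the payload and acknowledge right away."""
    ingest_queue.put(payload)
    return 202  # Accepted; the slow work happens in the worker, not in the request


def worker() -> None:
    """Drains the queue one item at a time, so bursts cannot overload processing."""
    while True:
        item = ingest_queue.get()
        if item is None:  # shutdown sentinel used only in this sketch
            ingest_queue.task_done()
            break
        processed.append(item["id"])  # embedding, database writes, etc. would go here
        ingest_queue.task_done()


consumer = threading.Thread(target=worker)
consumer.start()
statuses = [webhook_endpoint({"id": f"evt-{i}"}) for i in range(3)]  # simulated burst
ingest_queue.put(None)
consumer.join()
```

The number of workers sets the concurrency limit; a burst lengthens the queue instead of exhausting the server, and fast acknowledgments keep senders from timing out and retrying.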

Common Mistakes to Avoid

One frequent error is failing to implement proper error handling and retry logic: if the receiving server is down and the source does not attempt redelivery, the event is lost and data synchronization silently breaks. Another common mistake is using webhooks for large-scale data transfers. Webhooks are designed for lightweight notifications, and sending massive datasets in a single POST request can cause timeouts, rejected requests (such as 413 Payload Too Large), or 5xx errors. Finally, many organizations neglect to secure their webhook endpoints, leaving them vulnerable to ‘replay attacks’, in which malicious actors resend captured payloads to manipulate system state. A valid signature alone does not stop a replay, because the captured request is genuinely signed; the receiver must also check a signed timestamp or a unique event ID.
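On the sending side, retry logic typically means exponential backoff with jitter. In this sketch `send` is a hypothetical callable that performs one HTTP attempt and returns the status code. The attempt count and delays are illustrative assumptions, and `sleep` is injectable so the logic can be tested without waiting.

```python
import random
import time
from typing import Callable, Optional


def deliver_with_retries(
    send: Callable[[], int],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Retry a webhook delivery with exponential backoff and jitter.

    Network failures are expected to surface as OSError (urllib's URLError
    and socket timeouts are both subclasses of OSError).
    """
    for attempt in range(max_attempts):
        status: Optional[int]
        try:
            status = send()
        except OSError:
            status = None  # receiver down, connection refused, or timed out
        if status is not None and 200 <= status < 300:
            return True
        if attempt < max_attempts - 1:
            # Delays of roughly 1s, 2s, 4s... scaled by random jitter so that
            # many senders do not retry in lockstep.
            sleep(base_delay * (2 ** attempt) * random.uniform(0.5, 1.0))
    return False  # exhausted: park the event in a dead-letter store for manual replay
```

This sketch retries every non-2xx status for simplicity. Many senders stop on most 4xx responses (other than 429), because those usually signal a request that will never succeed.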
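One common defense against replay attacks is to have the sender sign a timestamp together with the body and have the receiver reject requests outside a short tolerance window. The `timestamp.body` message format and the 300-second window below are assumptions for illustration; several providers document schemes similar in spirit, but check your sender's exact format.

```python
import hashlib
import hmac
import time
from typing import Optional

TOLERANCE_SECONDS = 300  # assumed 5-minute window; tune to your sender's documentation


def sign(secret: bytes, timestamp: int, body: bytes) -> str:
    """Sender side: sign the timestamp together with the raw body."""
    message = str(timestamp).encode("ascii") + b"." + body
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_fresh(secret: bytes, timestamp: int, body: bytes, signature: str,
                 now: Optional[float] = None) -> bool:
    """Receiver side: reject stale timestamps, then check the signature in constant time."""
    current = time.time() if now is None else now
    if abs(current - timestamp) > TOLERANCE_SECONDS:
        return False  # outside the window: likely a replay of a captured request
    # Because the timestamp is signed, an attacker cannot refresh it without the secret.
    return hmac.compare_digest(sign(secret, timestamp, body), signature)
```

Within the tolerance window a captured request could still be replayed, so pairing this check with event-ID deduplication closes the remaining gap.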

Conclusion

Webhook integration is a foundational mechanism for real-time data flow, supporting the accuracy and authority required for high-performance AI search and agentic workflows.