Executive Summary

- Centralizes heterogeneous data streams into a unified, schema-optimized environment for advanced analytical processing.

- Enables the decoupling of operational databases from analytical workloads, preserving performance during intensive AI-driven data extraction.

- Provides the foundational infrastructure for Retrieval-Augmented Generation (RAG) and large-scale programmatic SEO initiatives.

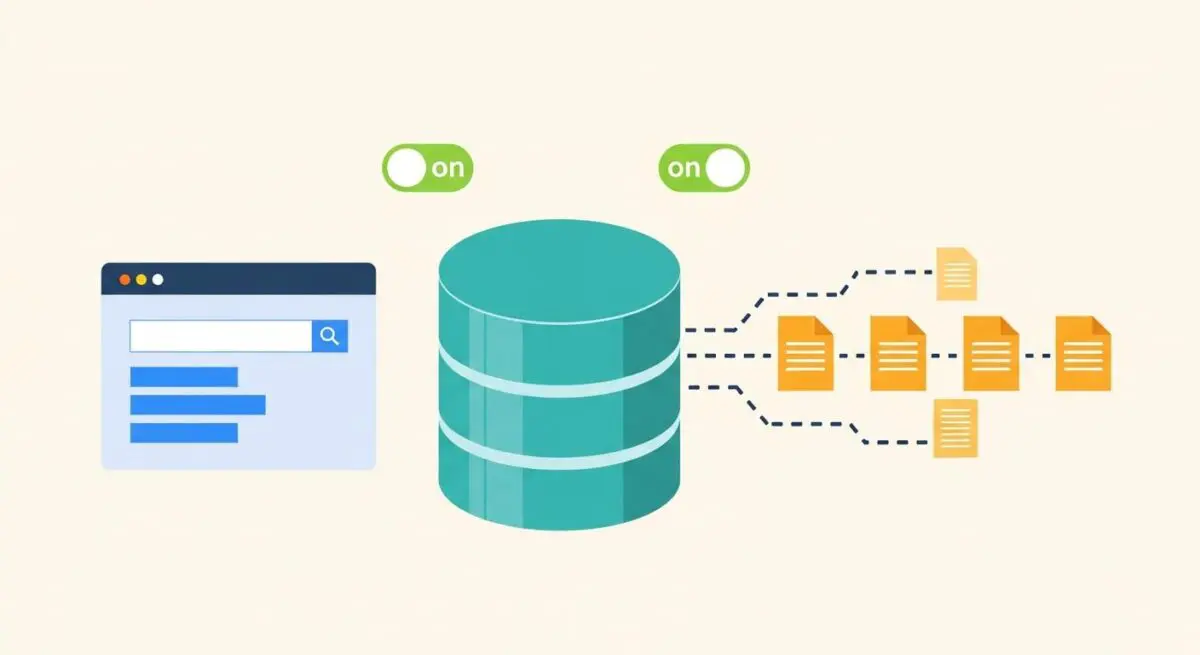

What is Data Warehousing?

Data warehousing is the process of collecting, aggregating, and managing data from multiple disparate sources into a single, centralized repository to support business intelligence (BI) and analytical activities. Unlike operational databases that are optimized for transaction processing (OLTP), a data warehouse is architected for complex querying and analysis (OLAP). It serves as the definitive “source of truth” for an organization, ensuring that data is cleaned, transformed, and categorized before it is utilized for high-level decision-making or automated processing.

In the context of AI and automation, a data warehouse acts as the long-term memory for autonomous agents and data pipelines. It allows for the storage of historical API responses, user interactions, and performance metrics that are too voluminous for standard application databases. By utilizing structured schemas, such as Star or Snowflake schemas, data warehousing facilitates rapid data retrieval, which is essential for training machine learning models and executing large-scale programmatic SEO strategies.

The Real-World Analogy

Imagine a global logistics company that operates thousands of delivery vans, hundreds of warehouses, and millions of individual packages. Each van has a small logbook (an operational database) that tracks its current route and fuel level. However, if the CEO wants to analyze the efficiency of the entire global network over the last five years, they cannot go through every single van’s logbook one by one. Instead, the company sends a copy of every logbook entry to a massive, central high-tech command center (the Data Warehouse). In this command center, all information is standardized, indexed, and filed so that a single analyst can ask a complex question—like “Which routes were most fuel-efficient during winter storms?”—and get an answer in seconds.

Why is Data Warehousing Critical for Autonomous Workflows and AI Content Ops?

Modern AI Content Ops and autonomous workflows rely heavily on stateless automation, where each execution (like a Lambda function or a Webhook) has no memory of previous runs. Data warehousing provides the necessary stateful layer, allowing these workflows to reference historical data to make informed decisions. For instance, an AI agent generating content can query a data warehouse to ensure it does not duplicate topics covered in the previous quarter or to pull specific performance data from past campaigns to optimize current output.

Furthermore, data warehousing mitigates the limitations of API rate limits and data fragmentation. By extracting data from various SaaS tools via ETL (Extract, Transform, Load) pipelines and storing it in a warehouse, organizations can perform cross-platform analysis without constantly hitting external APIs. This is vital for programmatic SEO, where thousands of pages are generated based on complex datasets; having that data pre-processed and localized in a warehouse ensures the generation engine operates at maximum velocity and reliability.

Best Practices & Implementation

- Adopt ELT over ETL for Modern Pipelines: Utilize “Extract, Load, Transform” (ELT) to move raw data into powerful cloud warehouses like BigQuery or Snowflake first, then use the warehouse’s native compute power to transform the data.

- Implement Strict Data Normalization: Ensure that data coming from different APIs (e.g., Google Search Console and HubSpot) is mapped to a consistent format to prevent logic errors in automated workflows.

- Partition and Cluster Data: Organize your tables by date or category to reduce query costs and increase the speed of data retrieval for real-time AI applications.

- Maintain a Data Dictionary: Document every field and table within the warehouse to ensure that developers and AI agents can accurately interpret the schema during automated query generation.

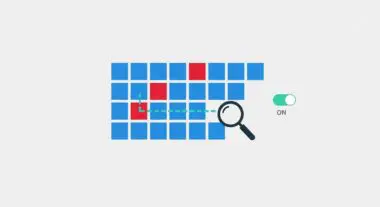

Common Mistakes to Avoid

One frequent error is treating a data warehouse like a Data Lake; failing to enforce a schema leads to a “data swamp” where information is unsearchable and useless for automation. Another mistake is neglecting data latency; if your warehouse only updates once every 24 hours, your autonomous workflows may be operating on stale information, leading to inaccurate AI-generated insights. Finally, many brands fail to monitor query costs, resulting in significant financial overhead when inefficiently written automated scripts perform full-table scans on massive datasets.

Conclusion

Data warehousing is the architectural backbone of scalable AI operations, providing the structured, historical context required for sophisticated autonomous decision-making. By centralizing and optimizing data, organizations can move beyond simple task automation toward complex, data-driven intelligence.