Executive Summary

- ETL facilitates the systematic movement of raw data from disparate sources into a centralized repository, ensuring data integrity through rigorous transformation protocols.

- In AI Content Ops, ETL processes are essential for normalizing unstructured data into machine-readable formats for LLM fine-tuning and Retrieval-Augmented Generation (RAG) architectures.

- Modern ETL pipelines leverage serverless functions and webhooks to automate real-time data synchronization across diverse marketing and SEO tech stacks.

What is ETL?

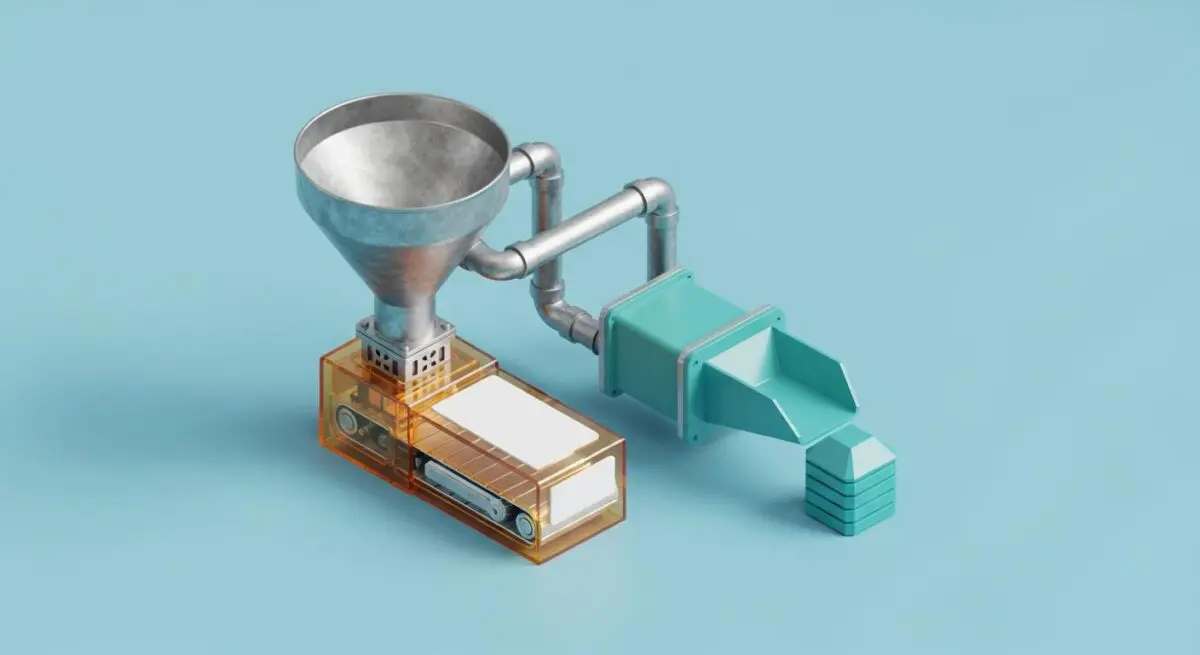

ETL stands for Extract, Transform, and Load. It is a foundational data integration process used to consolidate data from multiple sources into a single, consistent data store, such as a data warehouse or data lake. In the context of AI Automations, ETL serves as the plumbing that ensures high-quality, structured data is available for analysis, reporting, and machine learning model consumption. The process begins with Extraction, where data is retrieved from various sources like SQL databases, CRM platforms, or external APIs. This raw data is often heterogeneous and unstructured.

The second phase, Transformation, is the most critical for automation. During this stage, raw data is cleaned, de-duplicated, and converted into a standardized format. This involves applying business logic, filtering noise, and mapping fields to ensure the output meets the schema requirements of the target system. Finally, the Load phase involves importing the processed data into the destination system. Modern ETL has evolved into ELT (Extract, Load, Transform) in cloud-native environments, but the core objective remains the same: ensuring data readiness for autonomous decision-making engines.

The Real-World Analogy

Imagine a high-end commercial kitchen preparing a signature dish. The Extraction phase is akin to sourcing raw ingredients from various suppliers—farmers, butchers, and fishmongers. These ingredients arrive in different states: unwashed vegetables, whole cuts of meat, and bulk spices. The Transformation phase is the prep work: chefs wash the vegetables, trim the fat, and precisely measure the spices according to a strict recipe. Without this step, the ingredients are unusable for the final dish. Finally, the Load phase is the plating of the meal, where the prepared ingredients are combined and presented to the customer. Just as a chef cannot serve raw, unwashed ingredients, an AI cannot function on raw, uncleaned data.

Why is ETL Critical for Autonomous Workflows and AI Content Ops?

For AI Content Ops and programmatic SEO, ETL is the engine behind data-driven content generation. Autonomous workflows rely on stateless automation, where each step in a process must receive perfectly formatted data to execute correctly. ETL pipelines ensure that JSON payloads sent via webhooks are stripped of unnecessary bloat, reducing latency and API costs. In the era of Generative Engine Optimization (GEO), ETL is used to feed clean, structured data into RAG systems, allowing AI models to generate highly accurate content based on proprietary datasets rather than hallucinating from general knowledge.

Best Practices & Implementation

- Implement Idempotency: Ensure that running the same ETL process multiple times with the same input does not result in duplicate records or corrupted data in the target system.

- Schema Validation: Use automated checks to verify that incoming data matches the expected format before the transformation phase to prevent downstream pipeline failures.

- Incremental Loading: Instead of processing the entire dataset every time, only extract and load data that has changed since the last execution to optimize server resources and reduce API overhead.

- Error Logging and Alerting: Build robust monitoring into the transformation layer to immediately notify engineers when data types mismatch or API connections fail.

- Data Normalization: Standardize all text data (e.g., UTF-8 encoding) and date formats (ISO 8601) to ensure compatibility across different AI tools and search engine crawlers.

Common Mistakes to Avoid

One frequent error is hardcoding transformation logic within the automation script, which makes the pipeline brittle and difficult to scale as data sources evolve. Another common mistake is neglecting data quality at the source; ETL cannot fix fundamentally broken data, leading to the “garbage in, garbage out” scenario in AI outputs. Finally, many brands fail to account for API rate limits during the extraction phase, leading to incomplete data transfers and broken autonomous workflows.

Conclusion

ETL is the essential framework for converting raw data into actionable intelligence, serving as the backbone for scalable AI content operations and robust automation architectures.