Executive Summary

- Throughput measures the actual volume of data successfully transferred over a network per unit of time, distinct from theoretical bandwidth.

- High throughput is essential for optimizing the Largest Contentful Paint (LCP) metric by accelerating the delivery of heavy media assets.

- Performance engineering must account for factors like latency, packet loss, and protocol overhead, any of which can bottleneck throughput despite high bandwidth.

What is Throughput?

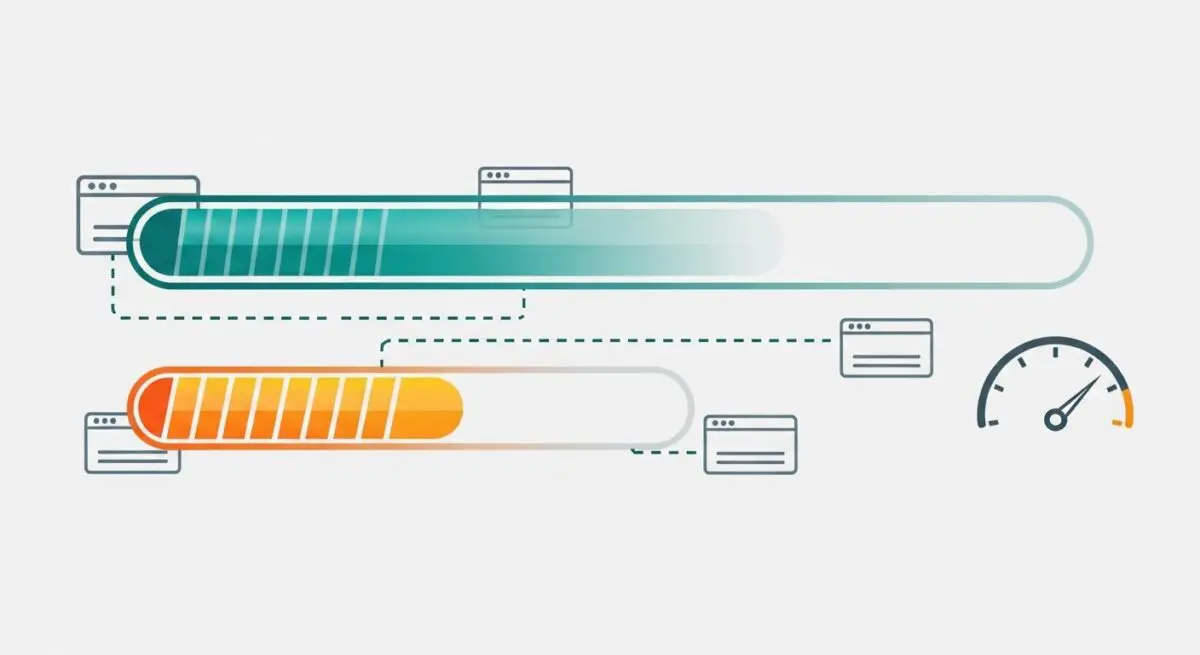

In the context of website performance and network engineering, throughput is the actual rate at which data is successfully transferred from a source (such as a web server or CDN) to a destination (the user’s browser) over a specific period. While often confused with bandwidth, throughput represents the real-world performance of a connection, accounting for overhead, latency, and packet loss. It is typically measured in bits per second (bps), megabits per second (Mbps), or gigabits per second (Gbps).
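As a rough illustration of the definition above, throughput can be computed from the number of bytes that actually arrived and the time the transfer took. The function name and the sample figures below are hypothetical, chosen only to show the unit conversion; this is a sketch, not a measurement tool.

```python
def throughput_mbps(bytes_transferred: int, elapsed_seconds: float) -> float:
    """Return observed throughput in megabits per second (1 Mbps = 1,000,000 bits/s)."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    bits = bytes_transferred * 8  # network rates are quoted in bits, not bytes
    return bits / elapsed_seconds / 1_000_000


# Hypothetical measurement: a 2,500,000-byte download that took 1.6 seconds.
observed = throughput_mbps(2_500_000, 1.6)
print(f"{observed:.1f} Mbps")  # prints "12.5 Mbps"
```

Note that the result says nothing about the link's bandwidth: the same download over a 100 Mbps line would still report 12.5 Mbps if that is all the path delivered.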

From a technical perspective, throughput is influenced by the entire network stack, including the physical medium, the efficiency of the transport layer protocols (like TCP or QUIC), and the processing power of the network nodes. In web performance architecture, we analyze throughput to understand how effectively a server can push resources to a client. A high-bandwidth connection may still suffer from low throughput if the server is overloaded or if the network path is congested, leading to delayed rendering and poor user experiences.

The Real-World Analogy

To understand throughput, imagine a large water pipe connecting a reservoir to a house. The bandwidth is the diameter of the pipe—it represents the maximum amount of water that could theoretically flow through at once. However, the throughput is the actual amount of water that reaches the faucet every second. If there is debris in the pipe (network congestion), leaks (packet loss), or if the pump is weak (server limitations), the throughput will be significantly lower than the pipe’s theoretical capacity. Even with a massive pipe, you only care about how fast the water actually fills your glass.

Why is Throughput Critical for Website Performance and Speed Engineering?

Throughput is a primary driver of the Largest Contentful Paint (LCP), one of Google’s Core Web Vitals. For modern websites that rely on high-resolution images, video backgrounds, and large JavaScript bundles, the speed at which these assets are delivered is dictated by the available throughput. If throughput is constrained, the browser must wait longer to receive the final bits of a resource, delaying the rendering process and increasing the perceived load time.
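To make the relationship concrete, the sketch below estimates how long a single LCP asset takes to download at different sustained throughputs. The 1.5 MB image size and the throughput values are assumptions for illustration, and the model deliberately ignores latency, connection setup, and TCP slow start, so real transfers will take longer.

```python
def transfer_time_seconds(size_bytes: int, throughput_mbps: float) -> float:
    """Time to move size_bytes at a steady throughput (no latency or ramp-up modeled)."""
    if throughput_mbps <= 0:
        raise ValueError("throughput_mbps must be positive")
    return (size_bytes * 8) / (throughput_mbps * 1_000_000)


HERO_IMAGE_BYTES = 1_500_000  # hypothetical 1.5 MB hero image acting as the LCP element

for mbps in (2, 10, 50):
    seconds = transfer_time_seconds(HERO_IMAGE_BYTES, mbps)
    print(f"{mbps:>3} Mbps -> {seconds:.2f} s")
# prints:
#   2 Mbps -> 6.00 s
#  10 Mbps -> 1.20 s
#  50 Mbps -> 0.24 s
```

Halving the asset size has the same effect on this estimate as doubling the throughput, which is why payload reduction and delivery optimization are usually pursued together.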

Furthermore, throughput determines how quickly the body of a server response arrives once the first byte has been sent, and how fully multiplexed protocols like HTTP/3 can use the connection. In a high-throughput environment, the browser can download multiple resources in parallel more effectively, reducing the total time spent in the “loading” state. For enterprise-level hosting, maintaining consistent throughput helps the site remain performant even during traffic spikes, preventing the “bottleneck” effect where server resources are available but the network delivery path fails to keep up.

Best Practices & Implementation

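- Implement Modern Compression: Use Brotli or Gzip compression to reduce the total payload size. Compression does not change the throughput of the network itself, but because fewer bits are needed to deliver the same content, the effective throughput, measured in content delivered per second, increases.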

- Leverage HTTP/3 (QUIC): Transition to HTTP/3 to reduce the impact of packet loss. With HTTP/2 over TCP, a single lost packet stalls every multiplexed stream on the connection until it is retransmitted (head-of-line blocking). QUIC delivers each stream independently, so a lost packet delays only the streams whose data it carried.

- Utilize a Global Content Delivery Network (CDN): CDNs place content closer to the user at the “edge.” This reduces the number of network hops and shortens the round-trip time, which lets the TCP congestion window grow faster so the connection reaches peak throughput sooner.

- Optimize TCP Window Scaling: Ensure TCP window scaling is enabled on your servers; the option is negotiated by both endpoints during the handshake. Without it, the advertised receive window is capped at 65,535 bytes. With it, the sender can keep far more data in flight before waiting for an acknowledgment, which maximizes throughput on high-latency connections.
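The window-scaling point can be illustrated with a little arithmetic: a sender can have at most one window of data in flight per round trip, so throughput is bounded by window size divided by round-trip time. The sketch below is a simplified model with hypothetical function names and sample values (a 100 ms round trip and a 100 Mbps link), not a tuning recommendation.

```python
def max_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on throughput when at most one window is in flight per round trip."""
    return (window_bytes * 8) / (rtt_ms / 1000) / 1_000_000


def bdp_bytes(bandwidth_mbps: float, rtt_ms: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to keep the link full."""
    return bandwidth_mbps * 1_000_000 * (rtt_ms / 1000) / 8


UNSCALED_WINDOW = 65_535  # largest window expressible in TCP's 16-bit window field
RTT_MS = 100              # assumed round-trip time for this example

print(f"Unscaled window cap: {max_throughput_mbps(UNSCALED_WINDOW, RTT_MS):.2f} Mbps")
print(f"Window needed for 100 Mbps: {bdp_bytes(100, RTT_MS):,.0f} bytes")
```

Under these assumptions the unscaled window limits a single connection to roughly 5.24 Mbps regardless of link capacity, while filling a 100 Mbps link at the same round-trip time requires about 1.25 MB in flight.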

Common Mistakes to Avoid

A frequent error is equating high bandwidth with high performance. Brands often invest in expensive hosting with high bandwidth limits while ignoring high latency or poor peering, which results in low actual throughput. Another mistake is failing to optimize the Critical Rendering Path; when throughput is limited, loading non-essential heavy scripts early competes with the LCP element for the capacity that is available, leading to a poor user experience even on a nominally high-bandwidth connection.
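One way to see why bandwidth alone is misleading is the well-known Mathis approximation for loss-based TCP, which estimates steady-state throughput as (MSS / RTT) × (C / √p), where p is the packet loss rate and C is about 1.22. The sketch below applies it with assumed values (a 1,460-byte segment size, a 1 Gbps link) and caps the result at the link bandwidth. It is a simplified model of classic Reno-style congestion control and will not match newer algorithms such as CUBIC or BBR closely.

```python
import math

MATHIS_C = math.sqrt(3 / 2)  # constant from the Mathis et al. model, about 1.22


def estimated_throughput_mbps(
    link_mbps: float, rtt_ms: float, loss_rate: float, mss_bytes: int = 1460
) -> float:
    """Approximate single-connection TCP throughput, capped at the link bandwidth."""
    if not 0 < loss_rate < 1:
        raise ValueError("loss_rate must be between 0 and 1 (exclusive)")
    rtt_s = rtt_ms / 1000
    mathis_bps = (mss_bytes * 8 / rtt_s) * (MATHIS_C / math.sqrt(loss_rate))
    return min(link_mbps, mathis_bps / 1_000_000)


# Same hypothetical 1 Gbps link, different path quality.
for rtt_ms, loss in ((20, 0.0001), (80, 0.0001), (80, 0.01)):
    mbps = estimated_throughput_mbps(1000, rtt_ms, loss)
    print(f"RTT {rtt_ms:>3} ms, loss {loss:.2%}: ~{mbps:.1f} Mbps")
```

In this model, every scenario lands far below the nominal 1 Gbps, and quadrupling the round-trip time cuts the estimate to a quarter, which is the effect of high latency or poor peering described above.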

Conclusion

Throughput is the most direct measure of how efficiently data is actually delivered in web performance engineering. By maximizing throughput through protocol optimization and payload reduction, developers can speed up asset delivery and improve Core Web Vitals scores.