Executive Summary

- Algorithmic Qubit (#AQ) Benchmarking: The industry has shifted from raw qubit counts to logical qubit yield, with fault-tolerant systems like Quantinuum’s Helios and Google’s Willow achieving error rates as low as 0.000015%.

- Post-Quantum Cryptography (PQC) Mandates: The NIST FIPS 140-3 deadline of September 2026 has catalyzed a $15B migration industry, forcing a transition to ML-KEM standards across global supply chains.

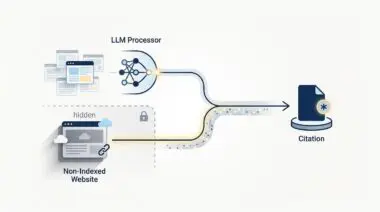

- Harvest Now, Decrypt Later (HNDL): Strategic risk management now prioritizes the mitigation of retrospective decryption threats, where legacy data intercepted today is vulnerable to future quantum-capable adversaries.

The Post-Hype Correction: Quantum Reality in 2026

The landscape of quantum computing has transitioned from speculative venture capital cycles to what analysts now define as the Fault-Tolerant Foundation Era. As of mid-2026, the conversation among C-suite executives has shifted from whether quantum computing will disrupt cybersecurity to how rapidly the transition to Post-Quantum Cryptography (PQC) can be executed. This shift is driven by a bifurcation in the market between superconducting modalities, led by IBM and Google, and alternative scaling solutions such as trapped-ion and photonic systems from IonQ and PsiQuantum.

The strategic importance of this technology lies not just in its computational speed, but in its ability to render current asymmetric encryption standards, specifically RSA and Elliptic Curve Cryptography (ECC), obsolete. For the modern enterprise, the risk is no longer a distant theoretical concern; addressing it is an operational imperative. The emergence of hybrid quantum-classical architectures has integrated Quantum Processing Units (QPUs) directly with GPU clusters, enabling a level of cryptographic pressure that legacy infrastructure was never designed to withstand.

Defining the Quantum Threat to Cryptographic Integrity

To understand the strategic risk, one must define the mechanism of disruption. Quantum computing uses the principles of superposition and entanglement to perform certain calculations that are computationally intractable for classical systems. While classical computers process bits as either zeros or ones, quantum bits, or qubits, can exist in a superposition of both states until they are measured. This allows for the execution of Shor’s Algorithm, which factors large integers and computes discrete logarithms in polynomial time, an exponential speedup over the best known classical methods. The hardness of those two problems is the foundation of RSA and ECC respectively.
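As a rough illustration of why factoring is the pressure point, the sketch below walks through the classical half of Shor's Algorithm in Python. Finding the order r of a number a modulo n is the step a quantum computer accelerates; here it is brute-forced, so it only works for tiny values of n. The function names `find_order` and `factor_from_order` are illustrative and do not come from any library.

```python
from math import gcd


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This is the step Shor's algorithm performs on quantum hardware.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a: int, n: int):
    """Try to split n using the order of a; return (p, q) or None if this a fails."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared  # a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order: pick another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1: pick another a
    p = gcd(half - 1, n)
    return p, n // p


print(factor_from_order(7, 15))  # (3, 5)
```

With a = 7 and n = 15 the order is 4, so 7² mod 15 = 4, and gcd(4 - 1, 15) = 3 yields the factor. A real RSA modulus is thousands of bits long, which is why the brute-force loop above is hopeless classically.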

In the context of cybersecurity, this creates a binary vulnerability. Any data protected by standard public-key infrastructure is susceptible to decryption once a quantum computer reaches a sufficient threshold of logical qubits. While we are currently in the era of NISQ (Noisy Intermediate-Scale Quantum) devices, the move toward fault tolerance means the window for cryptographic migration is closing faster than many legacy organizations anticipated.

Market Leadership and the Ecosystem of Resilience

The quantum ecosystem in 2026 is characterized by intense consolidation and a shift toward full-stack platforms. IonQ’s recent pivot, supported by $2.5B in strategic acquisitions including ID Quantique, demonstrates a move to own the entire vertical from quantum sensing to secure communication. Similarly, D-Wave’s acquisition of Quantum Circuits Inc. (QCI) has created a dual-platform leader capable of handling both optimization problems via annealing and general-purpose computation via gate-model systems.

Valuation drivers for these firms have matured. Investors no longer reward raw qubit counts. Instead, the industry has adopted Algorithmic Qubits (#AQ) and logical qubit yield as the primary metrics of performance. Quantinuum’s 2026 IPO, valued at over $20B, was predicated on its ability to demonstrate 48 logical qubits on the Helios system—a benchmark that signals the beginning of reliable, error-corrected computation. For the enterprise, this means the tools required to break classical encryption are moving from the laboratory to the cloud-integrated data center.

Infrastructure Pivot: Hybrid Architectures and Agentic Orchestration

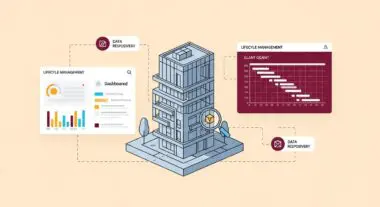

The technical response to the quantum threat is the implementation of Quantum-Centric Supercomputing. IBM’s Kookaburra architecture, featuring over 4,000 qubits, utilizes sub-microsecond middleware to orchestrate workloads between classical and quantum nodes. This hybrid approach is essential for the deployment of PQC-safe AI agents. These autonomous systems, built on stacks like DuploCloud or IBM Watsonx, now include a Quantum-Safe Orchestration Layer.

These agents utilize the Model Context Protocol (MCP) to negotiate encrypted handshakes between edge nodes and quantum-resistant cloud kernels. By automating the re-keying process using NIST-approved algorithms like ML-KEM (FIPS 203), organizations have reduced Security Operations Center (SOC) breakout response times from 30 minutes to under 5 minutes. This level of automation is not merely an efficiency gain; it is a requirement for defending against quantum-accelerated adversarial attacks.

The transition to quantum-resistant infrastructure is akin to replacing the foundation of a skyscraper while the building is still occupied; the complexity lies not in the new materials, but in the seamless transfer of structural integrity without a single second of collapse.

The Economic Friction of Migration

Despite the technological advancements, an execution gap persists. While 62% of enterprises report hitting the limits of classical optimization, only 13% have successfully transitioned quantum pilot projects into full production. The primary bottleneck is a critical shortage of Quantum-Classical Systems Engineers—specialists who understand both the physics of QPU-resident algorithms and the rigors of PQC migration.

Furthermore, the Harvest Now, Decrypt Later (HNDL) risk remains the most significant strategic blind spot. Adversaries are currently intercepting and storing encrypted traffic with the intent of decrypting it once fault-tolerant quantum computers are commercially available. For industries with long-tail data sensitivity, such as healthcare, defense, and national infrastructure, the breach has effectively already occurred if they have not yet migrated to PQC. The cost of this migration is substantial; US Federal estimates suggest a $7.1B expenditure through 2035, with private sector firms allocating 2–5% of their total IT security budgets to address this transition.
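One common way to reason about HNDL exposure is Mosca's inequality, which is not cited in the analysis above but captures the same logic: data is at risk when its required confidentiality lifetime plus the time needed to migrate exceeds the time until a cryptographically relevant quantum computer exists. The Python sketch below encodes that check. The function names and the sample figures are illustrative assumptions, not forecasts.

```python
def hndl_exposed(shelf_life_years: float, migration_years: float,
                 years_to_crqc: float) -> bool:
    """Mosca's inequality: exposed when shelf life + migration time > time to a CRQC."""
    return shelf_life_years + migration_years > years_to_crqc


def exposure_window(shelf_life_years: float, migration_years: float,
                    years_to_crqc: float) -> float:
    """Years during which still-sensitive data could be decrypted (0.0 if none)."""
    gap = float(shelf_life_years + migration_years - years_to_crqc)
    return max(0.0, gap)


# Hypothetical inputs: records that must stay confidential for 25 years,
# a 5-year migration program, and an assumed 10 years until a CRQC.
print(hndl_exposed(25, 5, 10))  # True
print(exposure_window(25, 5, 10))
```

The useful property of this framing is that the only variable an organization controls is migration time, which is why long-lived data sets tend to be prioritized first.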

Regulatory Catalysts and the FIPS 140-3 Mandate

The regulatory environment has reached a tipping point with the NIST FIPS 140-3 validation deadline on September 21, 2026. This mandate requires all new Federal procurements to use validated modules that incorporate Post-Quantum Cryptography, which has effectively forced the hand of the global supply chain. Any vendor providing services to the US government, or to its prime contractors, must now demonstrate quantum resilience.

Parallel mandates in the European Union require national PQC transition plans to be finalized by the end of 2026. These regulations are transforming PQC from a compliance checkbox into a competitive advantage. Financial institutions that have adopted “Verified Quantum Resilience” branding are already seeing a 9% increase in Customer Lifetime Value (LTV) among high-net-worth segments who prioritize long-term data sovereignty.

Andres’ Executive Analysis: The Quantum Moat

In my analysis of the current market trajectory, the most significant takeaway for leadership is that quantum resilience is becoming the ultimate competitive moat. We are seeing a divergence where early adopters are not just securing their data, but are also leveraging quantum-accelerated vector search to achieve 10x improvements in query resolution over traditional RAG models. This is no longer just a security play; it is an operational efficiency play that fundamentally alters the unit economics of data processing.

We advise our clients to view the NIST FIPS 140-3 deadline not as a hurdle, but as a catalyst for a broader infrastructure overhaul. The organizations that will thrive in the next decade are those that treat cryptographic agility as core business logic. By decoupling security protocols from hardware-specific limitations and moving toward hardware-agnostic virtualization, using stacks like Xanadu’s PennyLane, you ensure that your enterprise remains resilient regardless of which quantum modality eventually dominates the market. The goal is to build a system that is as fluid as the technology it seeks to harness.
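In practice, cryptographic agility usually starts with an inventory of where each algorithm is used. The Python sketch below is one hedged way to triage such an inventory: it flags public-key families that Shor's Algorithm targets and recognizes the NIST post-quantum standards ML-KEM (FIPS 203), ML-DSA (FIPS 204), and SLH-DSA (FIPS 205). The `CryptoAsset` type, the labels, and the sample systems are hypothetical, and anything outside the two lookup tables is simply marked for manual review.

```python
from dataclasses import dataclass
from typing import List

# Public-key families that rely on factoring or discrete logarithms.
QUANTUM_VULNERABLE = {"RSA", "DSA", "DH", "ECDH", "ECDSA"}
# NIST post-quantum standards (FIPS 203, 204, 205).
POST_QUANTUM = {"ML-KEM", "ML-DSA", "SLH-DSA"}


@dataclass(frozen=True)
class CryptoAsset:
    system: str      # where the algorithm is deployed
    algorithm: str   # e.g. "RSA-2048", "ML-KEM-768"


def family(algorithm: str) -> str:
    """Map a name like 'RSA-2048' to its family; unknown names are returned as-is."""
    name = algorithm.upper()
    # Check longer family names first so 'ML-DSA-65' is not confused with 'DSA'.
    for known in sorted(QUANTUM_VULNERABLE | POST_QUANTUM, key=len, reverse=True):
        if name == known or name.startswith(known + "-"):
            return known
    return name


def classify(asset: CryptoAsset) -> str:
    """Return 'post-quantum', 'migrate', or 'review' for a single asset."""
    fam = family(asset.algorithm)
    if fam in POST_QUANTUM:
        return "post-quantum"
    if fam in QUANTUM_VULNERABLE:
        return "migrate"
    return "review"


def migration_backlog(inventory: List[CryptoAsset]) -> List[CryptoAsset]:
    """Assets that still depend on quantum-vulnerable public-key algorithms."""
    return [asset for asset in inventory if classify(asset) == "migrate"]


inventory = [
    CryptoAsset("vpn-gateway", "RSA-2048"),
    CryptoAsset("api-mtls", "ECDSA-P256"),
    CryptoAsset("edge-rekey", "ML-KEM-768"),
    CryptoAsset("disk-encryption", "AES-256"),
]
for asset in migration_backlog(inventory):
    print(asset.system, asset.algorithm)
```

Because the classification lives in data rather than in application code, retiring an algorithm becomes a table update instead of a rewrite, which is the practical meaning of agility here.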

Securing the Future of the Enterprise

The intersection of quantum computing and cybersecurity represents one of the most profound shifts in the history of information technology. As we move past the hype and into the era of fault-tolerant systems, the focus must remain on strategic migration, capital allocation toward PQC, and the integration of hybrid quantum-classical workflows. The window for proactive defense is narrowing, and the cost of delay is the total compromise of legacy data assets.
