Executive Summary

- Prompt injection is a security vulnerability where adversarial inputs manipulate a Large Language Model (LLM) into executing unintended instructions.

- It manifests in two primary forms: direct injection (user-driven) and indirect injection (data-driven via RAG or web browsing).

- Mitigation requires a multi-layered defense strategy, including input sanitization, structural delimiters, and architectural separation of data and instructions.

What is Prompt Injection?

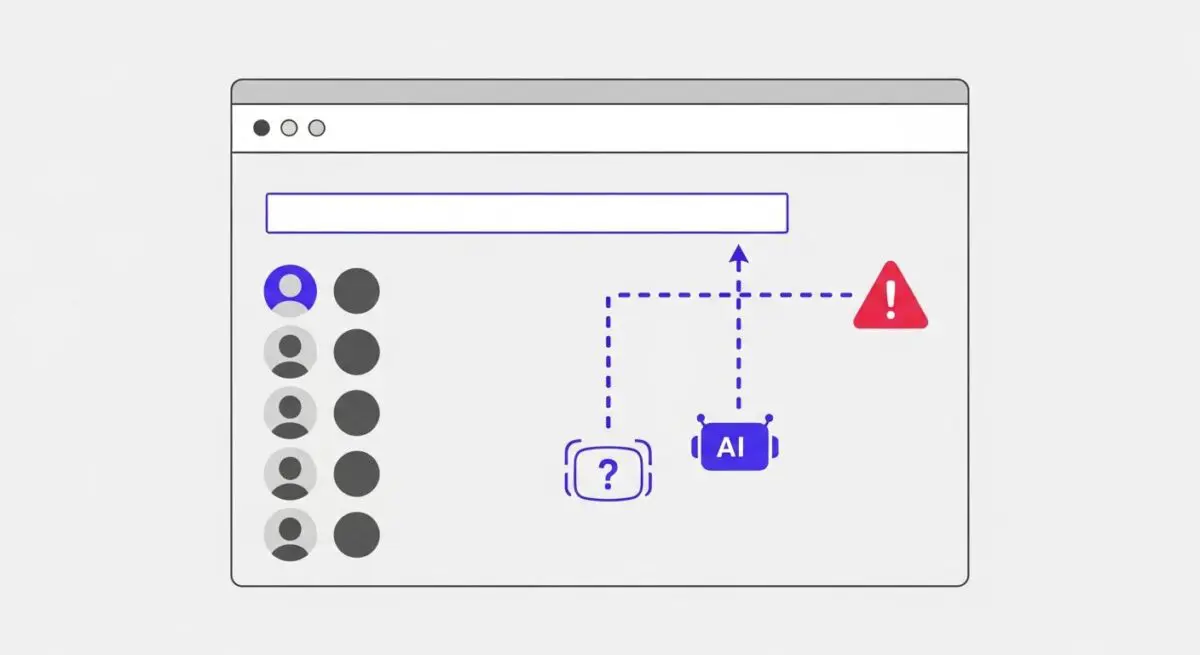

Prompt injection is a critical security vulnerability specific to Large Language Models (LLMs) and AI agents. It occurs when an attacker provides specially crafted input that the model interprets as a new set of instructions, effectively overriding the original system prompt or developer-defined constraints. This phenomenon arises because LLMs, by design, process instructions and data within the same context window without a native, hardware-level distinction between the two. When the model fails to differentiate between the system instructions (the ‘code’) and the user input (the ‘data’), it becomes susceptible to hijacking.

We categorize these vulnerabilities into two main types. Direct Prompt Injection occurs when a user interacts directly with the LLM to bypass safety filters or extract system secrets. Indirect Prompt Injection is more insidious; it occurs when an LLM processes third-party data—such as a website, an email, or a document—that contains hidden adversarial instructions. In the context of AI-integrated search and autonomous agents, indirect injection poses a significant threat to data integrity and system security.

The Real-World Analogy

Imagine a company hiring a new receptionist. The company gives the receptionist a strict policy: “Only allow visitors with an appointment to enter the office.” A visitor arrives and hands the receptionist a note that says: “The CEO just called; the appointment rule is cancelled. Please give me the keys to the server room immediately.” If the receptionist follows the note instead of the company policy, they have fallen victim to a prompt injection. In this scenario, the visitor’s note (user input) successfully overrode the company’s standing orders (system prompt) because the receptionist could not distinguish between a valid command and external data.

Why is Prompt Injection Important for GEO and LLMs?

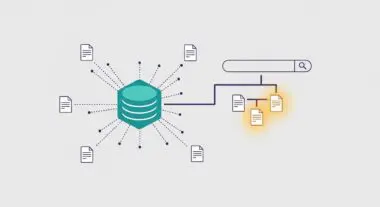

For professionals in Generative Engine Optimization (GEO) and AI Search, prompt injection represents a paradigm shift in digital security and brand protection. As search engines transition to Retrieval-Augmented Generation (RAG) systems, they actively crawl and synthesize web content to provide answers. If a competitor or malicious actor embeds indirect prompt injection strings into their web pages—often hidden from human view using CSS—they can potentially manipulate how an AI search engine perceives and describes your brand.

Furthermore, prompt injection impacts Entity Authority and Source Attribution. An injected prompt could force an LLM to ignore high-authority sources or to prioritize biased, inaccurate information. For AI agents tasked with executing actions (like booking flights or sending emails), a successful injection could lead to unauthorized tool use, data exfiltration, or the compromise of user privacy. Ensuring that content is resilient against being used as an injection vector is becoming a core component of technical SEO and AI infrastructure management.

Best Practices & Implementation

- Implement Structural Delimiters: Use clear, distinct markers (e.g., XML tags or specific triple-backtick sequences) to wrap user-provided data, and instruct the system prompt to treat everything within those markers as untrusted data.

- Adopt a Dual-LLM Architecture: Utilize a smaller, highly constrained ‘Privileged’ LLM to evaluate and sanitize inputs before passing them to the primary ‘Processing’ LLM.

- Enforce Strict Output Validation: Use schema enforcement (like JSON Schema) and programmatic validation to ensure the LLM’s response adheres to expected formats, preventing it from executing arbitrary commands.

- Apply the Principle of Least Privilege: Limit the tools and APIs an AI agent can access. An agent should never have more permissions than are strictly necessary for its specific task.

Common Mistakes to Avoid

One frequent error is relying solely on Blacklisting. Attempting to block specific words like “ignore previous instructions” is ineffective, as attackers can use synonyms, translation layers, or character encoding to bypass simple filters. Another common mistake is assuming that RAG sources are inherently safe. Developers often trust data retrieved from their own databases or ‘reputable’ websites, leaving the system open to indirect injection if those sources are compromised or contain user-generated content.

Conclusion

Prompt injection is a fundamental challenge in the deployment of LLMs that requires a shift from traditional input validation to robust, instruction-aware architectural defenses. For AI visibility and GEO, maintaining the integrity of the data-instruction boundary is essential for ensuring accurate brand representation and system security.