Executive Summary

- Model hallucination is a probabilistic phenomenon where Large Language Models (LLMs) generate syntactically plausible but factually incorrect or nonsensical information.

- This behavior poses a significant risk to Generative Engine Optimization (GEO) by compromising source attribution and factual reliability in AI-generated search results.

- Technical mitigation involves implementing Retrieval-Augmented Generation (RAG), optimizing system prompts, and calibrating model temperature to improve grounding in verified data. These measures reduce hallucination; they do not eliminate it.

What is Model Hallucination?

Model hallucination, sometimes more accurately termed confabulation, refers to the phenomenon where a Large Language Model (LLM) generates output that is grammatically correct and semantically coherent but factually inaccurate or unsupported by its training data or the provided context. This occurs because LLMs are fundamentally probabilistic engines designed to predict the most likely next token in a sequence based on statistical patterns rather than querying a structured database of facts. When the model encounters a prompt for which it lacks sufficient data, or when its learned weights favor linguistic fluency over factual precision, it constructs a response that sounds authoritative but is partly or wholly fabricated.
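To make the next-token mechanism concrete, here is a minimal Python sketch. The candidate tokens and their scores are invented for illustration and do not come from any real model. The point is only that a softmax always yields a full probability distribution, so some token is always chosen, even when no candidate is well supported.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert raw scores into a probability distribution over tokens."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for completing "The company was founded in ..."
candidates = ["1998", "2001", "2004", "unknown"]
logits = [2.0, 1.8, 1.7, 0.5]

probs = softmax(logits)                          # temperature 1.0
best = candidates[probs.index(max(probs))]       # what a greedy decoder emits
sharper = softmax(logits, temperature=0.2)       # same scores, lower temperature
```

With these made-up scores the top candidate holds only about 36% of the probability mass at temperature 1.0, yet it is still the one a greedy decoder would emit. Lowering the temperature sharpens the distribution but does not make the top candidate any more likely to be true.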

From a technical standpoint, hallucinations are often categorized into two types: intrinsic hallucinations, where the output contradicts the provided source material, and extrinsic hallucinations, where the output includes information that cannot be verified from the source or training data. In the context of AI search and Generative Engine Optimization (GEO), these errors can lead to the dissemination of misinformation, the creation of non-existent citations, and the degradation of the user experience within generative environments like Perplexity, Gemini, or ChatGPT.
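The two categories can be illustrated with a deliberately simplified Python sketch. It assumes claims and source facts have already been reduced to attribute/value pairs, which real systems cannot take for granted; the function name and the sample facts are hypothetical.

```python
def classify_claim(attribute, value, source_facts):
    """Label one generated claim against the facts stated in the source.

    source_facts maps an attribute name to the value the source gives for it.
    """
    if attribute not in source_facts:
        return "extrinsic"   # the source says nothing about this attribute
    if source_facts[attribute] != value:
        return "intrinsic"   # the source states something different
    return "supported"


# Hypothetical facts extracted from a product page
source_facts = {"price": "$49", "warranty": "2 years"}

contradicted = classify_claim("price", "$59", source_facts)
unverifiable = classify_claim("battery life", "12 hours", source_facts)
grounded = classify_claim("warranty", "2 years", source_facts)
```

Here a wrong price is an intrinsic hallucination because the source contradicts it, while a battery-life figure is extrinsic because the source offers nothing to check it against.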

The Real-World Analogy

Imagine an overconfident, highly articulate witness in a courtroom who, when asked a specific question they do not know the answer to, refuses to admit ignorance. Instead, they use their knowledge of how legal testimony usually sounds to improvise a detailed, persuasive story. They use the correct legal terminology and speak with absolute conviction, leading the jury to believe them, even though every detail of their story is a fiction constructed on the fly to fill the silence. The witness isn’t lying with intent; they are simply performing the role of a witness so well that they prioritize the form of the answer over the truth of the content.

Why is Model Hallucination Important for GEO and LLMs?

In the era of Generative Engine Optimization (GEO), model hallucination directly affects Entity Authority and Source Attribution. If an LLM hallucinates details about a brand, such as non-existent product features, incorrect pricing, or false reviews, it damages the brand’s digital reputation and can hurt conversion rates. AI search engines that rely on Retrieval-Augmented Generation (RAG) are not immune: hallucinations can still occur if the model fails to correctly synthesize the retrieved snippets, leading to “source drift,” where the AI attributes a fact to a website that does not actually contain that information.
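One rough way to spot source drift is to check whether the words of an attributed claim actually occur in the cited page. The Python sketch below uses plain word overlap; the function names, the 0.8 threshold, and the sample text are assumptions chosen for illustration, not an established metric.

```python
import re


def tokens(text):
    """Lowercase the text and return its distinct alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def support_score(claim, source_text):
    """Fraction of the claim's distinct words that also occur in the source."""
    claim_tokens = tokens(claim)
    if not claim_tokens:
        return 0.0
    return len(claim_tokens & tokens(source_text)) / len(claim_tokens)


def is_attribution_supported(claim, source_text, threshold=0.8):
    return support_score(claim, source_text) >= threshold


# Hypothetical page text and two claims an AI summary attributes to it
page = "The Acme X1 ships with a two year warranty and costs 49 dollars."
ok = is_attribution_supported("The Acme X1 costs 49 dollars", page)
drifted = is_attribution_supported("The Acme X1 includes free lifetime repairs", page)
```

Word overlap is a crude signal: it misses paraphrases and cannot detect negation or swapped numbers, so it is better suited to flagging candidates for human review than to making a final judgment.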

Furthermore, hallucinations are hard to catch with automated quality signals. Perplexity and burstiness, which some AI-text detectors use, measure how statistically predictable and how varied a text is, not whether it is true, so a fluent hallucination can score well on both. For SEO professionals and developers, minimizing the likelihood of an LLM hallucinating about their content is essential for maintaining visibility in AI-driven summaries. Ensuring that data is structured, verifiable, and easily accessible to crawlers leaves fewer gaps for the model to fill by inference, thereby decreasing the probability of a confabulated output.
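As one example of structured, verifiable data, the sketch below builds Schema.org `Product` markup as JSON-LD in Python. The product name, description, and price are placeholders, and the helper function is hypothetical; only the Schema.org types and property names are real.

```python
import json


def product_jsonld(name, description, price, currency):
    """Build a Schema.org Product description with a single Offer."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
        },
    }


# Placeholder values for illustration only
markup = json.dumps(
    product_jsonld("Acme X1", "A hypothetical example product.", "49.00", "USD"),
    indent=2,
)

# JSON-LD is embedded in a page inside a script tag of this type
script_tag = f'<script type="application/ld+json">\n{markup}\n</script>'
```

Stating the price as an explicit property, rather than leaving it in free-form copy, gives a crawler one unambiguous value to extract.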

Best Practices & Implementation

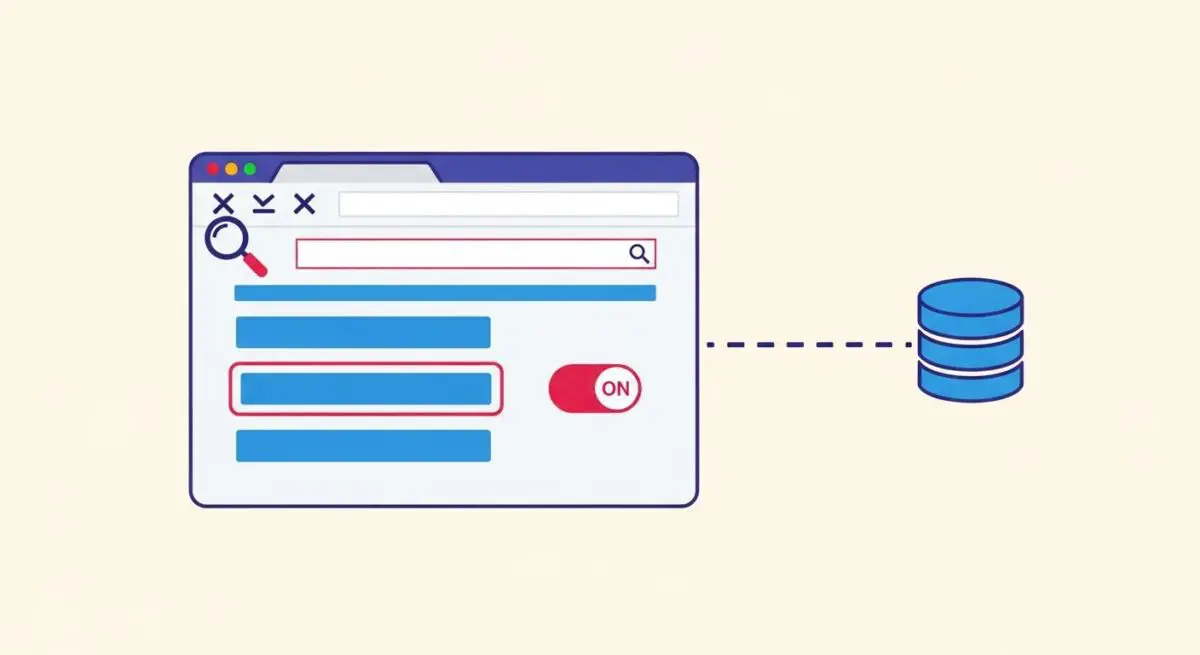

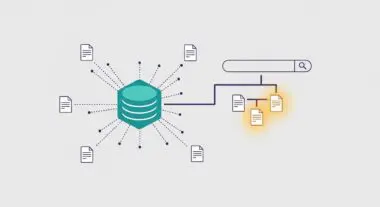

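- Implement Retrieval-Augmented Generation (RAG): Ground the LLM by supplying specific, verified context from an external knowledge base before the generation phase, and instruct the model to answer from and cite the provided documents. Retrieval narrows the room for fabrication, but the model can still misread or ignore the context.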

- Optimize Temperature Settings: Lower the model’s temperature parameter (e.g., to 0.1 or 0.2) for tasks requiring high factual accuracy. This reduces randomness in sampling and favors the most probable tokens, although the most probable token is not guaranteed to be the correct one.

- Utilize Chain-of-Thought (CoT) Prompting: Structure prompts to require the model to explain its reasoning step-by-step, which can reduce logical leaps on multi-step tasks. The stated reasoning can itself contain errors, so it should be checked rather than trusted.

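- Incorporate Self-Consistency Checks: Sample several responses to the same prompt and compare them; answers that change from run to run are more likely to be confabulated. A secondary “judge” model can additionally be used to verify the consistency and factual alignment of the outputs.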

- Add Structured Data Markup: Use Schema.org vocabulary and well-organized HTML so that when an AI agent crawls your site, the relationship between entities is unambiguous.
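The self-consistency check from the list above can be sketched in a few lines of Python. This version uses a simple majority vote instead of a judge model, and `generate` is a hypothetical callable standing in for whatever LLM client is in use; the sample count and agreement threshold are arbitrary assumptions.

```python
from collections import Counter


def self_consistency(generate, prompt, n_samples=5, min_agreement=0.6):
    """Sample the same prompt several times and keep the majority answer.

    `generate` is a hypothetical callable (prompt -> str).
    Returns (answer, agreement); answer is None when agreement is too low.
    """
    answers = [generate(prompt).strip().lower() for _ in range(n_samples)]
    answer, count = Counter(answers).most_common(1)[0]
    agreement = count / n_samples
    if agreement < min_agreement:
        return None, agreement  # too much disagreement to trust any answer
    return answer, agreement


# Stub generator replaying canned outputs so the sketch runs without a model
canned = iter(["1998", "1998", "2001", "1998", "1998"])


def fake_generate(prompt):
    return next(canned)


answer, agreement = self_consistency(fake_generate, "When was Acme founded?")
```

Exact string matching only works for short, normalized answers such as dates or numbers; longer free-text answers would need a semantic comparison, which is where a judge model could come in. The samples also need a non-zero temperature, otherwise every run tends to return the same text and the vote is meaningless.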

Common Mistakes to Avoid

A frequent error is treating an LLM as a traditional search engine or a database; users often expect it to have real-time factual recall without providing a retrieval mechanism. Another mistake is using overly creative or ambiguous system prompts, which increases the model’s “creative freedom” and, by extension, its propensity to hallucinate. Finally, many brands fail to monitor their “AI footprint,” neglecting to check how generative engines are summarizing their data, which allows hallucinations to persist and spread across the AI ecosystem.
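To show what supplying a retrieval mechanism can look like, here is a minimal Python sketch of retrieval plus grounded prompt assembly. It ranks documents by shared words, which is far simpler than the embedding search production RAG systems typically use; the function names, documents, and prompt wording are all illustrative assumptions.

```python
import re


def tokens(text):
    """Lowercase the text and return its distinct alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=2):
    """Rank documents by how many query words they share; keep the best top_k."""
    query_tokens = tokens(query)
    scored = [(len(query_tokens & tokens(doc)), doc) for doc in documents]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in ranked[:top_k] if score > 0]


def build_grounded_prompt(query, documents):
    """Assemble a prompt that restricts the model to the retrieved passages."""
    passages = retrieve(query, documents)
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the numbered passages below and cite them by number. "
        "If the passages do not contain the answer, say that you do not know.\n\n"
        f"Passages:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical knowledge base
docs = [
    "The Acme X1 costs 49 dollars.",
    "Acme was founded in 1998.",
    "Our office is closed on public holidays.",
]
prompt = build_grounded_prompt("How much does the Acme X1 cost?", docs)
```

The explicit instruction to admit when the passages lack the answer gives the model a sanctioned alternative to improvising, though it remains an instruction the model may not always follow.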

Conclusion

Model hallucination is an inherent byproduct of the probabilistic nature of generative AI, necessitating rigorous technical safeguards like RAG and precise prompt engineering to ensure factual integrity in AI search results.