Executive Summary

- Systematic optimization of input queries to influence LLM output accuracy and source attribution.

- Strategic use of context injection to improve brand visibility within Retrieval-Augmented Generation (RAG) workflows.

- Refinement of semantic structures to align content with the probabilistic patterns of generative search engines.

What is Prompt Engineering (for SEO)?

Prompt Engineering (for SEO) is the technical discipline of structuring information and queries to guide Large Language Models (LLMs) toward specific, accurate, and brand-aligned outputs. Unlike traditional keyword optimization, it focuses on the linguistic and semantic nuances that influence how generative engines built on models such as GPT-4, Claude, and Gemini retrieve and synthesize information. It draws on an understanding of how transformer models process their input, so that brand-specific data is more likely to be selected and used at inference time.

In the landscape of Generative Engine Optimization (GEO), prompt engineering extends beyond the user-facing chat interface. It also covers optimizing content so that when an AI search engine constructs an internal prompt to answer a user query, your data is treated as the most relevant and authoritative context. This requires a working understanding of tokenization, attention mechanisms, and how LLMs weigh different sources of information within their limited context windows.
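To make the context-window constraint concrete, here is a minimal sketch of how a generative engine might decide which retrieved passages fit into a limited token budget. It assumes a greedy relevance-per-token heuristic, hypothetical relevance scores, and a plain word count standing in for a real tokenizer. Engines do not publish their selection logic, so treat this as an illustration, not a description of any specific system.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source: str
    text: str
    relevance: float  # hypothetical retriever score in [0, 1]


def count_tokens(text: str) -> int:
    # Crude proxy: whitespace-separated words. Real engines use subword
    # tokenizers, so actual token counts will differ.
    return len(text.split())


def pack_context(passages: list[Passage], budget: int) -> list[Passage]:
    """Greedily keep passages with the highest relevance per token until the budget is used."""
    ranked = sorted(
        passages,
        key=lambda p: p.relevance / max(count_tokens(p.text), 1),
        reverse=True,
    )
    selected: list[Passage] = []
    used = 0
    for p in ranked:
        cost = count_tokens(p.text)
        if used + cost <= budget:  # skip anything that would overflow the window
            selected.append(p)
            used += cost
    return selected


passages = [
    Passage("brand-a.example", "Acme CRM supports SSO via SAML 2.0 and OIDC.", 0.80),
    Passage(
        "brand-b.example",
        "In today's fast-paced digital world, many teams wonder whether their CRM "
        "might possibly support single sign-on, and the answer is yes.",
        0.85,
    ),
]

for p in pack_context(passages, budget=20):
    print(p.source)  # prints only brand-a.example
```

Under these assumptions, the short, fact-dense passage wins the slot even though the padded passage scored slightly higher on raw relevance. This is the intuition behind optimizing information density.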

The Real-World Analogy

Imagine a master librarian who has read every book in existence but needs specific instructions to find the exact information you want. If you ask a vague question, the librarian provides a general summary. If you provide a detailed brief that specifies the tone, the sources to prioritize, and the required format, the librarian delivers a precise, high-value report. Prompt Engineering for SEO is the act of writing that detailed brief for the AI, so that your brand's books are the ones the librarian reaches for first.

Why is Prompt Engineering (for SEO) Important for GEO and LLMs?

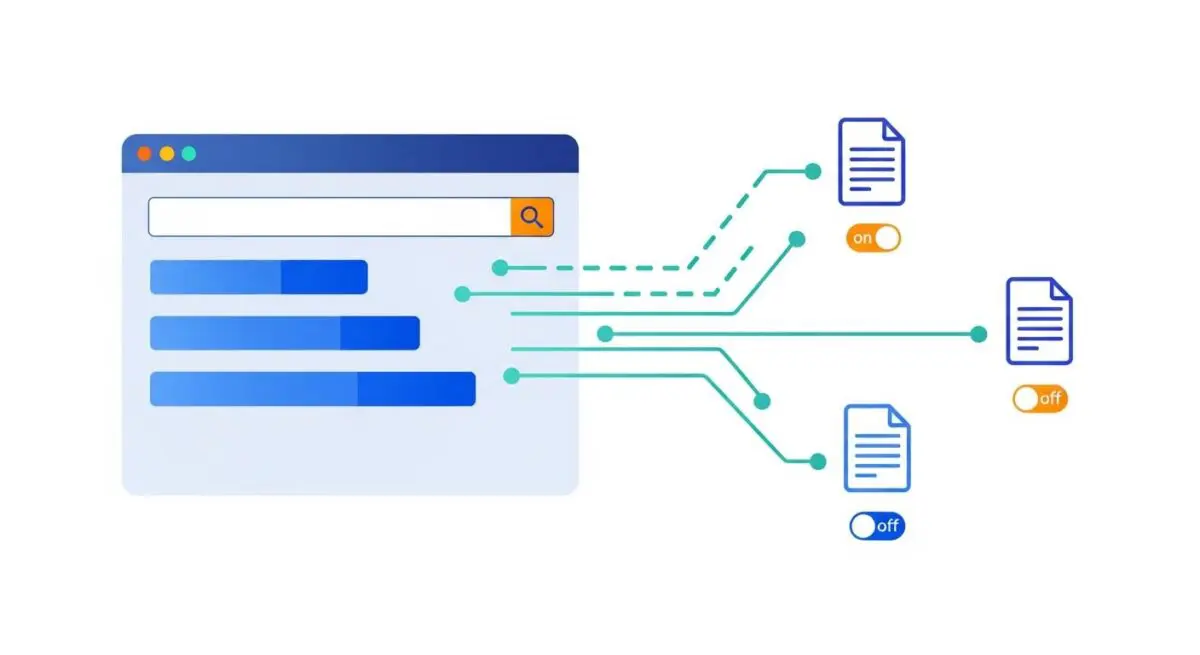

Prompt engineering is a primary lever for influencing AI visibility and source attribution. In Retrieval-Augmented Generation (RAG) systems, the AI search engine first retrieves relevant documents and then prompts the model to summarize them. By aligning content with these internal prompts, brands can increase the probability of being cited as a primary source. This can directly affect visibility on platforms like Perplexity and ChatGPT, where citation depends in part on how easily the model can parse and synthesize your content.
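The two-stage RAG flow described above can be sketched in a few lines. This is a hypothetical illustration, not any engine's actual pipeline. Retrieval here uses simple term overlap instead of vector search, and the prompt template, function names, and example URLs are invented for the example.

```python
import re


def terms(text: str) -> set[str]:
    # Lowercased word set; a crude stand-in for the embedding search real engines use.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Stage 1: rank documents by how many query terms they share and keep the top k."""
    q = terms(query)
    ranked = sorted(docs.items(), key=lambda item: len(q & terms(item[1])), reverse=True)
    return ranked[:k]


def build_synthesis_prompt(query: str, hits: list[tuple[str, str]]) -> str:
    """Stage 2: wrap the retrieved text in an internal prompt with numbered, citable sources."""
    sources = "\n".join(
        f"[{i}] {url}\n{text}" for i, (url, text) in enumerate(hits, start=1)
    )
    return (
        "Answer the question using only the sources below and cite them by number.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


docs = {
    "https://example.com/acme-sso": "Acme CRM supports single sign-on via SAML 2.0 and OIDC.",
    "https://example.org/crm-trends": "CRM adoption grew among small businesses this year.",
    "https://example.net/bread": "A simple recipe for sourdough bread.",
}
query = "Does Acme CRM support single sign-on?"
print(build_synthesis_prompt(query, retrieve(query, docs)))
```

In this sketch, only retrieved documents ever reach the synthesis prompt. Content that is never retrieved cannot be cited, however authoritative it is.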

Furthermore, prompt engineering helps reduce the risk of AI hallucinations. By providing clear, structured, and semantically rich data, we at Andres SEO Expert give LLMs a high-confidence path to the correct information. This strengthens entity authority, because the model more consistently associates your brand with accurate, verifiable facts across repeated queries.

Best Practices & Implementation

- Semantic Triplets: Structure your data using Subject-Predicate-Object formats to help LLMs easily map relationships between entities.

- Contextual Anchoring: Use highly descriptive headers and metadata that mirror the likely internal queries used by generative engines during the retrieval phase.

- Information Density Optimization: Eliminate filler words and maximize the per-token value of your content to ensure it fits efficiently within the AI’s limited context window.

- Structured Data Integration: Implement Schema.org markup (for example, in JSON-LD) to provide a machine-readable layer that acts as a pre-processed prompt for the AI.
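As a minimal sketch of how semantic triplets and structured data fit together, the Python below folds Subject-Predicate-Object facts about a fictional company into Schema.org JSON-LD. The company, facts, and function name are hypothetical. The predicates are assumed to be named directly after Schema.org properties (`url`, `founder`, `knowsAbout`); a real site would cover many more properties and validate the output.

```python
import json

# Hypothetical facts about a fictional company, as (subject, predicate, object) triplets.
TRIPLETS = [
    ("Acme Inc.", "url", "https://example.com"),
    ("Acme Inc.", "founder", "Jane Doe"),
    ("Acme Inc.", "knowsAbout", "customer relationship management"),
    ("Acme Inc.", "knowsAbout", "single sign-on"),
]


def triplets_to_jsonld(subject: str, triplets: list[tuple[str, str, str]]) -> dict:
    """Fold one subject's triplets into a Schema.org Organization object."""
    doc: dict = {"@context": "https://schema.org", "@type": "Organization", "name": subject}
    for s, predicate, obj in triplets:
        if s != subject:
            continue  # ignore facts about other entities
        if predicate == "founder":
            # founder expects a Person, so wrap the name in a typed node
            doc["founder"] = {"@type": "Person", "name": obj}
        elif predicate == "knowsAbout":
            # repeatable property: collect every value into a list
            doc.setdefault("knowsAbout", []).append(obj)
        else:
            doc[predicate] = obj
    return doc


print(json.dumps(triplets_to_jsonld("Acme Inc.", TRIPLETS), indent=2))
```

The resulting JSON would typically be embedded in the page inside a `<script type="application/ld+json">` element.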

Common Mistakes to Avoid

One frequent error is the use of ambiguous language that leads to prompt drift, where the AI loses focus on the primary intent and provides irrelevant citations. Another common mistake is ignoring system prompt constraints. AI engines are typically instructed to prioritize objectivity, so overly promotional content is often down-weighted or filtered out during the synthesis stage.

Conclusion

Prompt Engineering for SEO is a critical component of modern GEO, shifting the focus from keyword density to semantic relevance and model alignment. Mastering this discipline ensures that your brand remains the authoritative source in an AI-driven search ecosystem.