Executive Summary

- JSON payload parsing deserializes a JSON string into structured objects that application code can validate, filter, and pass on to Large Language Models (LLMs).

- Efficient parsing is critical for Retrieval-Augmented Generation (RAG) systems to ensure high-fidelity data retrieval and minimize token overhead.

- Proper implementation directly impacts Generative Engine Optimization (GEO) by improving entity clarity and source attribution accuracy.

What is JSON Payload Parsing?

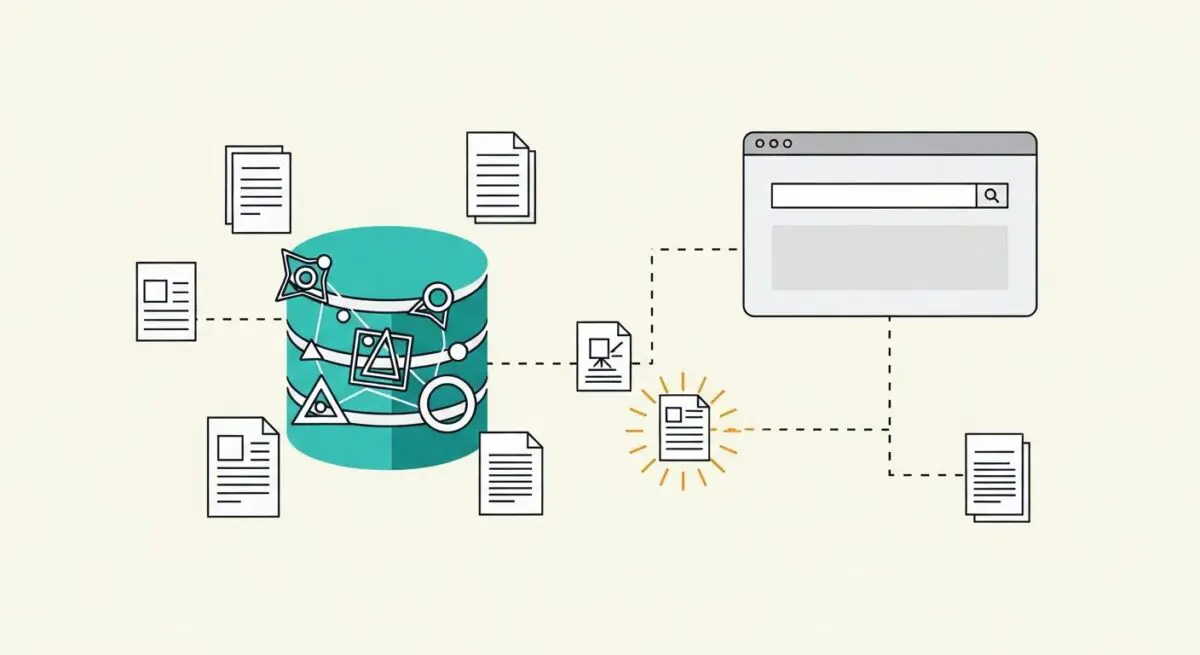

JSON (JavaScript Object Notation) payload parsing is the process of converting a serialized string into a structured in-memory format, such as an object or dictionary, that a software application can manipulate programmatically before handing the relevant content to a Large Language Model (LLM). In the context of AI infrastructure, this involves receiving a payload from an API response or database query and decomposing it into its constituent key-value pairs. This step is foundational for ensuring that the information provided to an AI agent is syntactically correct and semantically accessible.
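As a minimal sketch of the deserialization step, the snippet below uses Python's standard `json` module. The payload and its field names (`title`, `tags`, `word_count`) are made up for illustration and do not come from any particular API.

```python
import json

# Hypothetical payload, as it might arrive in an API response body.
raw_payload = '{"title": "JSON Payload Parsing", "tags": ["RAG", "GEO"], "word_count": 850}'

# json.loads converts the serialized string into native Python objects:
# JSON objects become dicts, arrays become lists, numbers become int/float.
record = json.loads(raw_payload)

# Once parsed, individual key-value pairs can be accessed directly.
print(type(record).__name__)
print(record["tags"][0])
print(record["word_count"] + 1)
```

Before parsing, `raw_payload` is only a string; after parsing, each field keeps its type, so `word_count` can be used in arithmetic without conversion.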

For AI-driven systems, parsing extends beyond simple deserialization; it involves schema validation and data normalization. By verifying that the incoming JSON adheres to a predefined structure, developers ensure that RAG (Retrieval-Augmented Generation) pipelines receive clean, predictable inputs. This reduces the risk of runtime errors and ensures that the LLM can accurately map specific data points to the user’s query intent, which is vital for maintaining high-performance AI search results.
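The validation and normalization steps described above might look roughly like the following. This is a hand-rolled sketch using only the standard library, not a full JSON Schema implementation; the `REQUIRED_FIELDS` mapping and the `parse_and_validate` helper are hypothetical names chosen for this example.

```python
import json

# Hypothetical schema: field name -> expected Python type after parsing.
REQUIRED_FIELDS = {"id": str, "title": str, "body": str}

def parse_and_validate(raw: str) -> dict:
    """Parse a JSON string and check it against REQUIRED_FIELDS."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("top-level JSON value must be an object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValueError(f"missing required field: {field}")
        if not isinstance(data[field], expected_type):
            raise ValueError(f"field {field!r} must be {expected_type.__name__}")
    # Normalization step: trim stray whitespace from string values.
    return {k: v.strip() if isinstance(v, str) else v for k, v in data.items()}

doc = parse_and_validate('{"id": "a1", "title": "  Parsing 101 ", "body": "text"}')
print(doc["title"])
```

A payload that fails the check raises `ValueError` before it reaches the retrieval pipeline, which makes the failure visible at ingestion time rather than at query time.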

The Real-World Analogy

Imagine receiving a flat-packed piece of furniture from a global retailer. The box itself is the JSON payload—a compact, standardized way to transport all the necessary components. However, you cannot use the furniture while it is still in the box. Parsing is the act of unboxing the components, identifying each screw and panel according to the manual, and laying them out systematically. Only after this parsing process is complete can the builder (the AI) efficiently assemble the pieces into a functional item. Without proper unboxing and identification, the assembly fails or results in an unstable structure.

Why is JSON Payload Parsing Important for GEO and LLMs?

In the landscape of Generative Engine Optimization (GEO), JSON payload parsing serves as the bridge between raw web data and the structured knowledge graphs used by generative AI. When answer engines such as Perplexity or Google’s AI Overviews (formerly the Search Generative Experience, SGE) crawl and ingest data, they rely on structured formats to identify entities, relationships, and attributes. If a payload contains malformed JSON or is parsed incorrectly, the AI may fail to attribute information correctly, leading to a loss in visibility or hallucinated associations.

Furthermore, efficient parsing impacts tokenization and context window management. LLMs have finite limits on the amount of data they can process at once. By parsing and filtering JSON payloads to include only the most relevant fields, developers can optimize token usage, reduce latency, and improve the precision of the model’s response. This technical hygiene is essential for maintaining high authority and ensuring that an organization’s data is accurately represented in AI-generated summaries.
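To illustrate the filtering idea, the sketch below keeps only an allowlist of fields and re-serializes the result compactly. The field names and the `RELEVANT_FIELDS` allowlist are assumptions for this example, and character count is used here only as a rough proxy for token count, since actual token usage depends on the model's tokenizer.

```python
import json

# Hypothetical allowlist of fields that carry meaning for the model.
RELEVANT_FIELDS = ("title", "summary", "author")

def prune_payload(raw: str) -> str:
    """Keep only allowlisted fields and serialize without extra whitespace."""
    data = json.loads(raw)
    kept = {key: data[key] for key in RELEVANT_FIELDS if key in data}
    # Compact separators drop the optional spaces after ',' and ':'.
    return json.dumps(kept, separators=(",", ":"), ensure_ascii=False)

raw = json.dumps({
    "title": "JSON Payload Parsing",
    "summary": "How parsing feeds RAG pipelines.",
    "author": "Example Author",
    "tracking_id": "trk-000-illustrative",
    "cache_headers": {"etag": "abc123", "max_age": 3600},
})

compact = prune_payload(raw)
print(len(raw), "->", len(compact), "characters")
```

The allowlist approach is a deliberate choice in this sketch: new fields added upstream are excluded by default until someone decides they are worth the context-window space.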

Best Practices & Implementation

- Implement Strict Schema Validation: Define the expected structure with JSON Schema and run a validator against each payload before it reaches the LLM, ensuring all required fields are present and correctly typed.

- Minimize Nesting Depth: Keep JSON structures relatively flat to reduce parsing complexity and optimize the token count required for the AI to understand the hierarchy.

- Normalize Data Keys: Ensure consistent naming conventions across all payloads to facilitate better entity recognition and mapping by AI agents.

- Sanitize and Encode: Encode payloads as UTF-8 and escape or strip control characters (U+0000 through U+001F), which JSON does not permit unescaped inside strings, to prevent parsing errors caused by special characters or hidden control codes.

- Prune Redundant Metadata: Strip away unnecessary headers or internal tracking IDs that do not contribute to the semantic value of the content before passing it to the inference engine.
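Two of the practices above, reducing nesting depth and normalizing keys, can be sketched together. The snake_case convention, the dotted-path separator, and the helper names `to_snake_case` and `flatten` are choices made for this example rather than a standard; lists are left untouched to keep the sketch short.

```python
import re

def to_snake_case(key: str) -> str:
    """Convert keys such as 'authorName' or 'Author-Name' to 'author_name'."""
    key = re.sub(r"[\s\-]+", "_", key.strip())
    # Insert an underscore at each lowercase/digit -> uppercase boundary.
    key = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "_", key)
    return key.lower()

def flatten(obj: dict, parent: str = "", sep: str = ".") -> dict:
    """Collapse nested dicts into one level, joining normalized keys with sep."""
    flat = {}
    for key, value in obj.items():
        name = to_snake_case(key)
        path = f"{parent}{sep}{name}" if parent else name
        if isinstance(value, dict):
            flat.update(flatten(value, path, sep))
        else:
            flat[path] = value
    return flat

nested = {
    "articleTitle": "Parsing",
    "author": {"fullName": "A. Writer", "Org-Name": "Example Co"},
}
print(flatten(nested))
```

Dotted paths such as `author.full_name` preserve the original hierarchy in the key itself, so the structure stays recoverable even though the object is now one level deep.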

Common Mistakes to Avoid

One frequent error is the inclusion of bloated payloads, where excessive metadata consumes the LLM’s context window, diluting the actual signal. Another common mistake is failing to handle parsing exceptions gracefully; a single missing comma makes a JSON document invalid, and if the resulting error is not caught, the entire data ingestion process can fail, leading to gaps in the AI’s knowledge base. Finally, many organizations neglect to update their parsing logic when API schemas evolve, resulting in stale or mismatched data being fed into RAG systems.
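One way to handle the missing-comma case gracefully is to catch the decode error per record, so a single bad payload is set aside instead of halting the batch. The `ingest` helper and the sample batch below are illustrative assumptions; `json.JSONDecodeError` and its `msg` and `pos` attributes are part of Python's standard `json` module.

```python
import json

def ingest(raw_records):
    """Parse each record independently; collect failures instead of raising."""
    parsed, rejected = [], []
    for index, raw in enumerate(raw_records):
        try:
            parsed.append(json.loads(raw))
        except json.JSONDecodeError as err:
            # Keep the position and message so the bad record can be repaired.
            rejected.append((index, err.msg, err.pos))
    return parsed, rejected

batch = [
    '{"id": 1, "title": "ok"}',
    '{"id": 2 "title": "missing comma"}',  # malformed on purpose
    '{"id": 3, "title": "ok"}',
]

parsed, rejected = ingest(batch)
print(len(parsed), "parsed;", len(rejected), "rejected")
```

Logging or quarantining the entries in `rejected` keeps the failure visible, which matters because silently dropped records are exactly the kind of knowledge-base gap described above.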

Conclusion

JSON payload parsing is a critical technical prerequisite for high-fidelity AI search and RAG systems, directly influencing data integrity and GEO performance.