Executive Summary

- Agentic SOC Integration: Market leaders like CrowdStrike and Palo Alto Networks are shifting toward Agentic SOC architectures to automate threat triage, though this introduces new vulnerabilities via the Model Context Protocol (MCP).

- The Latency-Security Paradox: High-fidelity safety models such as Llama Guard introduce over 520ms of latency, forcing a strategic trade-off between real-time agentic performance and robust security guardrails.

- Economic Risk Mitigation: With AI-related breach costs exceeding $10M, enterprises are prioritizing AI Security Posture Management (AI-SPM) to achieve a projected 250% ROI through hardened infrastructure.

The New Frontier of Enterprise Vulnerability

The 2026 digital ecosystem has made one thing clear: LLMs are no longer an optional upgrade. They have become the core engine driving business logic, moving from a tactical edge to a fundamental requirement for market viability. However, this rapid adoption has birthed a sophisticated class of cyber threats, chief among them being prompt injection. For the modern executive, understanding this risk is not merely a technical necessity but a fiduciary responsibility. The transition to agentic workflows—where AI systems possess the autonomy to interact with SaaS tools, databases, and customer interfaces—has expanded the attack surface beyond traditional perimeter defenses.

The market is responding with significant capital allocation. We have observed a massive consolidation wave, with incumbents like SentinelOne and F5 absorbing specialist AI safety startups in deals totaling over $1.2 billion. ServiceNow alone has committed billions to security-centric AI infrastructure. This influx of capital underscores a critical realization: the value of an AI system is inextricably linked to its integrity. Without robust defense mechanisms, the very tools designed to drive efficiency can be weaponized against the enterprise.

Defining Prompt Injection in a Business Context

At its core, prompt injection is a linguistic exploit where an attacker provides specifically crafted input that misleads an AI into ignoring its original instructions and executing unauthorized commands. Unlike traditional code injection, which targets syntax, prompt injection targets the semantic logic of the model. In a business environment, this might look like an AI customer service agent being tricked into revealing proprietary pricing data or a financial analysis bot being redirected to exfiltrate sensitive quarterly projections to an external server. It is a fundamental subversion of the intended governance framework of the machine.

The Rise of Agentic Orchestration and Indirect Risks

The industry has coalesced around the Model Context Protocol (MCP) as the standard for connecting AI agents to external tools like Slack, Jira, and email. While MCP facilitates seamless automation, it has introduced the Zero-Click Agentic Vulnerability. This occurs when an agent ingests malicious payloads hidden within trusted data streams. For example, an AI agent summarizing a thread in a project management tool might encounter a hidden instruction in a comment that triggers a data export. This is known as indirect prompt injection, and it represents a significant shift in the threat model, as the malicious actor does not need direct access to the AI interface.

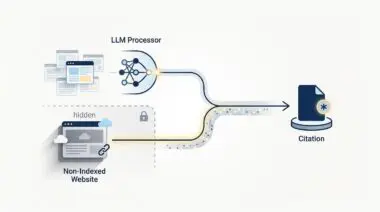

Furthermore, the emergence of Generative Engine Optimization (GEO) has created new vectors for exploitation. While businesses use schema markup and specialized text files to ensure AI crawlers accurately represent their brand, attackers are using these same layers to hide adversarial instructions. These instructions, often invisible to human eyes but legible to LLMs, can hijack the retrieving agent, leading to brand reputation damage or the dissemination of misinformation.

Prompt injection is akin to a high-security vault where the lock is perfectly forged, but the vault door is programmed to open whenever someone whispers a specific, clever phrase through the keyhole. It is a failure of logic, not a failure of hardware.

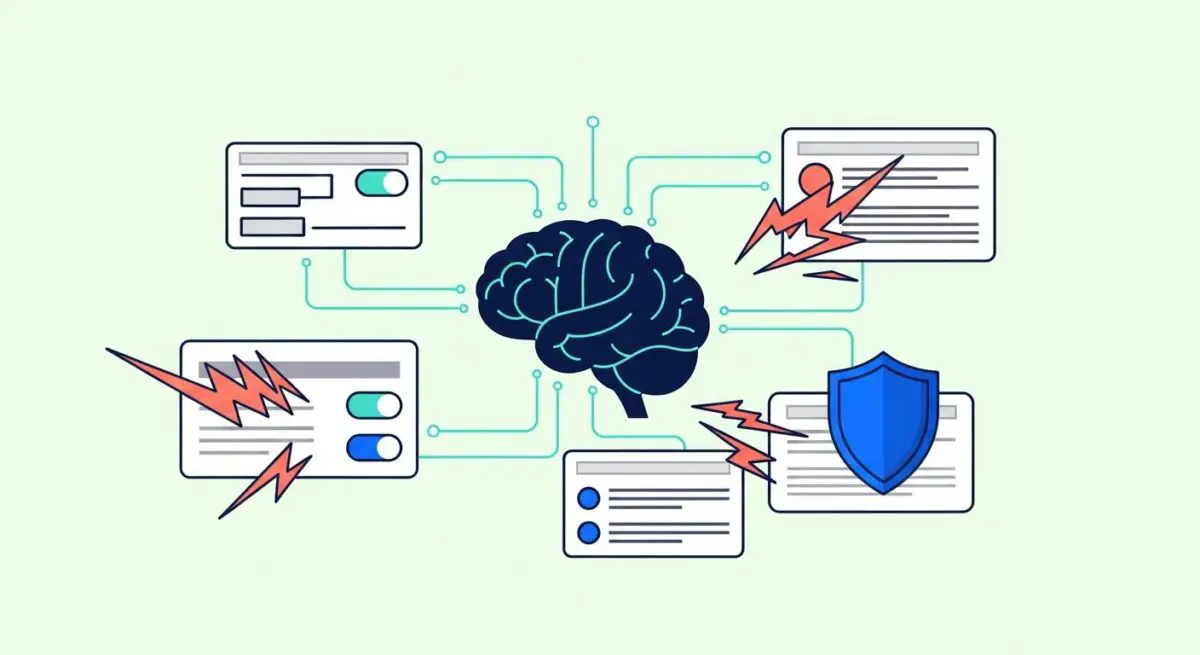

The Latency-Security Paradox and Operational Friction

One of the primary hurdles in scaling AI defense is the inherent trade-off between security and performance. High-fidelity safety models, such as Llama Guard, provide essential filtering but add significant latency—often exceeding 500 milliseconds per prompt. In high-frequency agentic workflows, this delay is often unacceptable. This has created a surge in demand for containerized immuno-defenses that operate in the sub-50ms range, yet these solutions have not yet reached the market scale required for global enterprise deployment.

Compounding this technical friction is a severe engineering talent deficit. AI Security Engineers are currently the most difficult roles to fill globally. While the majority of organizations are prioritizing AI-skilled hires, there is a distinct bottleneck at the expert level. Companies are finding that traditional cybersecurity expertise does not perfectly translate to the nuances of LLM safety, leading to siloed defenses where threat signals are not shared across the enterprise portfolio. This lack of a unified defense allows attackers to spray variations of an injection across different AI products within the same company.

Economic Impact and the Regulatory Catalyst

The financial stakes of failing to secure AI infrastructure are immense. Research indicates that prompt injection-driven breaches result in incident response costs that are twice as high as traditional data breaches. In the United States, AI-related incidents are frequently surpassing the $10M mark when accounting for regulatory fines and the lag in detection. Conversely, the ROI for enterprises that successfully harden their AI systems is substantial, with many reporting a 250% return within 18 months due to increased operational stability and consumer trust.

Regulation is also forcing the hand of the C-suite. The EU AI Act has established a clear deadline for compliance, requiring mandatory cybersecurity audits and documented conformity assessments for high-risk AI systems. Non-compliance is no longer a minor setback; it carries the risk of fines reaching up to 7% of global turnover. This regulatory shift has effectively transformed prompt injection defense from a technical best practice into a legal survival requirement for any firm operating on a global scale.

Andres’ Masterclass: The Big Picture

From my perspective in the strategy room, the conversation around prompt injection needs to move away from simple input filtering and toward a comprehensive AI Security Posture Management (AI-SPM) framework. The businesses that will thrive in this era are those that treat AI security as a core component of their tech stack, rather than an afterthought. We are seeing a clear divergence between companies that are delaying AI rollouts due to fear and those that are aggressively investing in hardened, proprietary gateways. The latter are building a competitive moat that is not just about the quality of their AI, but the resilience of their entire digital ecosystem.

We must recognize that the goal is not to eliminate risk entirely—which is impossible in a dynamic environment—but to manage it with surgical precision. This involves implementing in-context defenses and defensive distillation techniques that protect the model without crippling the user experience. As the market rewards unified visibility across the LLM supply chain, the strategic focus must remain on building a shared threat intelligence layer. In the long term, the winners will be the organizations that can automate their defense as effectively as they have automated their productivity.

Securing the Future of Autonomous Intelligence

The evolution of prompt injection defense is a testament to the maturity of the AI market. As we move toward more autonomous and integrated systems, the ability to protect the integrity of the prompt will define the boundary between innovation and liability. Business leaders must view AI security as a strategic enabler that allows for bolder experimentation and faster scaling. By addressing the latency-security paradox and investing in specialized talent, the enterprise can turn a significant vulnerability into a robust foundation for growth.

Navigating the intersection of generative search and operational efficiency requires more than just tools—it requires a roadmap. If you’re ready to evolve your strategy through specialized SEO, GEO, Adavanced Hosting Environments, or AI-driven automation, connect with Andres at Andres SEO Expert. Let’s build a future-proof foundation for your business together.