Executive Summary

- Agentic Orchestration: The transition from static RAG to autonomous loops allows multi-agent systems to cross-reference internal ERP data with real-time market signals for iterative reasoning.

- Inference Efficiency: Strategic value is now measured by the ability to fine-tune Small Language Models (SLMs) on proprietary telemetry and to route work in a 90/10 split: roughly 90 percent handled by low-cost local processing, with the remaining 10 percent escalated to high-reasoning frontier models.

- Algorithmic Explainability: Regulatory shifts mandate a move away from black-box models toward Explainable AI (XAI) architectures that provide a verifiable audit trail for every derived business insight.

The Evolution of Decision-Making in the Intelligence Era

The traditional reliance on executive intuition is undergoing a fundamental transformation. In the 2026 market landscape, competitive advantage has shifted from those who possess the most data to those who can distill that data into high-fidelity, actionable insights at the lowest marginal cost. This transition is not merely a technical upgrade; it is a structural reorganization of how business logic is applied to vast, unstructured data sets. The bifurcation of the market between generalist frontier labs and vertical intelligence sovereigns has created a new mandate for leadership: integrating agentic frameworks that move beyond simple query-response patterns into autonomous reasoning loops.

The Bifurcation of the AI Ecosystem

The current environment is characterized by a clear split in how intelligence is deployed. On one side, generalist models from major providers offer broad reasoning capabilities. On the other, specialized vertical sovereigns are emerging to dominate specific sectors such as legal and biotech. For the enterprise, the strategic challenge lies in navigating this landscape without becoming locked into a single ecosystem. Adoption of modular frameworks that support private deployments is rising sharply, and this modularity is essential for maintaining data sovereignty while still leveraging the reasoning power of large-scale models.

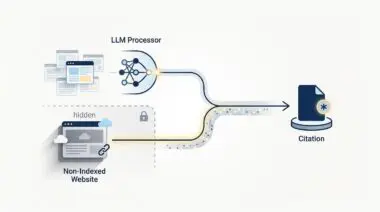

The Rise of Agentic RAG and Autonomous Loops

The technical architecture of insight has evolved from basic Retrieval-Augmented Generation (RAG) to what is now known as Agentic RAG. While traditional RAG simply retrieves documents to ground a model’s response, Agentic RAG uses multi-agent systems to perform iterative reasoning. These agents do not just find information; they evaluate its relevance, cross-reference it with internal ERP data, and adjust their search parameters based on intermediate findings. The result is a closed loop in which each insight is grounded in documented corporate history rather than in the model’s unverified guesses, which reduces the risk of hallucination.
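The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the in-memory DOCS store, the ERP dictionary, the term-overlap scoring (a stand-in for vector search), and the 0.4 relevance threshold are all hypothetical. It shows the three behaviors the paragraph names: evaluating relevance, refining the search from intermediate findings, and cross-referencing ERP records.

```python
from dataclasses import dataclass

# Hypothetical corpus and ERP table; a real system would query a vector index and an ERP API.
DOCS = {
    "memo-2024-q3": "supplier delay in region north raised logistics cost",
    "memo-2025-q1": "logistics cost stabilized after switching supplier",
    "press-2025": "market signal: freight prices rising in region north",
}
ERP = {"region north": {"open_orders": 42}}


@dataclass
class Finding:
    source: str
    text: str
    score: float


def retrieve(query: str) -> list[Finding]:
    """Score each document by the share of its terms that appear in the query."""
    terms = set(query.split())
    hits = []
    for doc_id, text in DOCS.items():
        doc_terms = set(text.split())
        overlap = len(terms & doc_terms)
        if overlap:
            hits.append(Finding(doc_id, text, overlap / len(doc_terms)))
    return sorted(hits, key=lambda f: f.score, reverse=True)


def agentic_rag(question: str, max_steps: int = 3, threshold: float = 0.4) -> dict:
    query, evidence, seen, steps = question, [], set(), 0
    for _ in range(max_steps):
        steps += 1
        # Evaluate relevance: keep only unseen hits that clear the threshold.
        new = [f for f in retrieve(query) if f.score >= threshold and f.source not in seen]
        if not new:
            break  # nothing new is relevant, so stop iterating
        evidence.extend(new)
        seen.update(f.source for f in new)
        # Adjust the search: expand the query with terms from the new findings.
        query = question + " " + " ".join(f.text for f in new)
    # Cross-reference: attach ERP records for entities mentioned in the evidence.
    erp = {key: rec for key, rec in ERP.items() if any(key in f.text for f in evidence)}
    return {"evidence": [f.source for f in evidence], "erp": erp, "steps": steps}


if __name__ == "__main__":
    print(agentic_rag("why is logistics cost rising in region north"))
```

In this toy run, the first pass surfaces the 2024 memo and the market signal. A term from the memo ("supplier") then pulls in the 2025 memo, which the original question alone scored below the threshold.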

The Infrastructure of Insight: From Cloud to Edge

To manage the dual pressures of latency and data security, a hybrid inference model has become the industry standard. High-intensity training and complex reasoning tasks are still handled by centralized clusters, but real-time insights are increasingly processed on-device or via edge nodes. This shift minimizes data egress costs and mitigates the security risks associated with sending sensitive proprietary data to external APIs. The deployment of Small Language Models (SLMs) on local infrastructure allows firms to maintain a proprietary data moat while achieving significant gains in inference efficiency.
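A placement policy for this hybrid model might look like the sketch below. The two tiers, the Task fields, and the rule that proprietary payloads never leave local infrastructure are simplifying assumptions for illustration; a real policy would also weigh latency budgets, model capability, and whether the central cluster is company-owned.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOCAL_SLM = "local_slm"        # small model on-device or on an edge node
    CENTRAL = "central_cluster"    # centralized cluster for high-intensity reasoning


@dataclass
class Task:
    name: str
    contains_proprietary_data: bool
    needs_deep_reasoning: bool
    payload_bytes: int


def place(task: Task) -> Tier:
    # Assumed rule: proprietary payloads never leave local infrastructure.
    if task.contains_proprietary_data:
        return Tier.LOCAL_SLM
    # Non-sensitive work that needs heavy reasoning goes to the central cluster.
    if task.needs_deep_reasoning:
        return Tier.CENTRAL
    # Everything else stays local for lower latency and no egress.
    return Tier.LOCAL_SLM


def egress_bytes(tasks: list[Task]) -> int:
    """Total payload that would leave local infrastructure under this policy."""
    return sum(t.payload_bytes for t in tasks if place(t) is Tier.CENTRAL)


if __name__ == "__main__":
    tasks = [
        Task("invoice-anomaly-scan", True, True, 5_000),
        Task("public-market-summary", False, True, 2_000),
        Task("kpi-refresh", False, False, 800),
    ]
    for t in tasks:
        print(t.name, "->", place(t).value)
    print("egress bytes:", egress_bytes(tasks))
```

Pinning sensitive work locally trades some reasoning power for a smaller egress footprint; only the non-sensitive, reasoning-heavy task contributes to egress here.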

Generative Engine Optimization for Internal Knowledge

A critical component of this infrastructure is the move from traditional search to Generative Engine Optimization (GEO) within the enterprise. Organizations are now deploying semantic knowledge graphs that serve as the backbone for their AI agents. By structuring internal data as a graph rather than a flat file system, companies ensure that their AI systems understand the relationships between different business units, historical decisions, and market outcomes. This semantic layer is what allows a system to move from simply providing information to providing true strategic insight.
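A semantic layer can be illustrated with a tiny in-memory graph of subject-relation-object triples. The node and relation names below are hypothetical, and a production system would typically use a dedicated graph database. The point is that relationships such as which unit made which decision, and what that decision led to, become traversable rather than buried in flat files.

```python
from collections import defaultdict, deque


class KnowledgeGraph:
    """Minimal directed graph of (subject, relation, object) triples."""

    def __init__(self):
        self.edges = defaultdict(list)  # subject -> [(relation, object), ...]

    def add(self, subject: str, relation: str, obj: str) -> None:
        self.edges[subject].append((relation, obj))

    def paths(self, start: str, max_hops: int = 2) -> list[list[tuple[str, str, str]]]:
        """Breadth-first enumeration of every relation path of up to max_hops edges."""
        results = []
        queue = deque([(start, [])])
        while queue:
            node, path = queue.popleft()
            if len(path) == max_hops:
                continue  # do not expand beyond the hop limit
            for relation, neighbor in self.edges.get(node, []):
                new_path = path + [(node, relation, neighbor)]
                results.append(new_path)
                queue.append((neighbor, new_path))
        return results


if __name__ == "__main__":
    kg = KnowledgeGraph()
    # Hypothetical business facts.
    kg.add("EMEA Sales", "part_of", "Commercial Division")
    kg.add("EMEA Sales", "made_decision", "2023 pricing change")
    kg.add("2023 pricing change", "resulted_in", "higher churn")
    outcomes = [p[-1][2] for p in kg.paths("EMEA Sales") if p[-1][1] == "resulted_in"]
    print(outcomes)
```

A two-hop query from a business unit reaches the outcomes of its decisions, which is the kind of relationship a flat file system cannot express directly.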

Relying on legacy intuition in a high-speed market is like navigating a dense fog with a paper map; AI-driven insight is the equivalent of a heads-up display that synthesizes radar, satellite, and thermal data into a single, clear path forward.

The Economics of the Insight Gap

Despite the availability of advanced models, many organizations struggle with the insight gap: the space between having data and being able to act on it. This gap is often caused by dirty data and the high latency of legacy systems. In sectors like banking and insurance, the primary bottleneck is API-fication, the work of exposing legacy mainframe functions through modern APIs. AI agents can reason with incredible speed, but they are often throttled by the middleware required to interact with older systems. Addressing this last-mile problem is essential for realizing the projected 22 to 30 percent reduction in operational expenses that autonomous analytical workflows promise.
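To make API-fication concrete, the sketch below wraps a fixed-width mainframe record in a parser that returns structured fields an agent can consume. The record layout, field offsets, and status codes are hypothetical; real layouts usually come from the system’s own record definitions (for example, COBOL copybooks).

```python
from decimal import Decimal

# Hypothetical record layout: (field, start offset, end offset) in characters.
LAYOUT = [("account_id", 0, 10), ("balance_cents", 10, 22), ("status", 22, 23)]
RECORD_LENGTH = 23
STATUS_CODES = {"A": "active", "C": "closed", "F": "frozen"}


def parse_record(line: str) -> dict:
    """Translate one fixed-width legacy record into structured fields."""
    if len(line) < RECORD_LENGTH:
        raise ValueError(f"record too short: expected {RECORD_LENGTH} chars, got {len(line)}")
    raw = {name: line[start:end].strip() for name, start, end in LAYOUT}
    return {
        "account_id": raw["account_id"],
        # Balances are stored as zero-padded integer cents; Decimal avoids float rounding.
        "balance": Decimal(raw["balance_cents"]) / 100,
        "status": STATUS_CODES.get(raw["status"], "unknown"),
    }


def parse_batch(lines: list[str]) -> list[dict]:
    """Parse a batch export, skipping blank lines."""
    return [parse_record(line) for line in lines if line.strip()]


if __name__ == "__main__":
    print(parse_batch(["ACC0000042000000123456A", "", "ACC0000043000000000099C"]))
```

Placing this translation in a thin adapter keeps the agent side working with named, typed fields, while the mainframe format stays untouched.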

Measuring the Cost-per-Insight

As AI becomes a core utility, the benchmark for success is the Cost-per-Insight (CPI). High-reasoning frontier models are expensive to run at scale, leading to inference budget creep. To maintain margins, successful enterprises are adopting a 90/10 split: 90 percent of routine analytical tasks are handled by low-cost SLMs, while only the most complex 10 percent are escalated to high-reasoning models. This tiered approach ensures that the cost of generating an insight does not outpace the value that insight provides to the business.
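The tiered approach and the CPI metric can be sketched together. The per-job prices, the 0-to-1 complexity score, and the 0.9 escalation threshold are all assumptions, chosen so that uniformly distributed jobs split 90/10; how complexity is actually scored is left open here.

```python
from dataclasses import dataclass

# Hypothetical per-job inference prices in USD; replace with measured costs.
SLM_COST = 0.002
FRONTIER_COST = 0.10


@dataclass
class Job:
    complexity: float      # 0.0 (routine) to 1.0 (hardest); the scoring method is assumed
    yields_insight: bool   # whether the output was judged actionable


def route(job: Job, threshold: float = 0.9) -> str:
    """Escalate only jobs at or above the complexity threshold to the frontier model."""
    return "frontier" if job.complexity >= threshold else "slm"


def cost_per_insight(jobs: list[Job], threshold: float = 0.9) -> float:
    """Total inference spend divided by the number of actionable insights produced."""
    total = sum(FRONTIER_COST if route(j, threshold) == "frontier" else SLM_COST for j in jobs)
    insights = sum(j.yields_insight for j in jobs)
    return total / insights if insights else float("inf")


if __name__ == "__main__":
    jobs = [Job(i / 100, True) for i in range(100)]  # uniform complexities 0.00 to 0.99
    print("escalated:", sum(route(j) == "frontier" for j in jobs))
    print("tiered CPI:", round(cost_per_insight(jobs), 4))
    print("all-frontier CPI:", round(cost_per_insight(jobs, threshold=0.0), 4))
```

With these assumed prices and 100 jobs that each yield an insight, the 90/10 split costs (90 × 0.002 + 10 × 0.10) / 100 ≈ $0.0118 per insight, versus $0.10 if every job were escalated to the frontier model.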

Regulatory Mandates and Algorithmic Explainability

The shift toward AI-driven insight is also being shaped by a new regulatory environment. Modern mandates now require algorithmic explainability for any AI-driven decision that affects human capital or financial standing. This has acted as a catalyst for the development of Explainable AI (XAI). It is no longer enough for a model to provide a correct answer; it must also provide a verifiable audit trail of how that answer was derived. This requirement is forcing a move away from black-box intuition models toward architectures that prioritize transparency and auditability as core technical requirements.
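One building block of such an audit trail is a tamper-evident log of derivation steps. The hash-chained sketch below is an assumption about how that could be done, not a regulatory standard: each entry stores the step, its inputs, its output, and the hash of the previous entry, so any later edit breaks verification. It records how an answer was assembled; it does not by itself explain a model’s internal reasoning.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    """Append-only, hash-chained record of how an answer was derived."""

    def __init__(self):
        self.entries = []

    def record(self, step: str, inputs: dict, output) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"step": step, "inputs": inputs, "output": output, "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash and check each entry links to its predecessor."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("step", "inputs", "output", "prev")}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    trail = AuditTrail()
    trail.record("retrieve", {"query": "credit limit policy"}, ["policy-2025"])
    trail.record("decide", {"evidence": ["policy-2025"]}, "approve")
    print("intact:", trail.verify())
```

Because every hash covers the previous one, an auditor can confirm that no intermediate step was altered after the fact.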

Andres’ Masterclass: The Big Picture

From my perspective, the transition from intuition to insight is the single most important shift in corporate strategy since the dawn of the internet. However, many leaders are focusing on the wrong metrics. They are chasing model size when they should be focusing on data orchestration and inference efficiency. The real winners in this space will not be the companies with the most expensive AI subscriptions, but those who have built the most robust proprietary data moats. Your data is your only sustainable competitive advantage in an era where intelligence itself is becoming a commodity.

We must view AI not as a replacement for human judgment, but as a high-fidelity filter that removes the noise from the decision-making process. The goal is to move from a human-in-the-loop model to a human-on-the-loop model, where executives spend less time gathering and cleaning data and more time making high-stakes strategic choices based on the insights provided by autonomous systems. Scalability in this new era is defined by how well you can automate the analytical mid-tier of your organization while maintaining a clear, auditable path from data to decision.

Future-Proofing the Insight Engine

Navigating the intersection of generative search and operational efficiency requires more than tools; it requires a roadmap. If you’re ready to evolve your strategy through specialized SEO, GEO, Advanced Hosting Environments, or AI-driven automation, connect with Andres at Andres SEO Expert. Let’s build a future-proof foundation for your business together.