Executive Summary

- Decouples request-response cycles to enable non-blocking execution of high-latency tasks.

- Critical for managing LLM inference times and large-scale programmatic SEO data pipelines.

- Utilizes message brokers and webhooks to maintain system stability under heavy computational loads.

What is Asynchronous Processing?

Asynchronous processing is a design pattern in software architecture where a task is initiated without requiring the calling thread or process to wait for its completion. In the context of AI automations and web development, this decouples the execution of a function from the immediate request-response cycle. Instead of blocking the system while a long-running operation—such as an LLM generating a 2,000-word article—finishes, the system acknowledges the request, provides a tracking identifier, and continues processing other tasks.

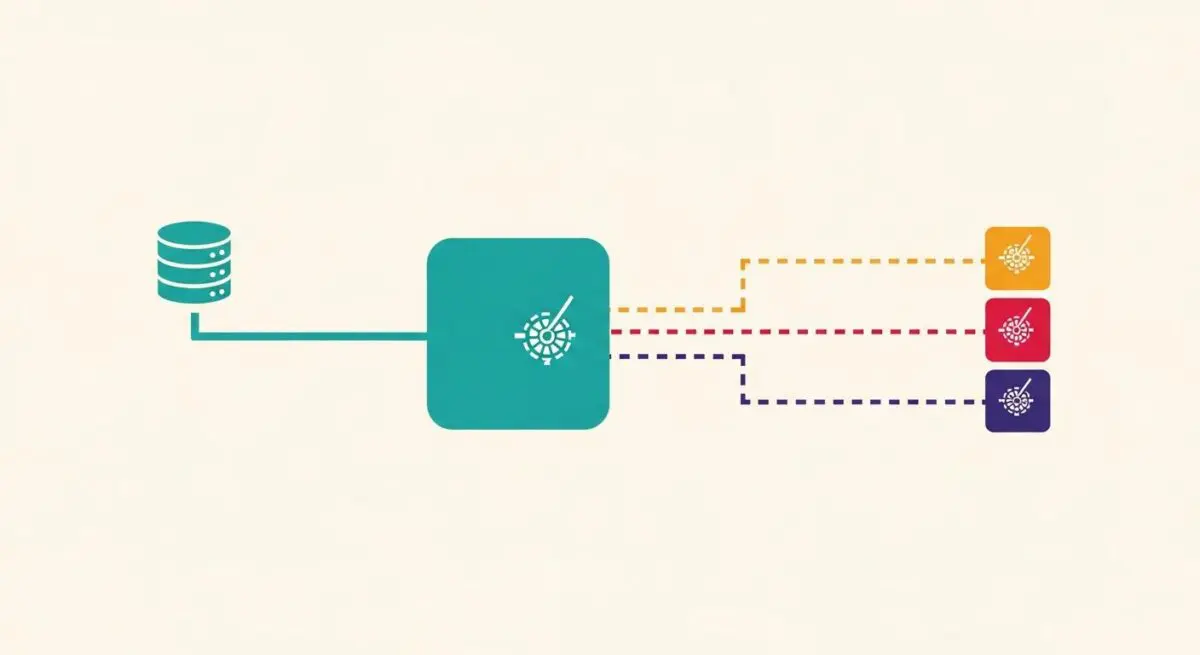

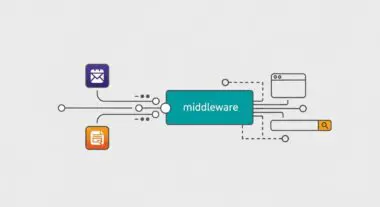

This mechanism typically relies on message brokers (e.g., Redis, RabbitMQ) and task queues (e.g., Celery, BullMQ). When a request enters the system, it is placed into a queue. A worker process then consumes the task from the queue independently. Once the task is finalized, the system communicates the result back to the original requester, often via a webhook or by updating a database state that the client polls intermittently. This architecture is fundamental for building resilient, stateless automation systems that can handle unpredictable workloads without timing out.

The Real-World Analogy

Imagine a high-end restaurant. In a synchronous model, you would place your order at the counter and stand there, blocking the line, until the chef finishes cooking your meal. No one else can order until you receive your plate. In an asynchronous model, you place your order, the cashier gives you a vibrating pager, and you go sit at your table to check your emails. The kitchen works on your meal in the background. When the meal is ready, your pager vibrates (the webhook), and you collect your food. This allows the restaurant to handle dozens of customers simultaneously without the line ever stopping.

Why is Asynchronous Processing Critical for Autonomous Workflows and AI Content Ops?

For AI content operations, latency is the primary bottleneck. Large Language Models (LLMs) often require several seconds or even minutes to process complex prompts. If an automation workflow operates synchronously, the connection between the server and the API will likely time out, leading to data loss and broken pipelines. Asynchronous processing allows for horizontal scaling; you can spin up multiple worker instances to process a backlog of content generation tasks in parallel without affecting the performance of the user interface or the primary application logic.

Furthermore, it is essential for stateless automation. By offloading heavy computational tasks to background workers, the main application remains lightweight and responsive. This is particularly vital for programmatic SEO, where thousands of pages may need to be generated, optimized, and indexed. Asynchronous patterns ensure that if one task fails, it does not crash the entire sequence, allowing for granular error handling and automated retries.

Best Practices & Implementation

- Implement Idempotency: Ensure that if a task is accidentally processed twice, the outcome remains the same. Use unique idempotency keys for every API request to prevent duplicate content generation.

- Leverage Webhooks for Callbacks: Instead of forcing the client to poll the server for updates, use webhooks to push data to the destination once the asynchronous task is complete.

- Monitor Queue Depth: Use monitoring tools to track the number of pending tasks. High queue depth indicates a need for more worker resources to maintain throughput.

- Use Exponential Backoff: When an asynchronous task fails due to rate limits or API downtime, implement a retry strategy that waits progressively longer between attempts.

Common Mistakes to Avoid

A frequent error is the failure to implement proper dead-letter queues (DLQ). When a task fails repeatedly, it should be moved to a DLQ for manual inspection rather than clogging the main processing pipeline. Another mistake is ignoring race conditions; if multiple workers attempt to update the same database record simultaneously without proper locking mechanisms, data integrity can be compromised.

Conclusion

Asynchronous processing is the structural backbone of scalable AI automation, enabling systems to handle high-latency tasks with resilience and efficiency. By decoupling execution from the request cycle, organizations can build robust data pipelines capable of supporting massive programmatic content operations.