Executive Summary

- Concurrency refers to a system’s ability to manage multiple instruction sequences in overlapping time periods, optimizing resource throughput.

- In web performance, concurrency is vital for keeping Time to First Byte (TTFB) low under load and for absorbing high-volume traffic without latency growing in step with request count.

- Modern protocols like HTTP/2 and HTTP/3 use multiplexing to carry many requests concurrently over one connection, which reduces head-of-line blocking and accelerates resource delivery.

What is Concurrency?

Concurrency is a fundamental concept in computer science and web performance engineering that describes the ability of a system to process multiple tasks or requests in overlapping time intervals. It is distinct from parallelism; while parallelism involves the simultaneous execution of tasks on multiple processors, concurrency is about the efficient management and interleaving of tasks to maximize the utilization of available resources. In a web environment, this means a server or browser can initiate, progress, and complete multiple operations without waiting for each to finish sequentially.

Technical implementation of concurrency typically involves non-blocking I/O operations and asynchronous execution models. For instance, a concurrent web server can handle an incoming HTTP request, initiate a file system read, and while waiting for the data to return, accept another incoming connection. This prevents the CPU from remaining idle during high-latency I/O operations, which is critical for maintaining high throughput in modern, data-intensive web applications.
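The overlap described above can be sketched with Python's `asyncio`. This is a minimal illustration, not a real server: `handle_request` and `serve` are hypothetical names, and `asyncio.sleep` stands in for a non-blocking file or network read.

```python
import asyncio
import time


async def handle_request(request_id: int, io_delay: float) -> str:
    """Simulate a handler that waits on slow I/O (for example, a file read)."""
    # asyncio.sleep stands in for a non-blocking read; while this coroutine
    # is suspended, the event loop is free to run other handlers.
    await asyncio.sleep(io_delay)
    return f"response-{request_id}"


async def serve(request_count: int, io_delay: float) -> tuple[list[str], float]:
    """Handle all requests concurrently on one thread.

    Returns the responses (in request order) and the elapsed wall time.
    """
    started = time.perf_counter()
    responses = await asyncio.gather(
        *(handle_request(i, io_delay) for i in range(request_count))
    )
    return list(responses), time.perf_counter() - started


if __name__ == "__main__":
    responses, elapsed = asyncio.run(serve(request_count=5, io_delay=0.2))
    print(responses)
    print(f"elapsed: {elapsed:.2f}s")
```

Because the five simulated waits overlap, the total elapsed time should be close to one `io_delay` rather than five of them, even though only a single thread is doing the work.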

The Real-World Analogy

Consider a professional chef in a high-end kitchen. If the chef worked sequentially, they would wait for the water to boil before even touching a knife to chop vegetables. In a concurrent kitchen, the chef turns on the stove (starting an I/O task) and immediately begins prepping the garnish. While the water heats, they might also start searing a protein, switching between the pan and the cutting board as each needs attention. The chef is juggling several lines of work in overlapping time, so the final dish is completed much faster than if each step were performed in isolation, even though the chef is a single resource.

Why is Concurrency Critical for Website Performance and Speed Engineering?

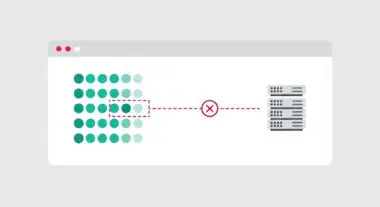

Concurrency is the architectural backbone that enables modern web protocols to overcome the limitations of legacy systems. Under HTTP/1.1, each connection carries one response at a time and browsers cap the number of concurrent connections per host (commonly around six), so a large or slow resource can hold up the assets queued behind it on the same connection, a form of head-of-line blocking. HTTP/2 and HTTP/3 handle concurrency through multiplexing, which interleaves many request and response streams over a single connection. HTTP/2 still runs over TCP, so a lost packet can stall every stream on that connection; HTTP/3 runs over QUIC, which delivers streams independently of one another. Multiplexing can improve Largest Contentful Paint (LCP) by keeping critical render-blocking assets from queuing behind non-essential data.
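To make the queuing effect concrete, the sketch below compares finish times for responses sent one at a time against an idealized multiplexed connection. It is a toy model under loud assumptions: bandwidth is fixed, every unfinished stream gets an equal share, and latency, packet loss, and stream prioritization are ignored. The function names and the sizes used are illustrative only.

```python
def serial_finish_times(sizes_kb: list[float], bandwidth_kb_per_s: float) -> list[float]:
    """One response at a time, in request order: each waits for all earlier ones."""
    finish_times = []
    elapsed = 0.0
    for size in sizes_kb:
        elapsed += size / bandwidth_kb_per_s
        finish_times.append(elapsed)
    return finish_times


def multiplexed_finish_times(sizes_kb: list[float], bandwidth_kb_per_s: float) -> list[float]:
    """Idealized fair interleaving (assumption): equal share per unfinished stream.

    A stream of size s finishes once every stream has sent min(its size, s),
    so small responses are not held up behind large ones.
    """
    return [
        sum(min(other, size) for other in sizes_kb) / bandwidth_kb_per_s
        for size in sizes_kb
    ]


if __name__ == "__main__":
    # Hypothetical page: a 2000 KB image requested before a 20 KB stylesheet.
    sizes = [2000.0, 20.0]
    print("serial:     ", serial_finish_times(sizes, 1000.0))
    print("multiplexed:", multiplexed_finish_times(sizes, 1000.0))
```

In this model the stylesheet finishes after about 2.02 s when it queues behind the image, but after about 0.04 s when the two streams are interleaved, while the image's own finish time barely changes.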

Furthermore, on the server side, concurrency management is essential for maintaining a stable Time to First Byte (TTFB). By utilizing asynchronous processing and efficient thread pooling, servers can handle thousands of concurrent users without exhausting system memory or causing CPU thrashing. This scalability is vital for enterprise-level hosting where traffic spikes are common and performance consistency is a requirement for both user experience and search engine visibility.
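Thread pooling can be sketched with Python's standard `concurrent.futures` module. The handler `render_page` and the wrapper `serve_with_pool` are hypothetical, and `time.sleep` stands in for a blocking backend call; the point is only that the number of threads stays fixed no matter how many requests arrive.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def render_page(user_id: int) -> tuple[int, str]:
    """Hypothetical blocking handler: pretend to query a backend, then respond."""
    time.sleep(0.05)  # stands in for blocking I/O such as a database call
    return user_id, threading.current_thread().name


def serve_with_pool(user_ids: list[int], max_workers: int) -> list[tuple[int, str]]:
    """Run handlers on a fixed-size pool so the thread count stays bounded."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() preserves input order; excess requests wait in the pool's
        # internal queue instead of spawning additional threads.
        return list(pool.map(render_page, user_ids))


if __name__ == "__main__":
    results = serve_with_pool(list(range(20)), max_workers=4)
    threads_used = {thread_name for _, thread_name in results}
    print(f"handled {len(results)} requests on {len(threads_used)} threads")
```

Capping `max_workers` trades some queuing delay for predictable memory use and less context switching, which is the balance the paragraph above describes.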

Best Practices & Implementation

- Leverage HTTP/3 Multiplexing: Implement HTTP/3 to utilize its advanced concurrency model, which uses the QUIC protocol to eliminate transport-layer head-of-line blocking.

- Optimize Database Connection Pools: Ensure that your application uses a managed pool of database connections to handle concurrent queries efficiently without the overhead of repeated handshakes.

- Implement Asynchronous I/O: Use non-blocking programming patterns in your backend (e.g., the Node.js event loop or Go's goroutines) to prevent I/O-bound tasks from blocking the execution of other requests.

- Tune Worker Pools: Fine-tune server configurations (such as Nginx worker_processes or PHP-FPM pm.max_children) to match the hardware’s CPU and RAM capacity, avoiding the performance degradation caused by excessive context switching.

- Utilize Edge Computing: Offload concurrent request handling to the network edge using CDNs, which can distribute the processing load across a global network of servers.
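As one illustration of the connection-pool practice in the list above, here is a minimal sketch of a fixed-size pool. `FakeConnection` and `ConnectionPool` are hypothetical classes written for this example, not the API of any database driver; a production application would normally rely on the pooling built into its driver or framework.

```python
import queue
from contextlib import contextmanager


class FakeConnection:
    """Hypothetical stand-in for a real database connection."""

    def __init__(self, conn_id: int) -> None:
        self.conn_id = conn_id

    def query(self, sql: str) -> str:
        return f"conn-{self.conn_id} ran: {sql}"


class ConnectionPool:
    """Fixed-size pool: connections are opened once and then reused."""

    def __init__(self, size: int) -> None:
        self._idle = queue.Queue(maxsize=size)
        for conn_id in range(size):
            self._idle.put(FakeConnection(conn_id))

    @contextmanager
    def connection(self, timeout: float = 1.0):
        # Blocks until a connection is free and raises queue.Empty on timeout,
        # which keeps concurrent demand on the database bounded.
        conn = self._idle.get(timeout=timeout)
        try:
            yield conn
        finally:
            self._idle.put(conn)  # always hand the connection back


if __name__ == "__main__":
    pool = ConnectionPool(size=2)
    with pool.connection() as conn:
        print(conn.query("SELECT 1"))
```

The pool size plays the same role as a worker limit: it should be chosen against what the database can actually serve concurrently, not against the number of incoming requests.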

Common Mistakes to Avoid

A frequent error in speed engineering is unbounded concurrency, where a system accepts more simultaneous tasks than it has resources for, leading to resource exhaustion and eventual system failure. Another common pitfall is ignoring shared resource contention; when multiple concurrent processes modify the same data without proper locking or other synchronization, the result can be data corruption or inconsistent application state. Finally, many teams fail to monitor queue depth, focusing only on active concurrent requests while ignoring the backlog of tasks waiting for resources, which hides latent performance issues.
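A minimal sketch of bounding concurrency while also tracking queue depth, assuming an `asyncio` application. `BoundedRunner` and `main` are hypothetical names. The counters need no lock here because `asyncio` runs these coroutines on a single thread and no `await` separates each read from its write; a multi-threaded version would need something like `threading.Lock` around them.

```python
import asyncio


class BoundedRunner:
    """Cap in-flight tasks and record how many are waiting for a slot."""

    def __init__(self, limit: int) -> None:
        self._slots = asyncio.Semaphore(limit)
        self.active = 0        # tasks currently holding a slot
        self.waiting = 0       # queue depth: tasks blocked on the semaphore
        self.peak_active = 0
        self.peak_waiting = 0

    async def run(self, delay: float) -> None:
        self.waiting += 1
        self.peak_waiting = max(self.peak_waiting, self.waiting)
        try:
            await self._slots.acquire()
        finally:
            self.waiting -= 1  # also runs if the wait is cancelled
        self.active += 1
        self.peak_active = max(self.peak_active, self.active)
        try:
            await asyncio.sleep(delay)  # stands in for the real work
        finally:
            self.active -= 1
            self._slots.release()


async def main(task_count: int = 10, limit: int = 3) -> BoundedRunner:
    runner = BoundedRunner(limit)  # created inside the running event loop
    await asyncio.gather(*(runner.run(0.05) for _ in range(task_count)))
    return runner


if __name__ == "__main__":
    stats = asyncio.run(main())
    print(f"peak active: {stats.peak_active}, peak waiting: {stats.peak_waiting}")
```

Reporting `peak_waiting` alongside `peak_active` surfaces the backlog that an active-request count alone would hide.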

Conclusion

Concurrency is a critical mechanism for optimizing resource utilization and minimizing latency in modern web environments. By bounding and monitoring concurrent work on the server and adopting multiplexed protocols such as HTTP/2 and HTTP/3, web performance architects can deliver fast, scalable sites.