Executive Summary

- Top-P Sampling, also known as Nucleus Sampling, is a stochastic decoding strategy that selects tokens from a dynamic subset of the probability distribution based on a cumulative threshold.

- Unlike Top-K sampling, which always keeps a fixed number of candidates, Top-P lets the candidate pool shrink when the model is confident and grow when it is uncertain, which helps keep output fluent without sacrificing coherence.

- Optimizing Top-P values is a critical component of Generative Engine Optimization (GEO) to balance creative brand expression with factual accuracy in AI-generated outputs.

What is Top-P Sampling?

Top-P Sampling, frequently referred to as Nucleus Sampling, is a technique used in Large Language Models (LLMs) to control the randomness and diversity of generated text. During the inference phase, an LLM generates a probability distribution over the candidate next tokens in a sequence. Instead of selecting from a fixed number of top candidates, as in Top-K sampling, Top-P ranks tokens by probability and keeps the smallest set whose cumulative probability mass reaches or exceeds a predefined threshold p (e.g., 0.9 or 90%). The kept probabilities are renormalized, and the next token is sampled from that set.
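The selection step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not any particular library's implementation: the function name `top_p_filter` and the toy distribution are assumptions made for the example.

```python
from typing import List, Tuple


def top_p_filter(probs: List[float], p: float) -> List[Tuple[int, float]]:
    """Return (token_index, renormalized_prob) pairs for the nucleus.

    Hypothetical helper for illustration; `probs` is assumed to be a
    next-token distribution that sums to 1.
    """
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    # Rank token indices from most to least probable.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    nucleus, cumulative = [], 0.0
    for i in ranked:
        nucleus.append(i)
        cumulative += probs[i]
        # Stop as soon as the kept mass reaches the threshold.
        if cumulative >= p:
            break
    # Renormalize so the kept probabilities sum to 1.
    return [(i, probs[i] / cumulative) for i in nucleus]


# Toy distribution over five tokens (made-up numbers).
toy_probs = [0.5, 0.25, 0.125, 0.0625, 0.0625]
nucleus = top_p_filter(toy_probs, p=0.75)  # keeps tokens 0 and 1
```

With `p=0.75`, the two most likely tokens already cover the threshold, so the remaining three are never considered during sampling.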

The primary technical advantage of this approach is its dynamic nature. When the model is highly confident about the next word, a few tokens hold most of the probability mass, so the “nucleus” is small, which reduces the risk of sampling gibberish or irrelevant content. Conversely, when the model is less certain, the mass is spread across many tokens and the pool expands to include more diverse options, which helps keep the output from becoming repetitive or overly generic. In both cases, the truncation cuts off the “long tail” of low-probability tokens that typically leads to incoherence in long-form generation.
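To make the contrast with Top-K concrete, the sketch below counts how many tokens the nucleus contains for a peaked distribution and for a flat one. The helper `nucleus_size` and both distributions are hypothetical and chosen only for illustration.

```python
from typing import List


def nucleus_size(probs: List[float], p: float) -> int:
    """Count how many top-ranked tokens are needed to reach mass p."""
    cumulative = 0.0
    for count, prob in enumerate(sorted(probs, reverse=True), start=1):
        cumulative += prob
        if cumulative >= p:
            return count
    return len(probs)


# A confident model: one token dominates (made-up numbers).
confident = [0.875, 0.0625, 0.03125, 0.03125]
# An uncertain model: eight equally likely tokens.
uncertain = [0.125] * 8

size_confident = nucleus_size(confident, p=0.75)  # 1 token
size_uncertain = nucleus_size(uncertain, p=0.75)  # 6 tokens
```

A Top-K filter with K=3 would keep three candidates in both cases: two more than needed for the confident distribution, and three fewer than Top-P keeps for the uncertain one.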

The Real-World Analogy

Imagine you are a scout for a professional sports team. Instead of always looking at exactly the top 10 players available (Top-K), you rank the players and look at the smallest group at the top that collectively holds 90% of the league’s total talent rating (Top-P). In a year when talent is concentrated in a few stars, your pool might only include 5 superstars because they represent the bulk of the value. In a year where talent is spread thin, your pool might expand to 20 players to ensure you aren’t missing anyone viable. Top-P allows the AI to be as selective or as broad as the context requires, so that it only considers “qualified” candidates for the next word.

Why is Top-P Sampling Important for GEO and LLMs?

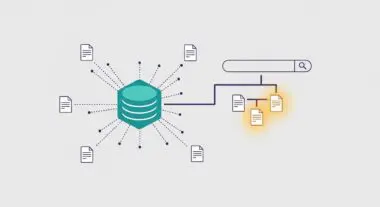

For Generative Engine Optimization (GEO), Top-P sampling directly influences how a brand’s information is synthesized and presented by AI agents. A lower Top-P value makes output more deterministic and keeps it closer to the model’s most likely wording, which helps maintain technical accuracy and brand authority in Retrieval-Augmented Generation (RAG) systems, although it does not guarantee factual correctness on its own. If an AI search engine uses an excessively high Top-P value when summarizing your content, it may introduce creative variations that inadvertently alter the technical meaning or nuance of your data.

Furthermore, understanding Top-P helps SEO and AI-Search professionals predict how LLMs might paraphrase their content. Content that is structured with high semantic clarity tends to result in a more concentrated probability distribution. This makes it more likely that the AI will select the most accurate tokens during the sampling process, thereby preserving the original intent, factual integrity, and source attribution of the material within the generative response.

Best Practices & Implementation

- Calibrate for Intent: Set Top-P to lower values (0.1 – 0.4) for technical documentation, financial data, and factual Q&A to favor precise, predictable wording and reduce the chance of hallucinated details.

- Enable Creative Fluidity: Utilize moderate Top-P values (0.7 – 0.9) for marketing copy or blog content to allow for natural linguistic variation without losing the logical narrative thread.

- Isolate Parameters: Top-P and Temperature both influence the entropy of the output distribution, so it is generally recommended to adjust one and leave the other at its default; changing both at once makes output behavior harder to predict.

- RAG Evaluation: Test different Top-P thresholds during the RAG evaluation phase to determine which setting best preserves the specific terminology and nomenclature of your industry niche.
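As a rough illustration of how these settings interact, the sketch below applies Temperature to raw logits first and Top-P truncation second, which is a common ordering but an assumption here rather than a description of any specific engine. The function `sample_next_token`, the `PRESETS` values (picked from the ranges above), and the toy logits are all hypothetical.

```python
import math
import random
from typing import Dict, List, Optional

# Illustrative presets drawn from the ranges suggested above; only top_p
# varies, while temperature stays at a neutral 1.0.
PRESETS: Dict[str, Dict[str, float]] = {
    "technical_docs": {"temperature": 1.0, "top_p": 0.3},
    "marketing_copy": {"temperature": 1.0, "top_p": 0.9},
}


def sample_next_token(
    logits: List[float],
    temperature: float,
    top_p: float,
    rng: Optional[random.Random] = None,
) -> int:
    """Sample one token index using temperature scaling, then Top-P."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    rng = rng or random.Random()
    # Temperature rescales logits: >1 flattens, <1 sharpens the distribution.
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]  # numerically stable softmax
    total = sum(exps)
    probs = [e / total for e in exps]
    # Top-P: keep the smallest top-ranked set whose mass reaches top_p.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    # random.choices normalizes the weights, so no explicit rescaling is needed.
    return rng.choices(kept, weights=[probs[i] for i in kept], k=1)[0]


toy_logits = [5.0, 1.0, 0.0, -1.0]  # made-up scores for four tokens
token = sample_next_token(toy_logits, rng=random.Random(0), **PRESETS["technical_docs"])
```

Because the first token holds well over 30% of the probability mass in this toy example, the `technical_docs` preset always selects it; a RAG evaluation would compare such presets against real prompts rather than toy logits.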

Common Mistakes to Avoid

One frequent error is setting Top-P to 1.0 (effectively disabling the filter) while simultaneously using a high Temperature setting; with no truncation, the flattened distribution lets low-probability tokens through, which often leads to incoherent, hallucinated, or nonsensical content. Another mistake is applying a static Top-P value across all content types; technical specifications require much tighter constraints than creative storytelling to maintain brand safety and factual reliability.
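One way to catch the first mistake early is a small configuration check that runs before any generation call. The sketch below is hypothetical: the function name `check_sampling_config` and the cutoff of 1.0 for a "high" Temperature are assumptions, and the right cutoff depends on the model and provider.

```python
from typing import List


def check_sampling_config(
    temperature: float,
    top_p: float,
    high_temperature: float = 1.0,  # assumed cutoff, tune per model
) -> List[str]:
    """Return human-readable warnings for risky sampling settings."""
    warnings: List[str] = []
    if not 0.0 < top_p <= 1.0:
        warnings.append("top_p should be in the range (0, 1].")
    if temperature < 0:
        warnings.append("temperature should not be negative.")
    # Top-P of 1.0 keeps every token, so a high temperature is unfiltered.
    if top_p >= 1.0 and temperature > high_temperature:
        warnings.append(
            "top_p=1.0 disables truncation; combined with a high "
            "temperature this can produce incoherent output."
        )
    return warnings


risky = check_sampling_config(temperature=1.5, top_p=1.0)
safe = check_sampling_config(temperature=0.7, top_p=0.9)
```

Here `risky` contains one warning and `safe` is an empty list; the same function could be called once per content type so that each type carries its own reviewed settings.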

Conclusion

Top-P Sampling is a vital mechanism for balancing linguistic creativity with factual grounding in LLMs. Mastering its application is essential for ensuring brand accuracy and maximizing visibility within the evolving landscape of AI-driven search and Generative Engine Optimization.