Key Points

- Entity Nesting: Individual Review schema must contain a nested itemReviewed property with a defined type to maintain entity context for search engines.

- Cache Invalidation: Persistent object caches like Redis must be flushed via WP-CLI to prevent stale aggregateRating data from overriding database updates.

- Validation: Use cURL commands and inspect X-Cache headers to confirm that Googlebot receives the updated JSON-LD payload without CDN minification interference.

Table of Contents

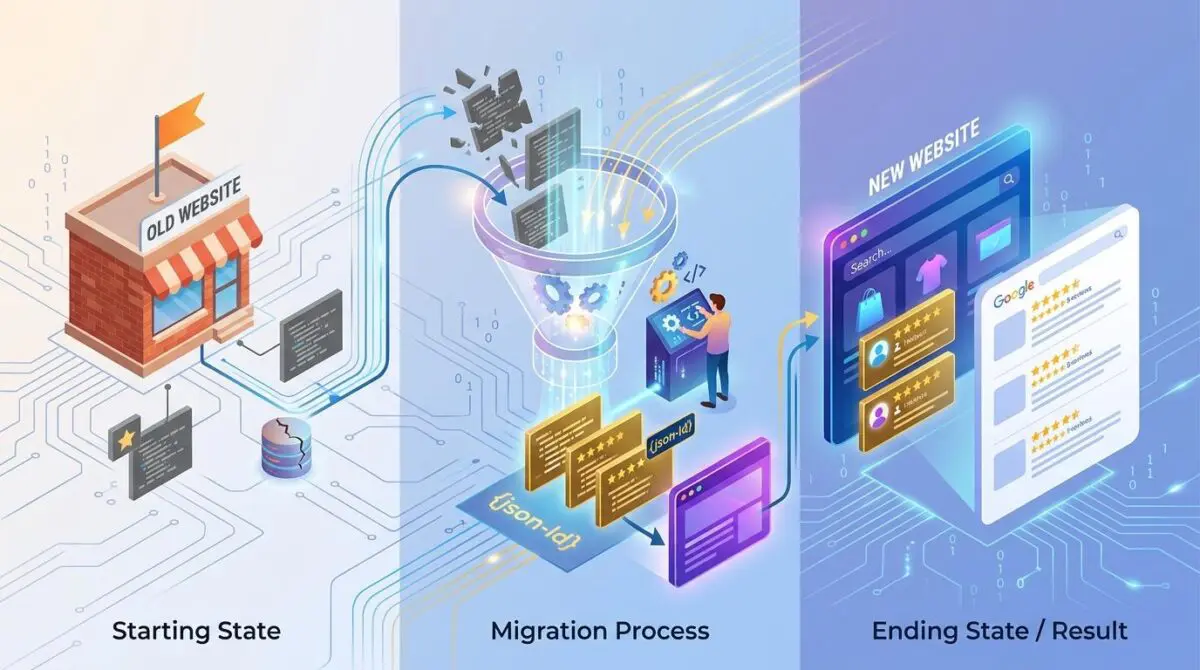

The Core Conflict: Schema Migration Failures

A recent technical SEO study by Milestone Research reveals that sites effectively implementing Review snippet rich results experience a 20% to 25% higher click-through rate compared to standard blue link results. Losing this enhancement during a site migration can devastate organic traffic and user acquisition metrics. This catastrophic drop often occurs when migrating from aggregateRating to individual Review schema.

Review snippet rich results are enhanced search listings that display star ratings and summary info from individual reviews or aggregate ratings. When you transition your structured data, the search engine’s rendering engine must re-evaluate the itemReviewed property. The engine needs this context to ensure the entity is still eligible for rich results.

If the individual Review markup lacks a globally unique identifier or a clear connection to a supported schema type, the snippet is suppressed. You will typically see a sudden drop in valid items in the Google Search Console enhancement report. Server logs will show increased Googlebot Smartphone activity without a corresponding update in the SERP display.
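To make the required shape concrete, here is a minimal sketch of a Review with a nested itemReviewed. The URL, product name, and reviewer are hypothetical placeholders, and the "#product" fragment is just one possible convention for the identifier, not something the schema mandates.

```python
import json

# Hypothetical page values used only for illustration.
PERMALINK = "https://example.com/products/widget/"
PRODUCT_ID = PERMALINK + "#product"

review = {
    "@context": "https://schema.org",
    "@type": "Review",
    "author": {"@type": "Person", "name": "Example Reviewer"},
    "reviewRating": {"@type": "Rating", "ratingValue": 4, "bestRating": 5},
    # The nested itemReviewed carries the entity context: an explicit
    # @type, a name, and an @id that points at the page's main entity.
    "itemReviewed": {
        "@type": "Product",
        "@id": PRODUCT_ID,
        "name": "Example Widget",
    },
}

json_ld = json.dumps(review, indent=2)
print(f'<script type="application/ld+json">\n{json_ld}\n</script>')
```

The printed script block is what the crawler should find in the page source once the migration is complete.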

Diagnostic Checkpoints: Identifying the Disconnect

When review snippets vanish, the issue is almost always a desynchronization within your technical stack. The parser loses the entity context, or the server delivers stale payload data to the crawler. Identifying the exact layer of failure is critical before pushing code changes.

Diagnostic Checkpoints

- Entity Nesting Conflict: Reviews require explicitly nested itemReviewed types for entity context.

- JSON-LD Serialization Errors: Invalid JSON syntax breaks script parsing during V8 rendering.

- Stale Object Cache Persistence: Persistent transients serve old schema after backend migration updates.

- Property Discontinuity: Missing mapping between legacy ratingValue and new reviewRating properties.

At the WordPress layer, entity nesting conflicts often occur when switching from a generic SEO plugin to a specialized review plugin. The new plugin might fail to hook into the primary query metadata. This leaves the reviews floating as disconnected data points in the DOM.

At the server layer, stale object cache persistence is a frequent culprit in modern environments using Redis or Memcached. The database might reflect the new individual Review schema, but persistent transients continue injecting the legacy aggregateRating data. This forces Googlebot's rendering service to process invalid or conflicting script blocks.
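One way to locate the failing layer is to inspect the HTML the server actually delivers. The sketch below uses only the Python standard library; the `diagnose` function and its messages are illustrative names, not part of any SEO tool. It flags unparsable JSON-LD blocks and pages where legacy aggregateRating markup sits alongside Review nodes. A page can legitimately carry both, so treat that second message as a prompt for manual review rather than a definite error.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of every application/ld+json script block."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._in_json_ld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self.blocks.append("")

    def handle_data(self, data):
        if self._in_json_ld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_json_ld = False


def iter_nodes(value):
    """Yield every dict nested anywhere inside a parsed JSON-LD value."""
    if isinstance(value, dict):
        yield value
        for child in value.values():
            yield from iter_nodes(child)
    elif isinstance(value, list):
        for child in value:
            yield from iter_nodes(child)


def diagnose(html):
    """Return a list of problems found in the page's JSON-LD blocks."""
    parser = JsonLdExtractor()
    parser.feed(html)
    problems, has_aggregate, has_review = [], False, False
    for index, raw in enumerate(parser.blocks):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            problems.append(f"block {index}: invalid JSON ({exc.msg})")
            continue
        for node in iter_nodes(data):
            has_aggregate = has_aggregate or "aggregateRating" in node
            has_review = has_review or node.get("@type") == "Review"
    if has_aggregate and has_review:
        problems.append("legacy aggregateRating and Review markup both present")
    return problems
```

Running `diagnose` against the output of a cached and an uncached request for the same URL can show whether the stale markup comes from the cache layer or from the application itself.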

Engineering Resolution Roadmap

Restoring your rich results requires a methodical approach to schema validation and cache invalidation. You must ensure that Googlebot receives a perfectly serialized, context-rich JSON-LD payload. Follow these sequential steps to resolve the schema conflicts.

- Audit and Map itemReviewed: Verify that every ‘Review’ type includes a nested ‘itemReviewed’ property with a defined ‘@type’ (e.g., Product). Use the JSON-LD @id property to link the review to the main entity on the page.

- Flush Persistent Transients: Run the WP-CLI command ‘wp transient delete --all’ and then flush the Redis/Memcached instance (for example with ‘wp cache flush’) so the server-side output reflects the new schema structure immediately.

- Enforce Canonical IDs: Modify functions.php so that the main entity declares an @id (typically derived from the permalink) and each review's itemReviewed references that same @id. This prevents Google from seeing them as disconnected data points.

- Trigger Indexing API: Send a POST request to the Google Indexing API for the affected URLs to request a re-crawl of the metadata outside of the standard crawl cycle. Google documents this API only for pages with JobPosting or BroadcastEvent markup, so for other page types use the Request Indexing option in the Search Console URL Inspection tool.
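The ID linking and the ratingValue-to-reviewRating mapping from the steps above can be sketched together. This is a minimal illustration under stated assumptions: `build_review_graph`, the shape of the legacy rows, and the "#product" fragment are all hypothetical, and your plugin's data model will differ.

```python
import json


def build_review_graph(permalink, product_name, legacy_reviews):
    """Build a JSON-LD @graph whose reviews reference the product by @id."""
    product_id = permalink + "#product"
    graph = [{"@type": "Product", "@id": product_id, "name": product_name}]
    for row in legacy_reviews:
        graph.append({
            "@type": "Review",
            "author": {"@type": "Person", "name": row["author"]},
            # The legacy flat ratingValue becomes a nested reviewRating object.
            "reviewRating": {
                "@type": "Rating",
                "ratingValue": row["ratingValue"],
                "bestRating": row.get("bestRating", 5),
            },
            # Reference the main entity by @id; @type is repeated so the
            # review keeps its context even when read in isolation.
            "itemReviewed": {"@type": "Product", "@id": product_id},
        })
    return {"@context": "https://schema.org", "@graph": graph}


if __name__ == "__main__":
    rows = [{"author": "Example Reviewer", "ratingValue": 4}]
    graph = build_review_graph("https://example.com/products/widget/", "Example Widget", rows)
    print(json.dumps(graph, indent=2))
```

Note that the Product and the Review do not share one @id (that would merge them into a single node); the review points at the product's identifier through itemReviewed.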

Auditing the itemReviewed property is the most critical phase of this roadmap. Every single review object must explicitly declare what it is reviewing. Linking the review to the main entity via a shared identifier prevents the parser from discarding the data.
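A rough audit of that rule might look like the following. It assumes the JSON-LD has already been parsed into Python objects, and it only inspects standalone Review nodes (top level or inside @graph), since a review nested under its parent's review property inherits context from that parent. `audit_reviews` is an illustrative name.

```python
def top_level_nodes(data):
    """Yield nodes at the top level of a parsed JSON-LD value or its @graph."""
    nodes = data if isinstance(data, list) else [data]
    for node in nodes:
        if isinstance(node, dict):
            # A node with @graph contributes its graph members instead of itself.
            yield from node.get("@graph", [node])


def audit_reviews(data):
    """Return one message per standalone Review that lacks entity context."""
    failures = []
    for node in top_level_nodes(data):
        if not isinstance(node, dict) or node.get("@type") != "Review":
            continue
        item = node.get("itemReviewed")
        if not isinstance(item, dict):
            failures.append("Review has no nested itemReviewed object")
        elif "@type" not in item:
            failures.append("itemReviewed is missing a defined @type")
    return failures
```

An empty list means every standalone review declares what it is reviewing; any message points to a node that the parser may treat as disconnected.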

Flushing persistent transients ensures that your server-side output matches your database state. Bypassing the standard crawl cycle via the Indexing API accelerates recovery. This forces Google to re-evaluate the metadata immediately rather than waiting for organic discovery.
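The flush step can be scripted so it runs as part of every deployment. The sketch below wraps two standard WP-CLI commands; the install path is a hypothetical placeholder, and the dry-run default only prints what would be executed. When a persistent object cache is active, transients live in that cache rather than the database, which is why the second command matters.

```python
import shlex
import subprocess

# Both are standard WP-CLI commands. `wp cache flush` clears the object
# cache, which is where transients live when Redis or Memcached is active.
FLUSH_COMMANDS = [
    ["wp", "transient", "delete", "--all"],
    ["wp", "cache", "flush"],
]


def flush_caches(wp_path, dry_run=True):
    """Run (or just print) the flush commands for the install at wp_path."""
    executed = []
    for command in FLUSH_COMMANDS:
        full = command + [f"--path={wp_path}"]
        executed.append(shlex.join(full))
        if dry_run:
            print("would run:", executed[-1])
        else:
            # check=True raises CalledProcessError if WP-CLI reports a failure.
            subprocess.run(full, check=True)
    return executed


if __name__ == "__main__":
    flush_caches("/var/www/html")  # hypothetical install path
```

Keeping the dry run as the default makes it harder to flush a production cache by accident while testing the script.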

Code Implementations for Schema Integrity

Executing the resolution requires precise modifications to your application and server environments. The following configurations enforce correct entity nesting and prevent aggressive caching during the migration phase. Implement these selectively based on your stack.

Fixing via functions.php

This filter hooks into the schema generation process to force the correct itemReviewed nesting. It ensures that the required type and name properties are dynamically populated from the post data.

    /* WordPress (functions.php): Force correct itemReviewed nesting */
    add_filter('wpseo_schema_review', function($data) {
        if (isset($data['itemReviewed'])) {
            $data['itemReviewed']['@type'] = 'Product';
            $data['itemReviewed']['name'] = get_the_title();
        }
        return $data;
    });

Fixing via NGINX

This server block directive prevents the caching of schema-heavy pages during the migration window. It forces proxy servers and browsers to request a fresh copy of the HTML payload.

    # NGINX: Prevent caching of schema-heavy pages during migration
    location ~* \.(html|php)$ {
        add_header Cache-Control 'no-store, no-cache, must-revalidate, proxy-revalidate, max-age=0';
        if_modified_since off;
        expires off;
        etag off;
    }

Fixing via Apache

This configuration uses the headers module to send an X-Robots-Tag header carrying the unavailable_after rule. Be aware that unavailable_after tells Google to stop showing the page in search results after the given date; it does not accelerate re-indexing. Apply it only to URLs that should expire, and keep the date in the future.

    # Apache: X-Robots-Tag unavailable_after (the URL drops out of results after this date)
    <IfModule mod_headers.c>
        Header set X-Robots-Tag "unavailable_after: 21-Jul-2025 15:00:00 PST"
    </IfModule>

Validation Protocol and Edge Cases

Deploying the code is only half the battle. You must rigorously validate the payload delivery to ensure Googlebot can successfully parse the new structure. Relying solely on local browser testing is insufficient.

Validation Protocol

- Run URL through Google Rich Results Test to verify enhancements.

- Execute curl command to verify Review count in raw source.

- Inspect X-Cache headers for cache MISS or BYPASS status.

- Perform GSC Live Test to confirm smartphone bot parsing success.
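The curl and header checks in this protocol can also be scripted. The following is a sketch using the Python standard library; it assumes your cache layer reports status in an X-Cache header (the header name varies by CDN) and it only spoofs the Googlebot user agent, so responses that depend on Googlebot's IP ranges will not be reproduced.

```python
import re
import urllib.request

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
REVIEW_TYPE = re.compile(r'"@type"\s*:\s*"Review"')


def fetch_as_googlebot(url):
    """Fetch url with a Googlebot user agent; returns (headers, html)."""
    request = urllib.request.Request(url, headers={"User-Agent": GOOGLEBOT_UA})
    with urllib.request.urlopen(request, timeout=10) as response:
        html = response.read().decode("utf-8", "replace")
        return dict(response.headers.items()), html


def served_fresh(headers):
    """True when the X-Cache header reports MISS or BYPASS."""
    lowered = {name.lower(): value.upper() for name, value in headers.items()}
    status = lowered.get("x-cache", "")
    return "MISS" in status or "BYPASS" in status


def count_reviews(html):
    """Count Review type declarations in the raw page source."""
    return len(REVIEW_TYPE.findall(html))
```

A typical check would call `fetch_as_googlebot` on a migrated URL, confirm `served_fresh` is true, and compare `count_reviews` against the number of reviews stored in the database.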

Even with perfect code, edge cases can suppress your rich results. A common conflict involves Cloudflare Edge Workers configured for aggressive HTML minification. These scripts can inadvertently strip out LD+JSON script tags entirely.

Alternatively, they might remove vital whitespace that the legacy parser requires. This results in an unparsable structured data error in Search Console. Always verify the raw source code bypassing the CDN to rule out edge-level interference.
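To rule out edge-level interference, you can compare the JSON-LD in the origin response with the JSON-LD in the CDN response. This sketch assumes you have already fetched both HTML documents as strings; `edge_interference` and its return labels are illustrative. Because it compares parsed JSON rather than raw text, harmless whitespace minification is not reported as a difference.

```python
import json
import re

# Quotes around the type value are optional because HTML minifiers may drop them.
JSON_LD = re.compile(
    r'<script[^>]*type=["\']?application/ld\+json["\']?[^>]*>(.*?)</script>',
    re.IGNORECASE | re.DOTALL,
)


def parsed_blocks(html):
    """Parse every JSON-LD block; unparsable blocks are recorded as None."""
    blocks = []
    for raw in JSON_LD.findall(html):
        try:
            blocks.append(json.loads(raw))
        except json.JSONDecodeError:
            blocks.append(None)
    return blocks


def edge_interference(origin_html, edge_html):
    """Classify how the edge copy's JSON-LD differs from the origin copy."""
    origin, edge = parsed_blocks(origin_html), parsed_blocks(edge_html)
    if len(edge) < len(origin):
        return "stripped"   # the edge removed one or more script blocks
    if None in edge and None not in origin:
        return "corrupted"  # the edge copy no longer parses as JSON
    if edge != origin:
        return "altered"    # both parse, but the data differs
    return "ok"
```

A result other than "ok" suggests the problem sits in the CDN configuration rather than in WordPress or the object cache.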

Autonomous Monitoring and Prevention

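Preventing future schema regressions requires shifting from reactive troubleshooting to proactive monitoring. Adding a continuous integration step that loads each template in a headless browser and validates the rendered JSON-LD is highly recommended. For live URLs, the Search Console URL Inspection API can supplement this check, since the Rich Results Test itself does not expose a public API. Together these checks help keep invalid schema out of production.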

You should also monitor server log files for an unusual volume of error status codes (4xx and 5xx) returned to Googlebot. Complex schema rendering can exhaust your crawl budget if the parser gets stuck in a loop. Automated log analysis tools are essential for tracking how often your API endpoints are accessed for schema data.
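A basic version of that log check is sketched below. It assumes the common combined access log format and identifies Googlebot by user-agent substring only, which can be spoofed; a production pipeline would also verify the crawler's IP. The function names are illustrative.

```python
import re
from collections import Counter

# Matches the quoted request line and the status code that follows it.
LOG_LINE = re.compile(r'"[A-Z]+ (?P<path>\S+) [^"]*" (?P<status>\d{3}) ')


def googlebot_status_counts(lines):
    """Count Googlebot responses per status class (2xx, 3xx, 4xx, 5xx)."""
    counts = Counter()
    for line in lines:
        if "Googlebot" not in line:
            continue
        match = LOG_LINE.search(line)
        if match:
            counts[match.group("status")[0] + "xx"] += 1
    return counts


def error_rate(counts):
    """Share of Googlebot requests that ended in a 4xx or 5xx response."""
    total = sum(counts.values())
    errors = counts["4xx"] + counts["5xx"]
    return errors / total if total else 0.0
```

Feeding the functions an open log file (which iterates line by line) and alerting when `error_rate` crosses a threshold you choose gives an early signal that the crawler is hitting failures.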

At Andres SEO Expert, we engineer advanced automation pipelines to monitor entity integrity at the enterprise level. Leveraging custom API alerts ensures that desynchronizations are caught before they impact organic visibility. This level of architectural oversight is critical for maintaining complex rich result profiles.

Conclusion

Restoring lost review snippets requires a surgical approach to structured data and server cache management. By enforcing strict entity nesting and validating payload delivery, you can rapidly recover your SERP enhancements. Always monitor your server logs to ensure search engines process your updates efficiently.
