Key Points

- Algorithmic Suppression: Google no longer treats syntactically valid markup as sufficient for display. Since August 2023, FAQ rich results are shown mainly for well-known, authoritative government and health websites, so valid schema on other domains is typically parsed but not rendered.

- WRS Timeouts: Schema injected by deferred JavaScript may not yet exist when the Web Rendering Service snapshots the page, which makes server-side payload injection the safer option.

- DOM Mismatches: FAQ answers that appear in the JSON-LD but are missing from the page HTML, or are hidden from users by CSS or JavaScript accordions, conflict with Google's user-visibility policies for structured data and can trigger silent suppression.

The Core Conflict: Syntax Validity vs. Algorithmic Eligibility

In the contemporary search ecosystem, one of the most perplexing anomalies an SEO engineer can encounter is the silent suppression of structured data. Specifically, the FAQ Structured Data Rich Result Eligibility error manifests as a near-total collapse of FAQ impressions in the Google Search Console Performance Report, despite the Enhancements report confirming perfect syntax validation. This creates a highly deceptive diagnostic environment where the JSON-LD or Microdata is technically flawless, yet the visual rich result is entirely absent from the Search Engine Results Pages.

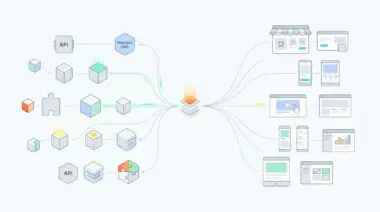

To understand this failure state, we must examine how Googlebot and the Web Rendering Service process structured data following recent core updates. Valid markup was never a formal guarantee of a rich result, but in practice it was usually enough to earn FAQ snippets. Today, parsing and display are clearly decoupled. Googlebot can still ingest your FAQ schema to understand the page and its entities, and may use it for generative search features. However, the ranking engine will decline to allocate the pixels required for the expandable FAQ accordion if the domain does not meet its authority criteria, which are commonly discussed in terms of E-E-A-T. In this silent failure state your markup is parsed and reported as valid but visually discarded, which reduces organic click-through rates.

Diagnostic Checkpoints: Identifying the Desynchronization

When investigating a discrepancy between technical validation and visual rendering, the root cause typically resides in a desynchronization between the server payload, the rendering queue, and algorithmic classifiers. The following checkpoints isolate the specific layer of failure.

Diagnostic Checkpoints

- Algorithmic Authority Deprecation: E-E-A-T score below threshold suppresses valid schema UI.

- WRS Rendering Timeout: JS-deferred schema exceeds Googlebot rendering timeout window.

- Content-Markup Mismatch (Hidden Content): Hidden content discrepancies cause silent snippet suppression.

The first potential point of failure is Algorithmic Authority Deprecation. In August 2023, Google announced that FAQ rich results would be shown only for well-known, authoritative government and health websites. If your WordPress architecture relies on automated FAQ generators pulling from low-authority custom post types, or if the domain has been affected by a Helpful Content Update classifier, the ranking engine will suppress the UI element entirely. The schema remains valid in the JSON-LD validator, but the domain lacks the authority required for the rich result to be displayed.

The second failure vector occurs at the rendering layer. Google's Web Rendering Service operates with a finite computational window. If your FAQ schema is injected via a client-side script or a heavy schema plugin that relies on late-stage JavaScript execution, you risk a WRS Rendering Timeout. In headless WordPress configurations, or in environments using aggressive asset optimization plugins, the scripts responsible for schema injection may be deferred. Googlebot may then index the initial HTML payload before the JSON-LD has been written into the Document Object Model, creating a mismatch between the raw crawl and the rendered view.
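One way to confirm whether the schema depends on client-side execution is to inspect the unrendered HTML directly. The sketch below is a minimal illustration, not a definitive tool: the class and function names are hypothetical, it uses only the Python standard library, and it assumes the FAQPage object sits at the top level of the JSON-LD block (nested `@graph` structures are not handled).

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def raw_html_has_faqpage(html: str) -> bool:
    """Return True if the unrendered HTML already contains FAQPage JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed block: treat it as absent
        items = data if isinstance(data, list) else [data]
        if any(isinstance(i, dict) and i.get("@type") == "FAQPage" for i in items):
            return True
    return False
```

If this returns False for the raw server response while the rendered page passes validation, the schema is most likely being injected by JavaScript.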

The third vector involves Content-Markup Mismatches. Google's structured data guidelines require that marked-up content be visible to users on the page. Many modern WordPress themes use complex JavaScript accordions or CSS rules to hide FAQ answers until a user interaction event occurs. If the JSON-LD contains the full text but the HTML DOM at initial load does not, for example because answers are fetched only after a click, algorithmic classifiers may flag the schema as manipulative, resulting in silent suppression.

The Engineering Resolution: Forcing Server-Side Synchronization

Resolving this anomaly requires bypassing client-side rendering dependencies and forcing the structured data into the initial server response. The schema can then be parsed from the first HTTP response, without depending on the Web Rendering Service queue.

Engineering Resolution Roadmap

- Audit Search Appearance Performance: Navigate to GSC > Performance > Search Results. Filter by ‘Search Appearance’ and look for ‘FAQ snippets’. If the line chart shows a vertical drop to zero but ‘Valid’ counts in the Enhancements tab remain stable, the issue is algorithmic eligibility, not technical syntax.

- Move Schema to Server-Side Injection: Bypass JavaScript-based schema injection. Hard-code the JSON-LD into the header of your WordPress template files (header.php) or use a filter to inject it directly into the initial HTML payload, so the schema is available without rendering. Placing it within the first 14KB of the document is a sensible target.

- Verify DOM Visibility: Open Chrome DevTools, disable JavaScript, and reload the page to check whether the FAQ text is still visible. If the text disappears, it is likely injected or hidden by a JS-based accordion. Refactor the HTML/CSS so the content is present in the source code, and use ARIA attributes such as aria-expanded to describe the accordion state rather than to hide content.

The primary objective is to place the JSON-LD payload in the initial HTML response, ideally within the first fourteen kilobytes of the document. That figure corresponds roughly to TCP's initial congestion window (about ten segments of roughly 1,460 bytes), which is what a server can send in the first round trip under slow start. It is best treated as a delivery heuristic rather than a documented Googlebot requirement. By hard-coding the schema into the header of your template files, you remove the dependency on JavaScript execution and the associated risk of WRS timeouts. Furthermore, auditing the Search Appearance performance data helps isolate whether the issue is algorithmic or technical. A sudden, vertical drop to zero impressions while enhancement validations remain stable is the typical signature of algorithmic suppression, whereas intermittent drops suggest rendering timeouts.
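The fourteen-kilobyte target can be checked mechanically against a saved copy of the server response. The following is a rough sketch under stated assumptions: the function names and the `INITIAL_WINDOW_BYTES` constant are illustrative, and the offset is measured on the uncompressed UTF-8 payload, whereas the bytes actually sent on the wire are usually compressed.

```python
# Heuristic threshold taken from the fourteen-kilobyte target discussed above.
INITIAL_WINDOW_BYTES = 14 * 1024


def jsonld_byte_offset(html: str, marker: str = "application/ld+json") -> int:
    """Byte offset of the first JSON-LD script type in the UTF-8 payload, or -1."""
    return html.encode("utf-8").find(marker.encode("utf-8"))


def within_initial_window(html: str) -> bool:
    """True if a JSON-LD block starts inside the first 14 KB of the document."""
    offset = jsonld_byte_offset(html)
    return 0 <= offset < INITIAL_WINDOW_BYTES
```

A result of False does not mean the schema is ignored; it only indicates that the block sits later in the document than the heuristic suggests.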

Equally critical is the verification of DOM visibility. Engineers should use browser developer tools to disable JavaScript execution completely and audit the raw HTML response. If the FAQ text disappears or is obscured by CSS rules, the frontend architecture must be refactored. Ensuring the text is present in the unrendered source code, and using ARIA attributes such as aria-expanded to describe the accordion state, aligns the visual presentation with the structured data payload and satisfies Google's visibility requirements.
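The same audit can be approximated offline by comparing each answer in the JSON-LD against the text of the raw HTML. This is a simplified sketch with hypothetical names: it only checks that the answer text exists outside `script`, `style`, and `template` elements, it does not evaluate CSS rules such as `display: none`, and it assumes the answers in the schema are plain text rather than HTML.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text nodes, ignoring script, style and template content."""

    SKIPPED = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so formatting differences do not matter."""
    return " ".join(text.split()).lower()


def answers_missing_from_html(html: str, faq_page: dict) -> list[str]:
    """Return the questions whose answer text is absent from the raw HTML."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    page_text = normalize(" ".join(parser.chunks))
    missing = []
    for question in faq_page.get("mainEntity", []):
        answer = question.get("acceptedAnswer", {}).get("text", "")
        if normalize(answer) not in page_text:
            missing.append(question.get("name", ""))
    return missing
```

Any question returned by this function is a candidate for the content-markup mismatch described earlier and deserves a manual check in the browser.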

Code Implementations: Bypassing the Render Queue

The following technical implementations provide robust methods for injecting structured data directly from the server, ensuring immediate availability to Googlebot’s parsing engine without relying on client-side execution.

Fixing via WordPress Functions

This implementation hooks directly into the WordPress header sequence, bypassing heavy schema plugins. By extracting the FAQ metadata and encoding it directly into the initial HTML payload, we guarantee the schema is available before any JavaScript is executed.

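    // Print FAQ JSON-LD into the initial HTML response; no JavaScript involved.
    function inject_faq_schema_directly() {
        // Assumes 'faq_data' already holds a complete schema.org FAQPage array.
        $faq_meta = is_single() ? get_post_meta( get_the_ID(), 'faq_data', true ) : '';
        if ( ! empty( $faq_meta ) ) {
            // wp_json_encode() is WordPress's safer wrapper around json_encode().
            echo '<script type="application/ld+json">' . wp_json_encode( $faq_meta ) . '</script>';
        }
    }
    add_action( 'wp_head', 'inject_faq_schema_directly', 1 );

Fixing via NGINX Configuration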

In architectures where schema might be served or validated as standalone JSON files, it is important to ensure Googlebot is not accidentally prevented from crawling and indexing these assets. This NGINX directive sets an explicit X-Robots-Tag header on those files. Note that the header only states indexing permissions; it does not override a robots.txt disallow rule.

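    # Applies to standalone JSON / JSON-LD files only, not to HTML pages.
    location ~* \.(jsonld|json)$ {
        add_header X-Robots-Tag "index, follow";
        # Caution: this hides requests for these files from access-log analysis.
        access_log off;
    }

Fixing via Apache Configuration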

For environments utilizing Apache, the following directives enforce the same indexing permissions at the server level, ensuring that any externalized structured data dependencies are fully accessible to the crawling infrastructure.

    # Requires mod_headers to be enabled.
    <FilesMatch "\.(jsonld|json)$">
        Header set X-Robots-Tag "index, follow"
    </FilesMatch>

Validation Protocol and Edge Case Scenarios

Once the server-side injection is deployed, rigorous validation is required to confirm that the desynchronization has been resolved. Standard validation tools must be augmented with manual server response analysis.

Validation Protocol

- Confirm URL is eligible via the Rich Results Test tool.

- Verify ‘FAQPage’ string exists in GSC Live Test HTML.

- Ensure X-Robots-Tag does not serve ‘noindex’ via curl -I.

- Validate that JSON-LD isn’t blocked by Content Security Policy (CSP).
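The X-Robots-Tag item in the checklist above can be automated by feeding the output of `curl -I` into a small parser. This is a hedged sketch: the function name is hypothetical, and it only looks for the `noindex` and `none` directives, with or without a user-agent prefix such as `googlebot:`.

```python
def blocks_indexing(curl_head_output: str) -> bool:
    """True if any X-Robots-Tag header in the response carries noindex or none."""
    for line in curl_head_output.splitlines():
        name, sep, value = line.partition(":")
        if not sep or name.strip().lower() != "x-robots-tag":
            continue
        for part in value.split(","):
            # Drop an optional "googlebot:" style prefix before comparing.
            token = part.split(":")[-1].strip().lower()
            if token in {"noindex", "none"}:
                return True
    return False
```

For example, the text captured from `curl -I` for the page under test can be passed straight to `blocks_indexing`, and a True result should be treated as a blocker.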

Beyond standard validation, engineers must account for complex edge cases within the modern delivery stack. A frequent, yet rarely diagnosed, issue occurs at the Content Delivery Network layer. When Cloudflare Workers are used for aggressive HTML minification, poorly configured regular expressions may inadvertently strip script tags designated as application/ld+json to reduce the overall payload size. Alternatively, these workers might relocate the script tags to the footer, moving them far from the top of the document and undoing the benefit of early server-side placement.

Another critical edge case involves caching layers such as Varnish. If the caching proxy misconfigures the forwarded protocol headers, the server-side injection may output HTTP URLs within the JSON-LD payload while the canonical page is served over HTTPS. This creates a mismatch between the URLs in the entity graph and the canonical URL, which can cause Google to disregard the schema and leave the rich result suppressed.
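A protocol mismatch of this kind can be detected by walking the parsed JSON-LD and comparing every same-host URL against the canonical scheme. The sketch below uses a hypothetical function name and assumes the JSON-LD has already been decoded into Python dictionaries and lists.

```python
from urllib.parse import urlparse


def mismatched_scheme_urls(jsonld, canonical_url: str) -> list[str]:
    """Collect URLs on the canonical host whose scheme differs from the canonical one."""
    canonical = urlparse(canonical_url)
    found = []

    def walk(node):
        if isinstance(node, dict):
            for value in node.values():
                walk(value)
        elif isinstance(node, list):
            for value in node:
                walk(value)
        elif isinstance(node, str):
            try:
                parsed = urlparse(node)
            except ValueError:
                return  # not a parseable URL; ignore free text
            same_host = parsed.hostname == canonical.hostname
            if same_host and parsed.scheme in ("http", "https") and parsed.scheme != canonical.scheme:
                found.append(node)

    walk(jsonld)
    return found
```

URLs on other hosts, such as the schema.org context, are deliberately ignored so that only the site's own references are reported.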

Autonomous Monitoring and Enterprise Prevention

Resolving the immediate error is only the first phase of enterprise entity management. To prevent future schema degradation, infrastructure must be monitored autonomously. Relying on manual Google Search Console audits is insufficient for large-scale architectures. Engineers should implement automated data pipelines utilizing the Google Search Console API to extract daily search appearance impressions specifically filtered for FAQ snippets. By routing this data into a centralized data warehouse, anomaly detection algorithms can trigger immediate alerts the moment visual eligibility drops, long before organic traffic metrics reflect the failure.
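The anomaly detection step does not need to be elaborate to be useful. The sketch below classifies a series of daily FAQ impressions, assumed to have been exported already from the Search Console API into a plain list ordered oldest first. The function name, window sizes, and the 10% threshold are illustrative assumptions rather than recommended values.

```python
from statistics import mean


def classify_faq_impressions(daily, baseline_days=7, recent_days=3, drop_ratio=0.1):
    """Label the most recent days relative to the preceding baseline window."""
    if len(daily) < baseline_days + recent_days:
        return "insufficient_data"
    baseline = mean(daily[-(baseline_days + recent_days):-recent_days])
    if baseline == 0:
        return "no_baseline"
    recent = daily[-recent_days:]
    # A day counts as "low" when it falls below drop_ratio of the baseline mean.
    low_days = [value for value in recent if value < baseline * drop_ratio]
    if len(low_days) == recent_days:
        return "sustained_drop"      # consistent with algorithmic suppression
    if low_days:
        return "intermittent_drop"   # consistent with rendering timeouts
    return "stable"
```

The two drop labels mirror the distinction drawn earlier between a vertical drop to zero and intermittent drops, and either one could be wired to an alert.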

Furthermore, continuous log-file analysis is mandatory. Parsing server access logs to verify that Googlebot consistently receives successful status codes when requesting structured data-heavy pages ensures the crawling pipeline remains unobstructed. Combining API-driven impression monitoring with granular log analysis creates a robust, proactive defense against algorithmic shifts and rendering timeouts, securing the integrity of your generative search visibility.
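As a starting point for that log analysis, the following sketch tallies the status codes served to requests whose user agent claims to be Googlebot. It assumes the common "combined" access log format and uses hypothetical names. Because user-agent strings can be spoofed, a production pipeline would also need to verify the requesting IP addresses, which this example does not attempt.

```python
import re
from collections import Counter

# Matches the request, status code and trailing user-agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_status_counts(lines):
    """Count HTTP status codes for requests whose user agent mentions Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            counts[match.group("status")] += 1
    return counts
```

A rising share of 5xx or 4xx responses in this tally is a signal to investigate before impressions are affected.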

Conclusion

The suppression of technically valid FAQ structured data represents a fundamental shift in how search engines allocate rendering resources. By migrating schema injection to the server side, ensuring strict DOM visibility, and monitoring the infrastructure autonomously, engineers can restore rich result eligibility and protect their entity graph.

Navigating the intersection of technical SEO, server architecture, and generative search requires a precise roadmap. If you need to future-proof your enterprise stack, resolve deep-level crawl anomalies, or implement AI-driven SEO automation, connect with Andres at Andres SEO Expert.